【Class】Stanford CS149 Parallel Computing

学习一下“金字塔尖”课程,全英文不知道能不能看懂😭(自己的课程倒头就睡,别人的课程逐帧学习😜) The goal of this course is to provide a deep understanding of the fundamental principles and engineering trade-offs involved in designing modern parallel computing systems as well as to teach parallel programming techniques necessary to effectively utilize these machines. Because writing good parallel programs requires an understanding of key machine performance characteristics, this course will cover both parallel hardware and software design

课程

2023年课程视频 + 2025年PPT

课程视频

Stanford CS149 I Parallel Computing I 2023 I Kayvon Fatahalian and Kunle Olukotun - YouTube

课程信息

gfxcourses.stanford.edu/cs149/fall25/

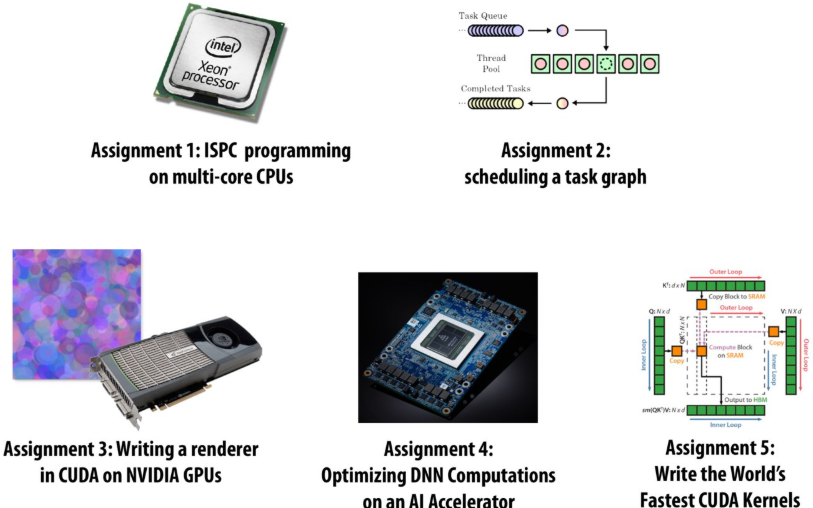

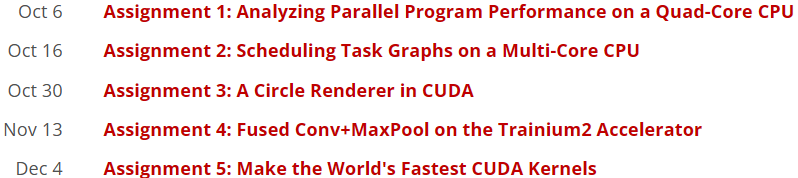

Programming Assignments

Written Assignments

Lecture 1: Why Parallelism? Why Efficiency?

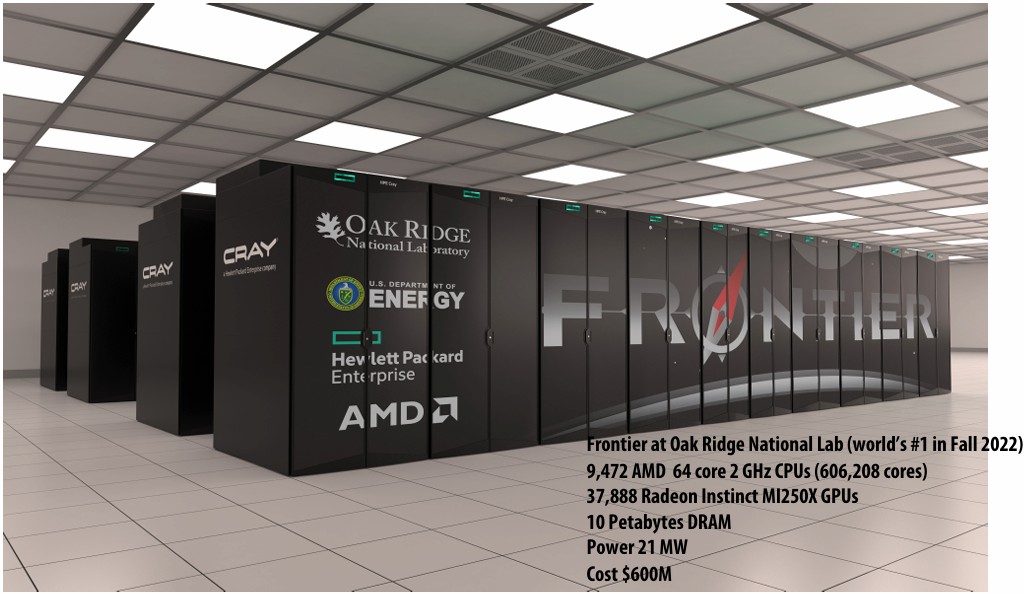

A parallel computer is a collection of processing elements that cooperate to solve problems quickly

collection of processing elements: We’re going to use multiple processing elements to get it

quickly: We care about performance, and we care about efficiency

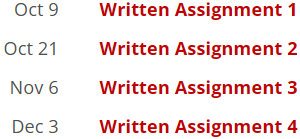

Speedup

One major motivation of using parallel processing: achieve a speedup

For a given problem:

Communication limited the maximum speedup achieved

Minimizing the cost of communication improved speedup

Imbalance in work assignment limited speedup

Improving the distribution of work improved speedup

Communication costs can dominate a parallel computation, severely limiting speedup

Course theme

Course theme 1

Designing and writing parallel programs … that scale!

Parallel thinking

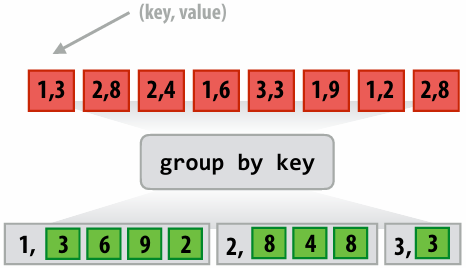

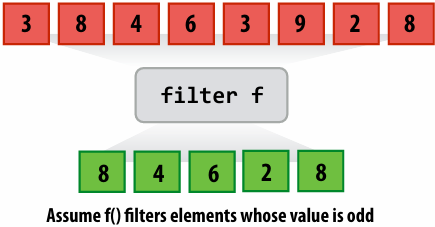

1.Decomposing(分解) work into pieces that can safely be performed in parallel

2.Assigning work to processors

3.Managing communication / synchronization(同步) between the processors so that it does not limit speedup

Abstractions(抽象) / mechanisms(机制) for performing the above tasks

Writing code in popular parallel programming languages

Course theme 2

Parallel computer hardware implementation: how parallel computers work

Mechanisms used to implement abstractions efficiently

1.Performance(性能) characteristics of implementations

2.Design trade-offs(权衡): performance vs. convenience vs. cost

Why do I need to know about hardware?

1.Because the characteristics of the machine really matter

2.Because you care about efficiency and performance

Course theme 3

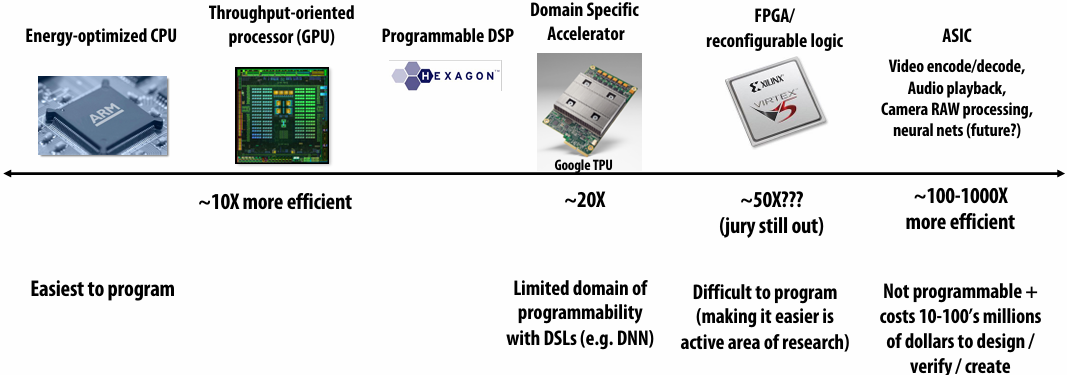

Thinking about efficiency

FAST != EFFICIENT

Just because your program runs faster on a parallel computer, it does not mean it is using the hardware efficiently

Programmer’s perspective: make use of provided machine capabilities

HW(HardWare) designer’s perspective: choosing the right capabilities(功能) to put in system (performance / cost, cost = silicon area?, power?, etc.)

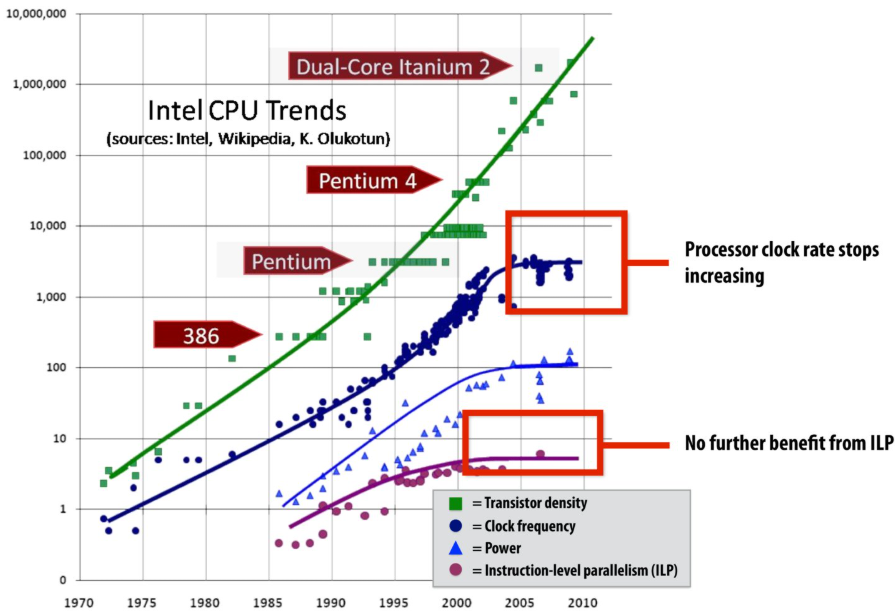

Until ~ 20 years ago: two significant reasons for processor performance improvement

1.Exploiting(利用) instruction-level(指令级) parallelism(superscalar(超标量) execution)

2.Increasing CPU clock frequency

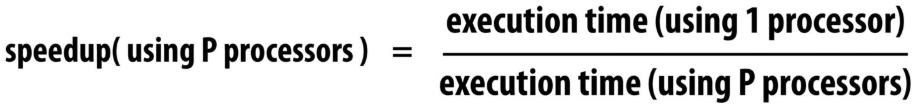

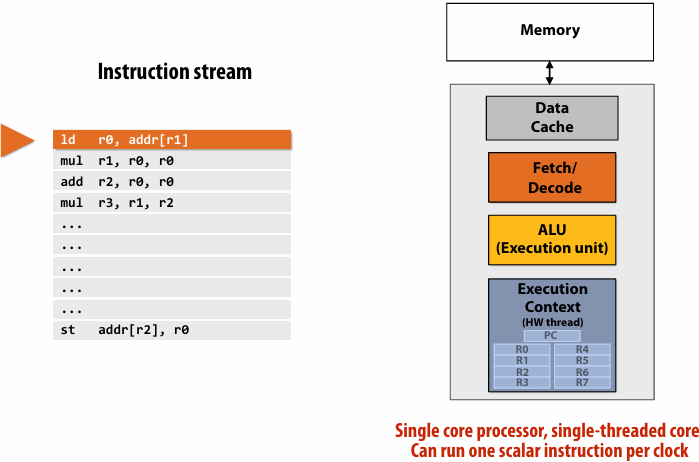

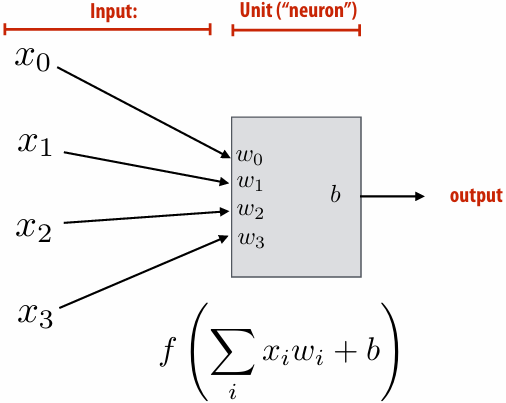

What is a program?(from a processor’s perspective)

A program is just a list of processor instructions

What is an instruction?

It describes an operation for a processor to perform

Executing an instruction typically modifies the computer’s state

computer’s state: the values of program data, which are stored in a processor’s registers or in memory

What does a processor do?

A processor executes instructions

executes one instruction per clock

Superscalar execution: processor automatically finds (Or the compiler finds independent instructions at compile time and explicitly encodes dependencies in the compiled binary(二进制文件)) independent instructions in an instruction sequence and executes them in parallel on multiple execution units

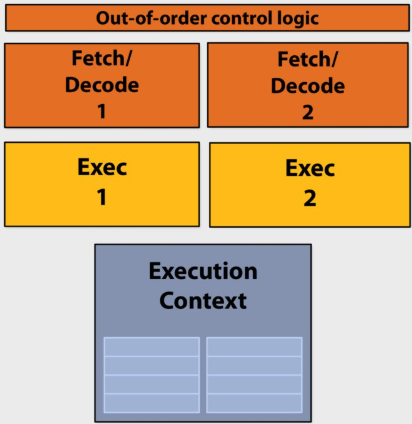

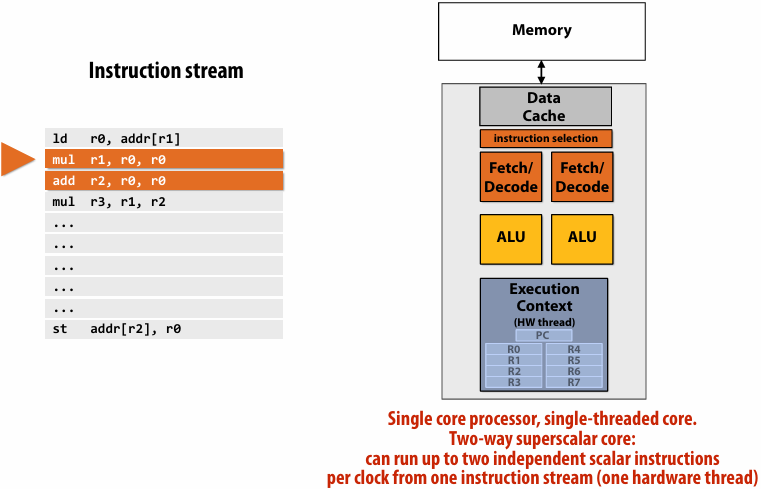

Superscalar processor

This processor can decode and execute up to two instructions per clock

Diminishing(递减) returns(收益) of superscalar execution

Most available ILP(instruction-level parallelism) is exploited by a processor capable of issuing(发出) four instructions per clock(Little performance benefit from building a processor that can issue more)

ILP tapped out(已达极限) + end of frequency scaling(扩展)

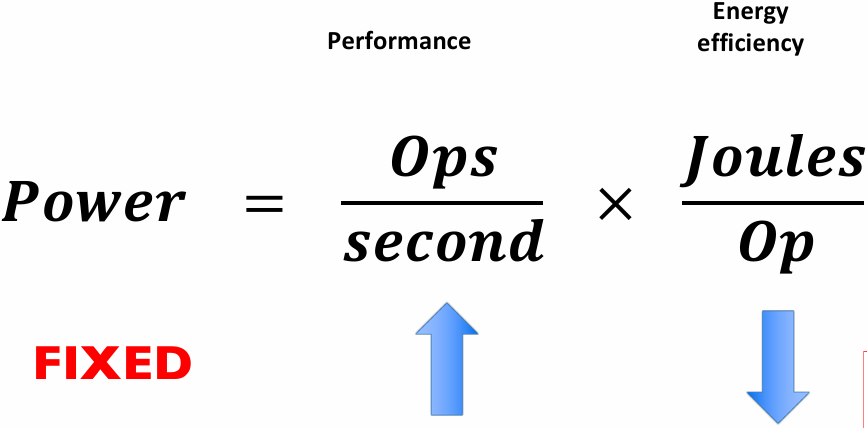

The “power wall”

Power consumed by a transistor:

Static power: transistors burn power even when inactive due to leakage

High power = high heat

Power is a critical design constrant in modern processors

Maximum allowed frequency determined by processor’s core voltage

Single-core performance scaling

The rate of single-instruction stream performance scaling has decreased(almost to zero)

1.Frequency scaling limited by power

2.ILP scaling tapped out

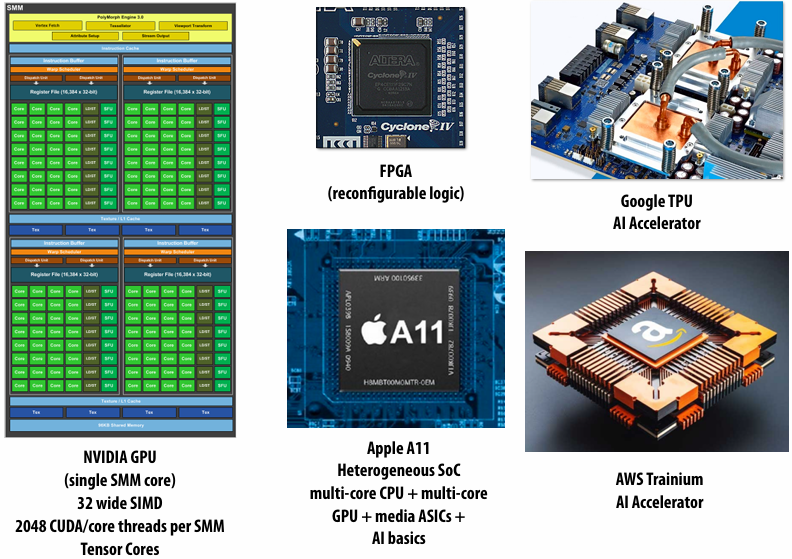

Architects are now building faster processors by adding more execution units that run in parallel(Or units that are specialized for a specific task: like graphics, or audio / video playback)

Software must be written to be parallel to see performance gains. No more free lunch for software developers!

| 特性 | 指令级并行(ILP) | 手动编写的并行(TLP/DLP) |

|---|---|---|

| 负责人 | 硬件(CPU) | 程序员(软件) |

| 代码形式 | 普通的顺序代码 | 特殊的多线程/向量化代码 |

| 程序员负担 | 零负担 (自动提升) | 负担沉重 (需设计架构、调试并发 BUG) |

| 并行规模 | 很小 (通常每个时钟周期几条指令) | 很大 (可以扩展到成千上万个核心) |

| 限制因素 | 指令间的依赖关系、电路复杂度 | 算法的可拆分性、同步开销 (阿姆达尔定律) |

| 粒度 | 极细(指令级)。处理的是加法、乘法、访存等最基本的指令 | 较粗(线程级或任务级)。程序员将一个大任务拆分成多个子任务(例如:处理图像时,把图像分成四块,交给四个核心处理) |

| 实现方式 | 通过流水线(Pipelining)、乱序执行(Out-of-Order Execution)、多发射(Multiple Issue)和分支预测等技术 | 程序员需要考虑如何拆分任务、如何处理线程间的同步(锁、信号量)、如何避免死锁以及如何进行数据交换 |

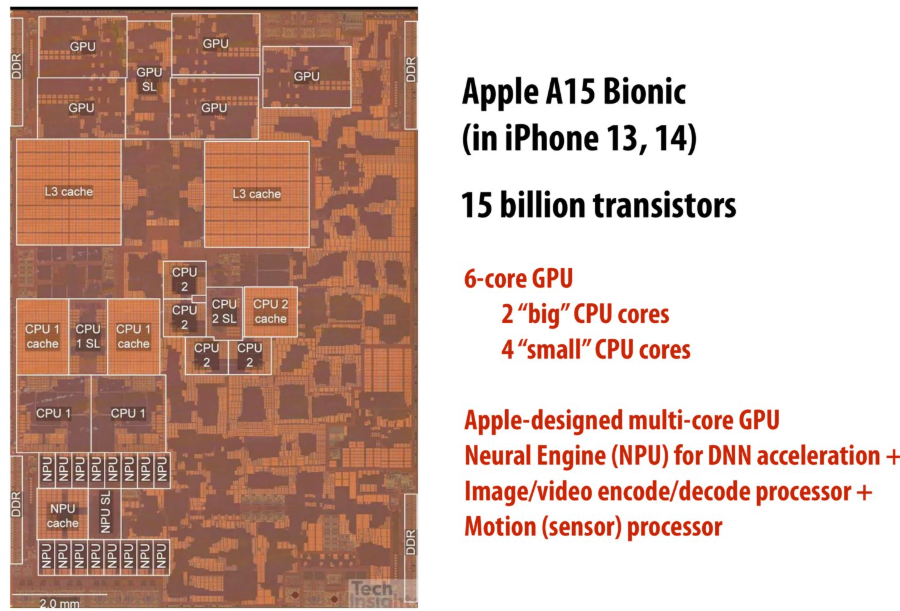

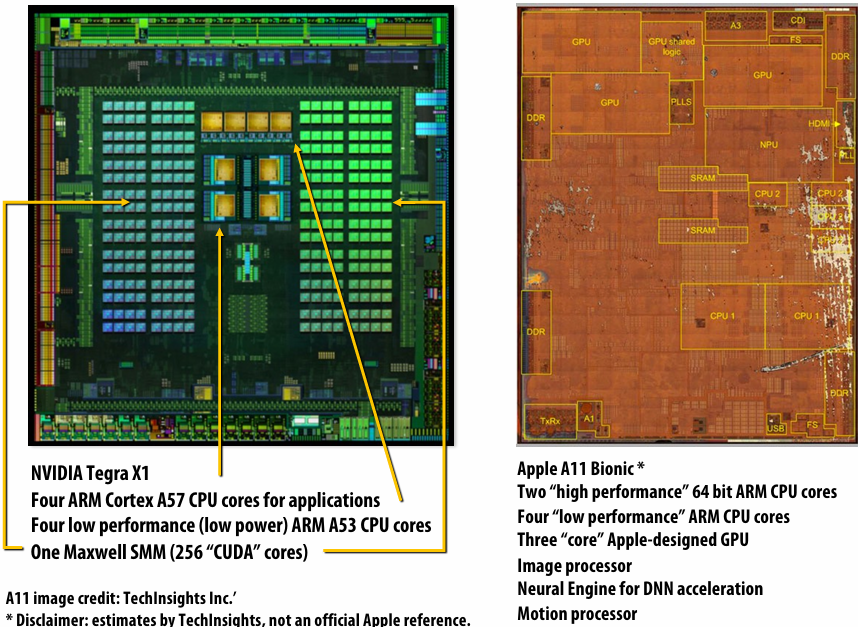

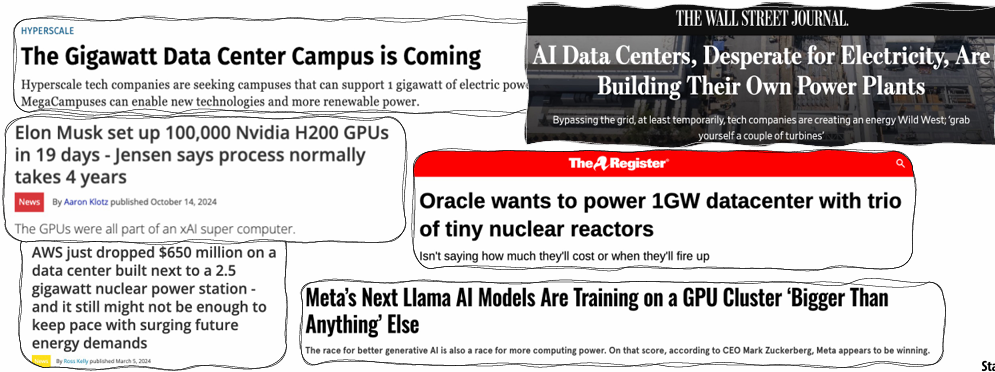

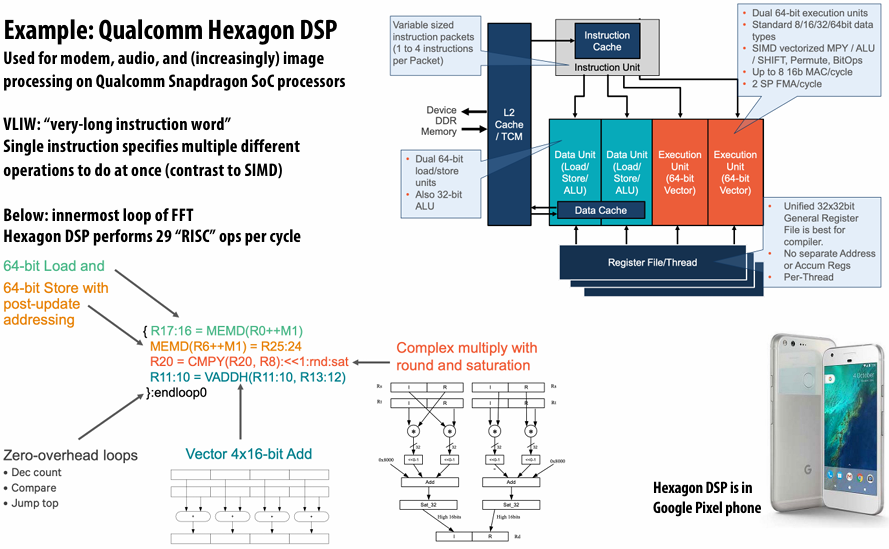

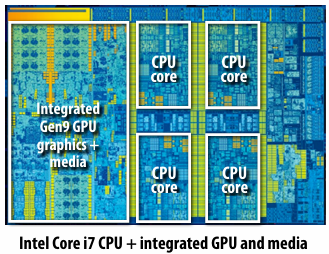

Mobile parallel processing

Power constraints also heavily influence the design of mobile systems

What is a big concern in all computing?

Power

Two reasons to save power

Run at higher performance for a fixed(固定的) amount of time

Power = heat If a chip gets too hot, it must be clocked down(减低频率) to cool off(Another reason: hotter systems cost more to cool)

Run at sufficient performance for a longer amount of time

Power = battery Long battery life(续航时间) is a desirable feature in mobile devices

Specialized processing is ubiquitous in mobile systems

Motion(sensor)(运动(传感器))

Motion(sensor)(运动(传感器))

Parallel + specialized HW

Modern systems not noly use many processing units, but also utilize specialized processing units to achieve high levels of power efficiency

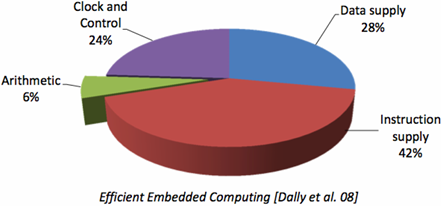

Achieving efficient processing almost always comes down to(归结为) accessing data efficiently

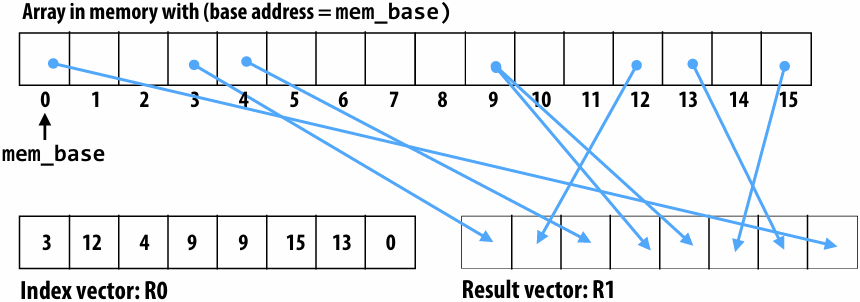

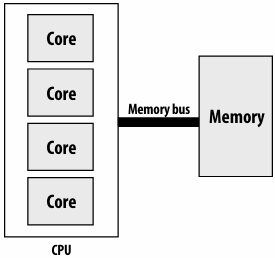

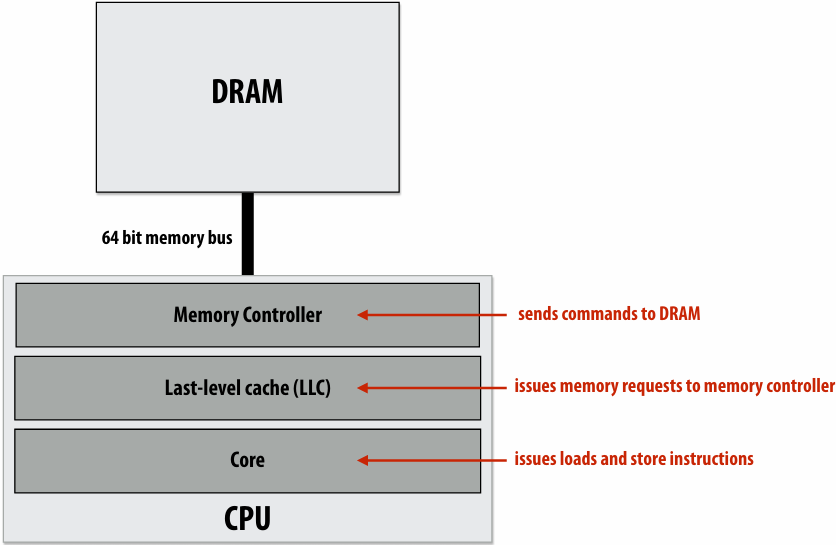

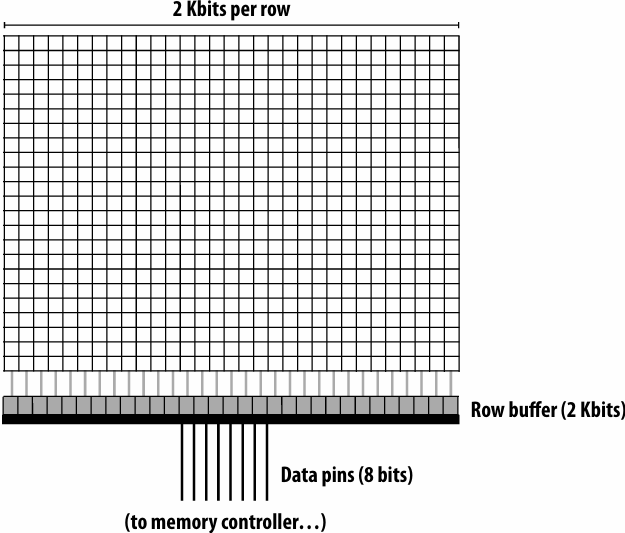

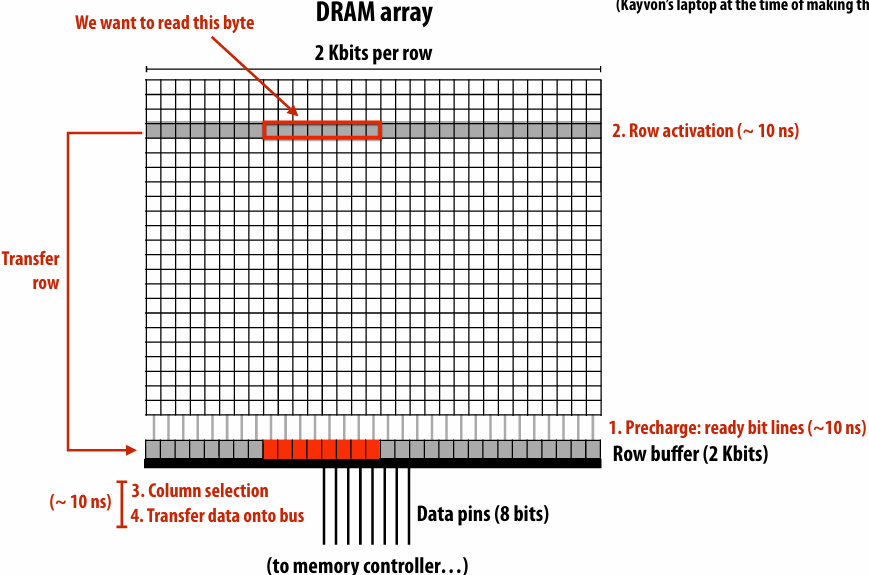

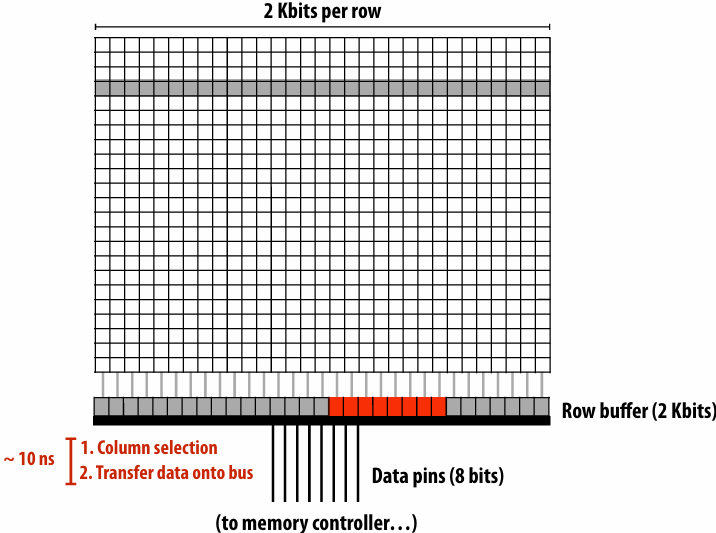

What is memory?

A program’s memory address space

A computer’s memory is organized as an array of bytes

Each byte is identified by its “address” in memory(its position in this array)(We’ll assume memory is byte-addressable)

Load: an instruction for accessing the contents of memory

Memory access latency(延迟)

The amount of time it takes the memory system to provide data to the processor

Stalls(停顿)

A processor “stalls” (can’t make progress) when it cannot run the next instruction in an instruction stream because future instructions depend on a previous instruction that is not yet complete

Accessing memory is a major source of stalls

Memory access times ~ 100’s of cycles, is a measure of latency

What are caches?

- A cache is a hardware implementation detail that does not impact the output of a program, only its performance

- Cache is on-chip storage that maintains a copy of a subset(子集) of the values in memory

- If an address is stored “in the cache” the processor can load/store to this address more quickly than if the data resides(驻留) only in DRAM

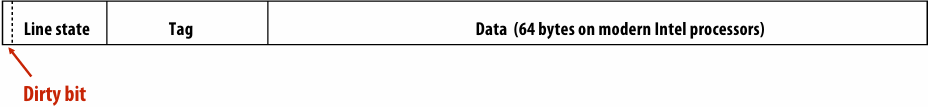

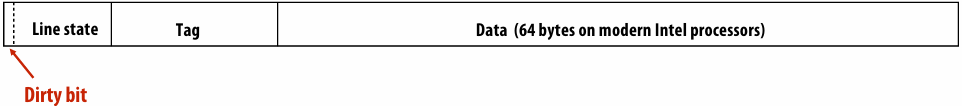

- Caches operate at the granularity(粒度) of “cache lines”

There are two forms of “data locality(局部性)” in this sequence:

Spatial(空间) locality: loading data in a cache line “preloads(预加载)” the data needed for subsequent(后续) accesses to different address in the same line, leading to cache hits

Temporal(时间) locality: repeated accesses to the same address result in hits

Caches reduce length of stalls(reduce memory access latency)

Processors run efficiency when they access data that is resident(驻留) in caches

Caches reduce memory access latency when processors accesses data that they have recently accessed!(Caches also provide high bandwidth(带宽) data transfer(传输))

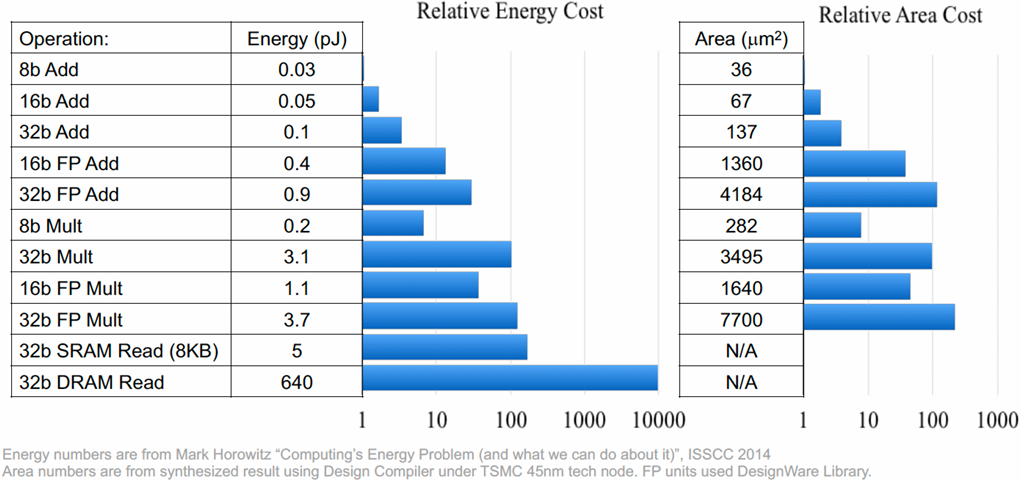

Data movement has high energy cost

Rule of thumb(经验法则) in modern system design: always seek to reduce amount of data movement in a computer

Summary

Single-thread-of-control performance is improving very slowly

To run programs significantly faster, programs must utilize multiple processing elements or specialized processing hardware

Which means you need to know how to reason about(推理) and write parallel and efficient code

Writing parallel programs can be challenging

Requires problems partitioning(划分), communication, synchronization

Knowledge of machine characteristics(特性) is important

In particular, understanding data movement!

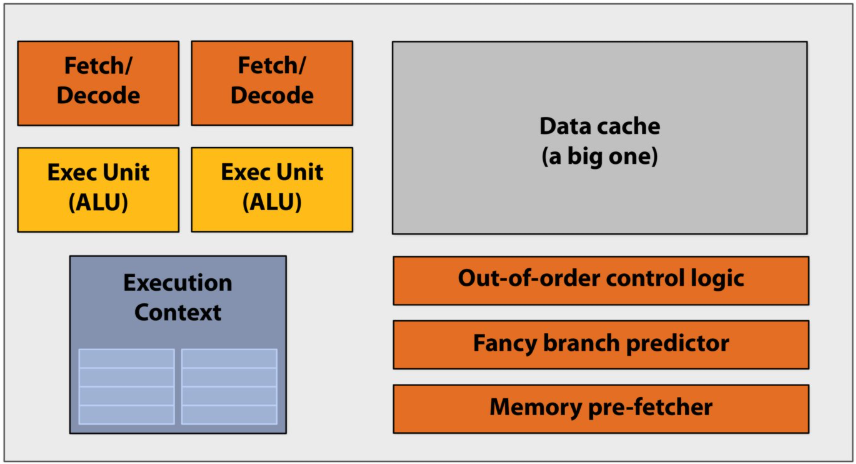

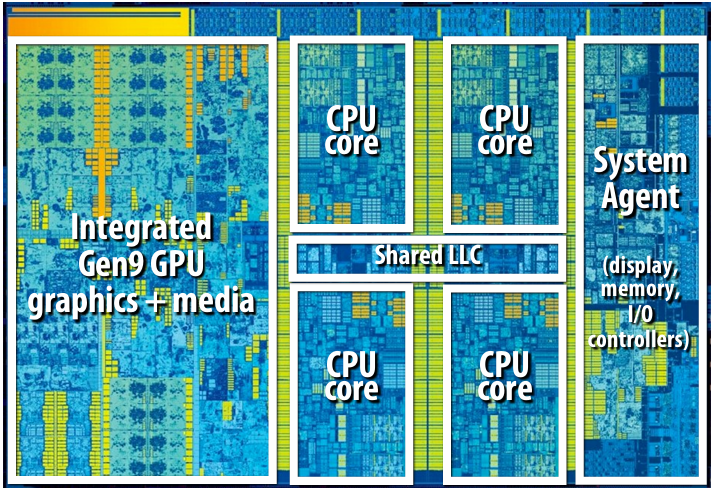

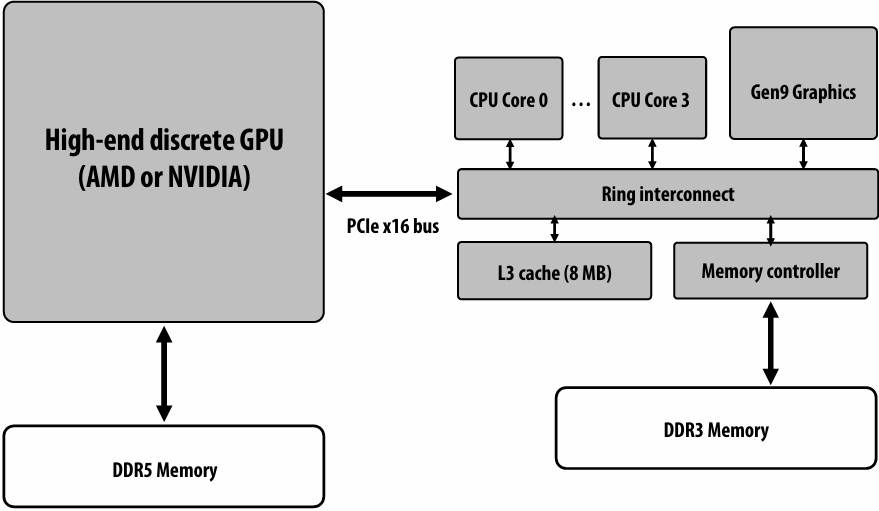

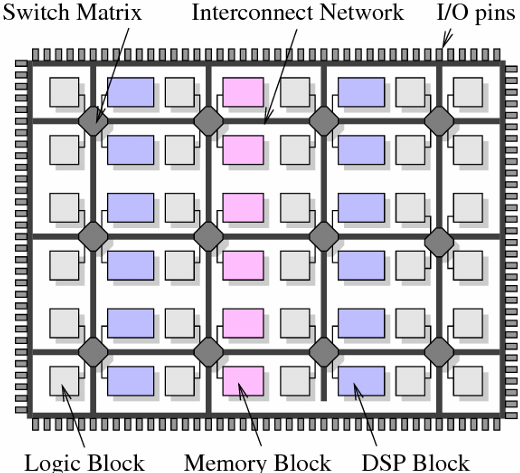

Lecture 2: A Modern Multi-Core Processor (Part I)

Key concepts about how modern parallel processors achieve high throughput(吞吐量)

Two concern(有关) parallel execution (multi-core, SIMD parallel execution)

One addresses(解决) the challenges of memory latency (multi-threading)

Pre multi-core era processor

Majority of chip transistors used to perform operations that help make a single instruction stream run fast

More transistors = larger cache, smarter out-of-order logic, smarter branch predictor, etc

Out-of-order control logic(乱序执行控制逻辑):打破指令在程序中原本的先后顺序,寻找可以并行执行的机会

Multi-core era processor

Use increasing transistor count to add more cores to the processor

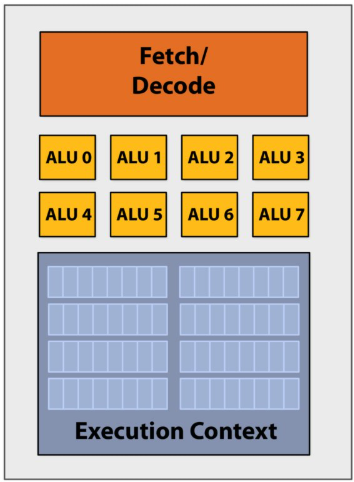

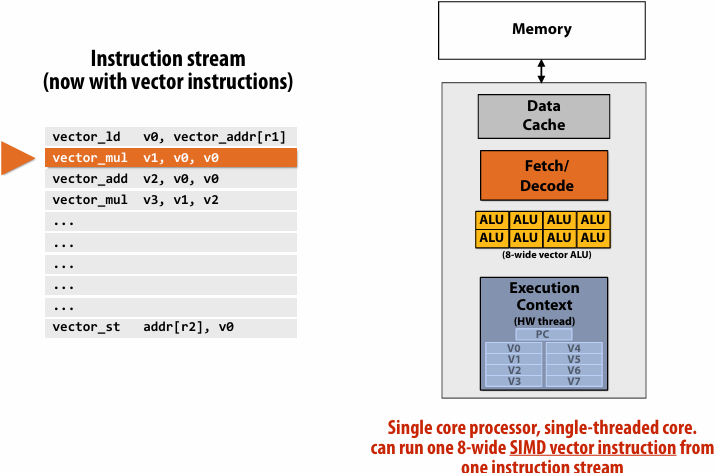

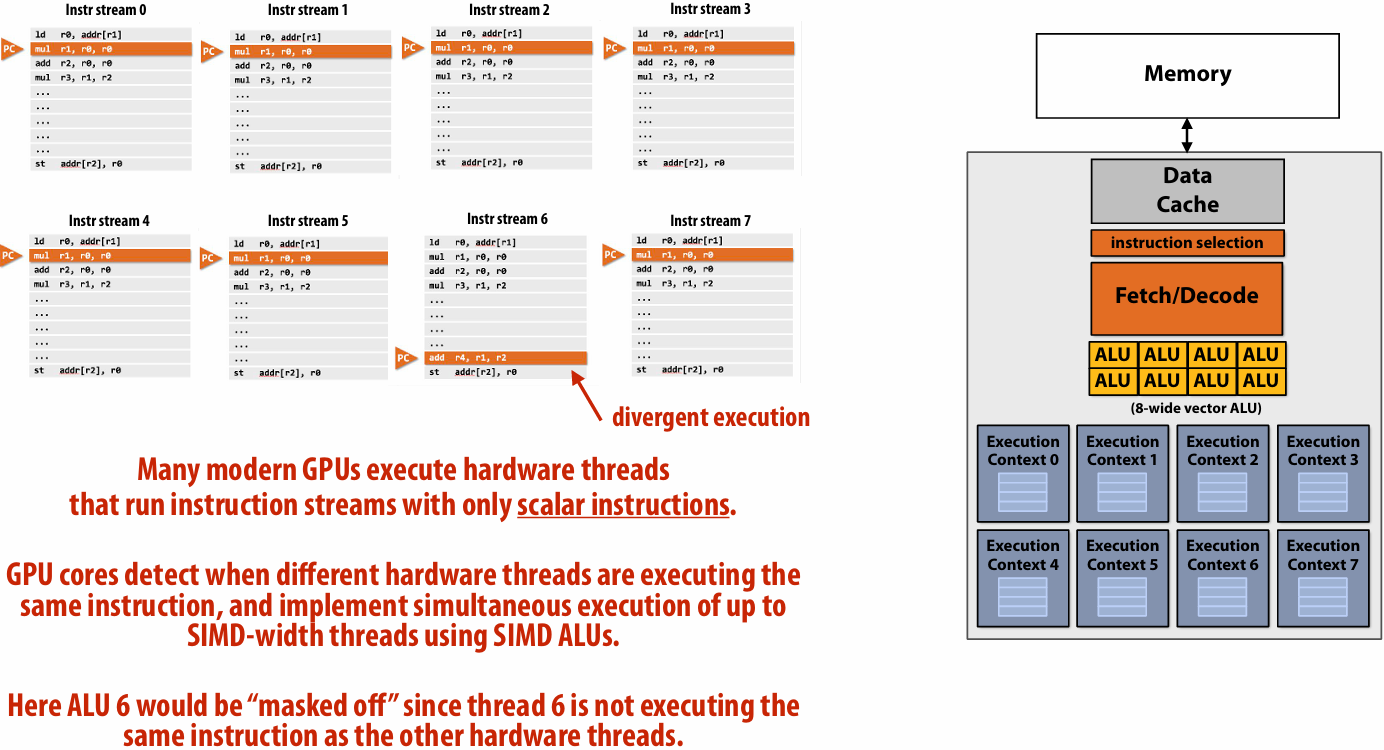

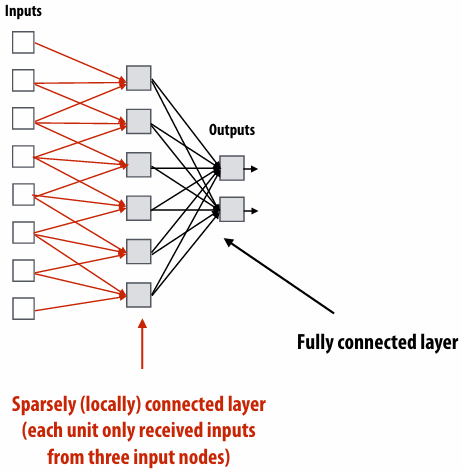

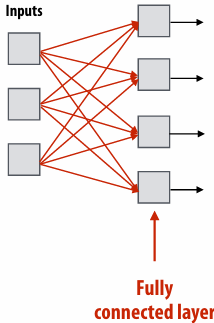

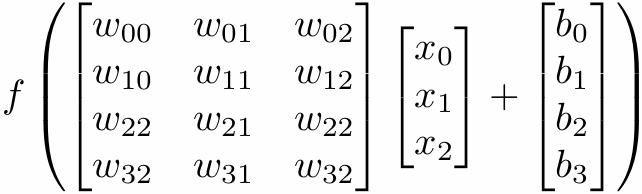

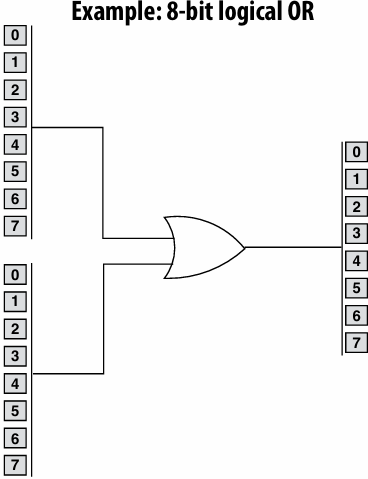

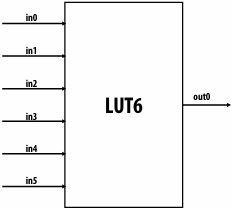

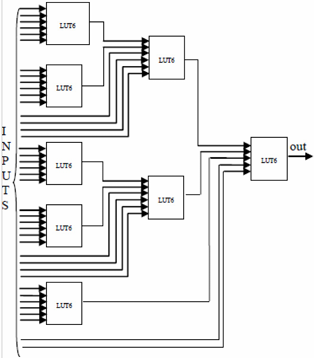

SIMD processing(Single instruction, multiple data)

Add execution units(ALUs) to increase compute capability

Amortize(分摊) cost/complexity of managing an instruction stream across many ALUs

Same instruction broadcast to all ALUs

This operation is executed in parallel on all ALUs

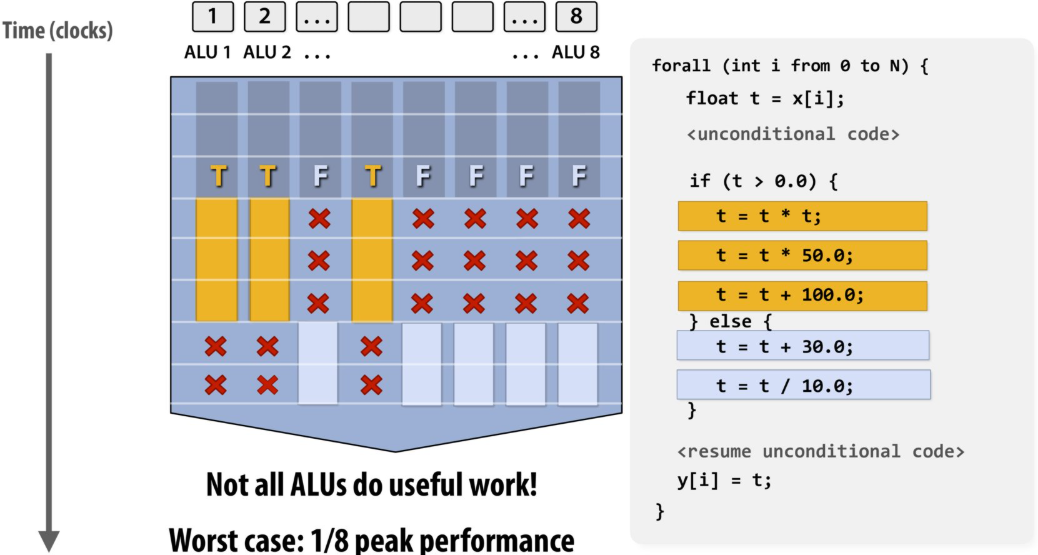

Mask (discard) output of ALU

The program’s use of “forall” declares to the compiler that loop iterations are independent, and that same loop body will be executed on a large number of data elements

This abstraction can facilitate(促进) automatic generation of both multi-core parallel code, and vector instructions to make use of SIMD processing capabilities within a core

当代码运行到 if (t > 0.0) 时,如果 8 个通道里的数据,有些满足条件(T),有些不满足(F),硬件无法让一部分 ALU 跑 if 里的代码,另一部分跑 else 里的

硬件的处理方式是:

第一步: 掩码(Masking)掉所有 F 的通道,只让 T 的通道执行 if 分支里的代码。此时,F 对应的 ALU 是闲置的(如你图中带红色叉号 X 的部分)

第二步: 掩码掉所有 T 的通道,再让 F 的通道执行 else 分支里的代码。此时,T 对应的 ALU 是闲置的

结果: 总耗时 = 执行 if 分支的时间 + 执行 else 分支的时间。原本并行的 8 个核心,在这一刻退化成了类似于串行的工作方式,吞吐量大幅下降

如果所有元素全都在同一个分支里(比如全是正数),处理器就只需要花执行 A 的时间,效率会提高一倍

Some common jargon(术语)

Instruction stream coherence(一致性)(“coherent execution”)

Property(特性) of a program where the same instruction sequence applies to many data elements

Coherent execution IS NECESSARY for SIMD processing resources to be used efficiently

Coherent execution IS NOT NECESSARY for efficient parallelization across different cores, since each core has the capability to fetch/decode a different instructions from their thread’s instruction stream

“Divergent(发散)” execution

A lack of instruction stream coherence in a program

Instruction are generated by the compiler

Parallelism explicitly requested by programmer using intrinsics(内部函数)

Parallelism conveyed using parallel language semantics(语义)(e.g., forall example)

Parallelism inferred by dependency analysis of loops by “auto-vectorizing” compiler

Terminology(术语): “explicit SIMD”: SIMD parallelization is performed at compile time

Can inspect program binary and see SIMD instructions (vstoreps, vmulps, etc.)

“Implicit SIMD”

Compiler generates a binary with scalar instructions

But N instances of the program are always run together on the processor

Hardware(not compiler) is responsible for simultaneously executing the same instruction from multiple program instances on different data on SIMD ALUs

SIMD width of most modern GPUs ranges from 8 to 32

Divergent execution can be a big issue(poorly written code might execute at 1/32 the peak capability of the machine!)

Summary: three different forms of parallel execution

Superscalar: exploit ILP within an instruction stream. Process different instructions from the same instruction stream in parallel(within a core)

SIMD: multiple ALUs controlled by same instruction(within a core)

Efficient for data-parallel workloads: amortize control costs over many ALUs

Vectorization(向量化) done by compiler (explicit SIMD) or at runtime by hardware(implicit SIMD)

Multi-core: use multiple processing cores

Provides thread-level parallelism: simultaneously execute a completely different instruction stream on each core

Software creates threads to expose parallelism to hardware(e.g., via threading API)

Hardware-supported multi-threading

Data prefetching(预取) reduces stalls (hides latency)

Many modern CPUs have logic for guessing what data will be accessed in the future and “pre-fetching” this data into caches

Dynamically analyze program’s memory access patterns to make predictions

Prefetching reduces stalls since data is resident in cache when accessed

Note: Prefetching can also reduce performance if the guess is wrong(consumes bandwidth, pollutes caches)

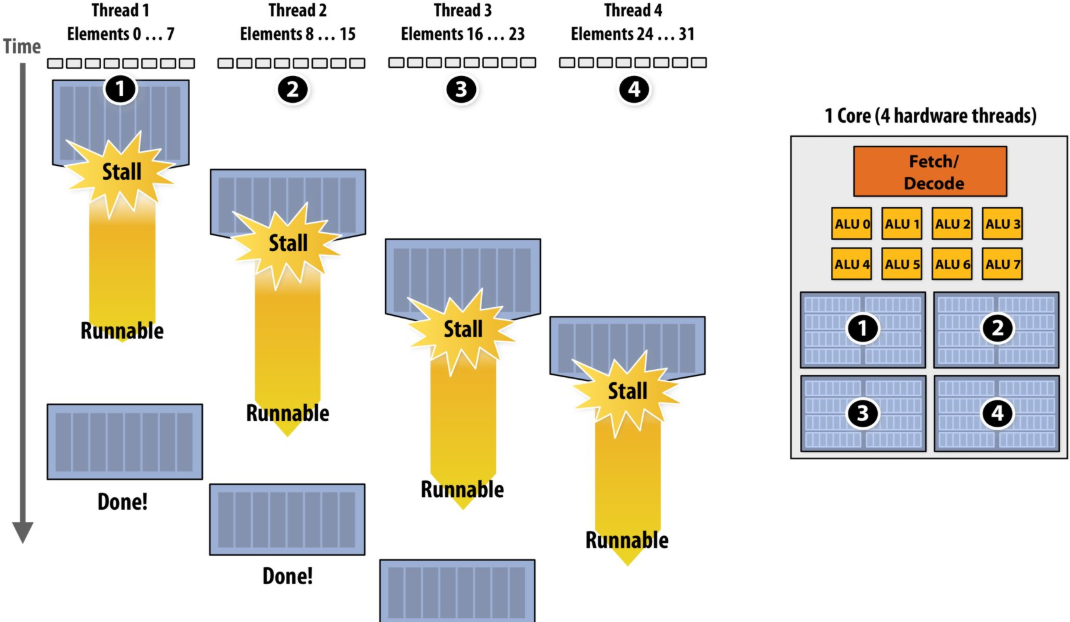

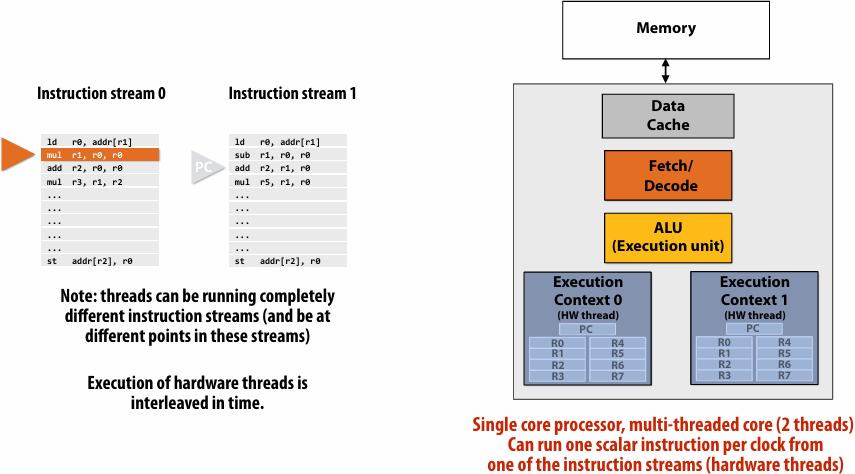

Multi-threading reduces stalls

interleave(交错) processing of multiple threads on the same core to hide stalls

if you can’t make progress on the current thread… work on another one

Hiding stalls with multi-threading

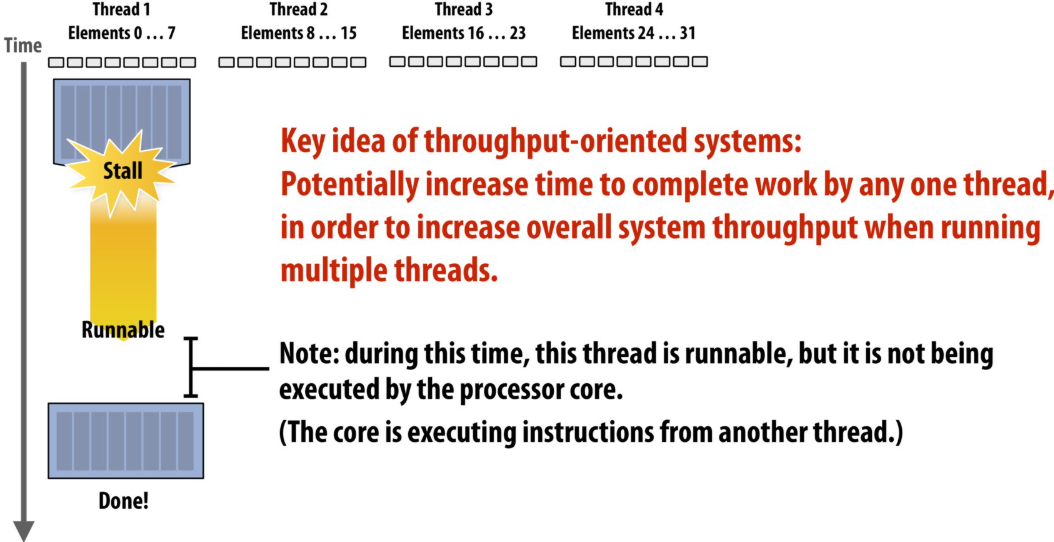

Throughput(吞吐量) computing: a trade-off(权衡)

No free lunch: storing execution contexts

Consider on-chip storage of execution contexts as a finite resource

Many small contexts (high latency hiding ability)

16 hardware threads: storage for small working set per thread

Four large contexts (low latency hiding ability)

4 hardware threads: storage for large working set per thread

Takeaway (point 1):

A processor with multiple hardware threads has the ability to avoid stalls by performing instructions from other threads when one thread must wait for a long latency operation to complete

Note: the latency of the memory operation is not changed by multi threading, it just no longer causes reduced processor utilization

Takeaway (point 2):

A multi-threaded processor hides memory latency by performing arithmetic from other threads

Programs that feature more arithmetic per memory access need fewer threads to hide memory stalls

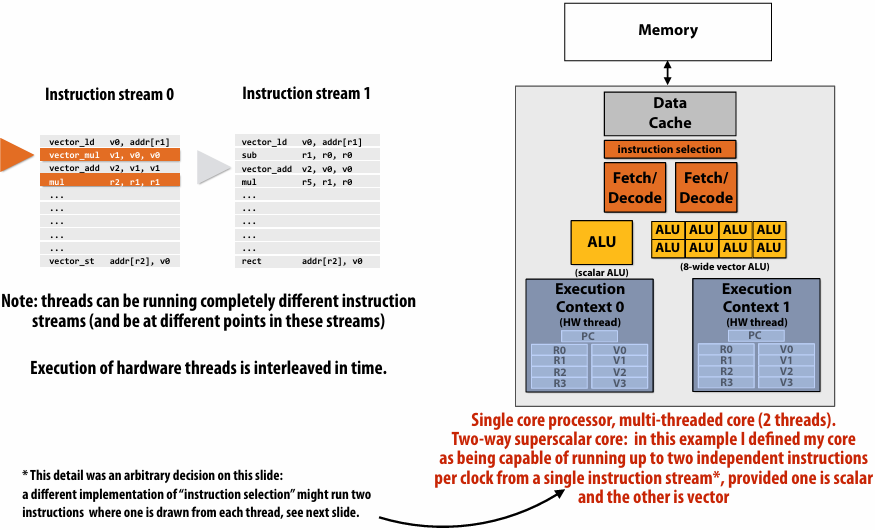

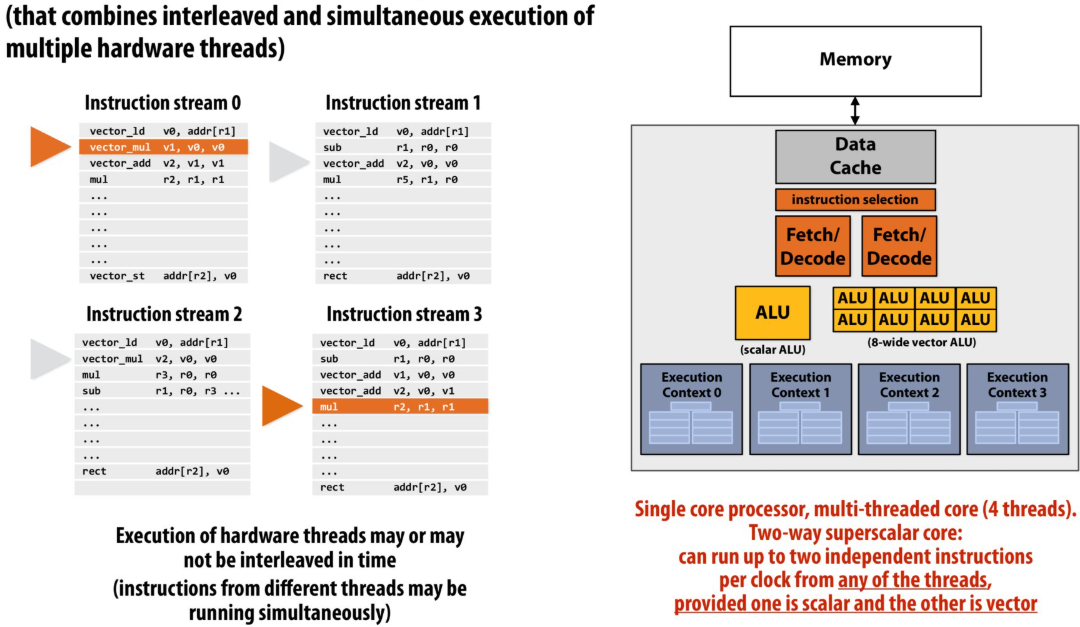

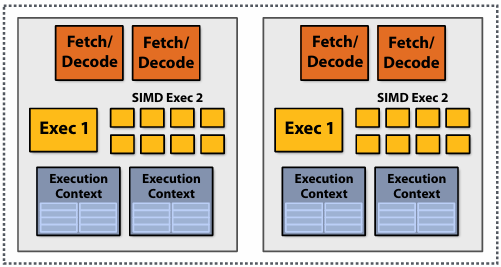

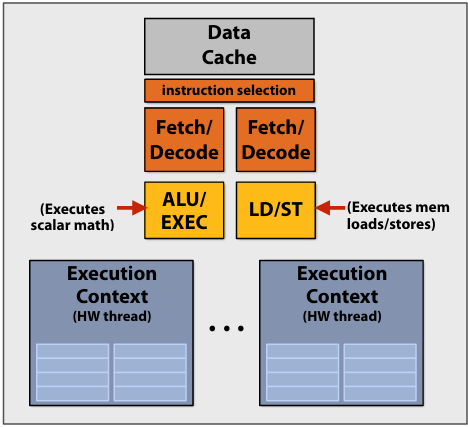

Hardware-supported multi-threading

- Core manages execution contexts for multiple threads

Core still has the same number of ALU resources: multi-threading only helps use them more efficiently in the face of high-latency operations like memory access

Processor makes decision about which thread to run each clock - Interleaved multi-threading (a.k.a.(又称) temporal multi-threading)

each clock, the core chooses a thread, and runs an instruction from the thread on the core’s ALUs - Simultaneous(同步) multi-threading (SMT)

Each clock, core chooses instructions from multiple threads to run on ALUs

Example: Intel Hyper-threading (2 threads per core)

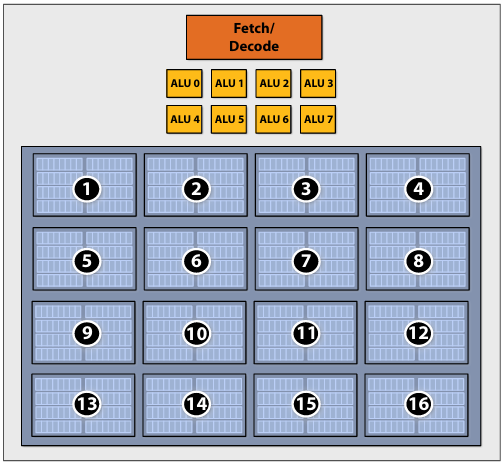

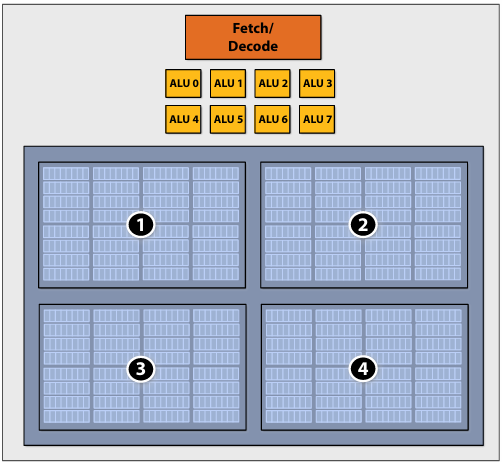

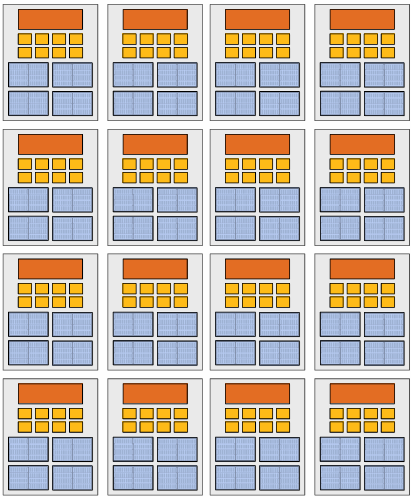

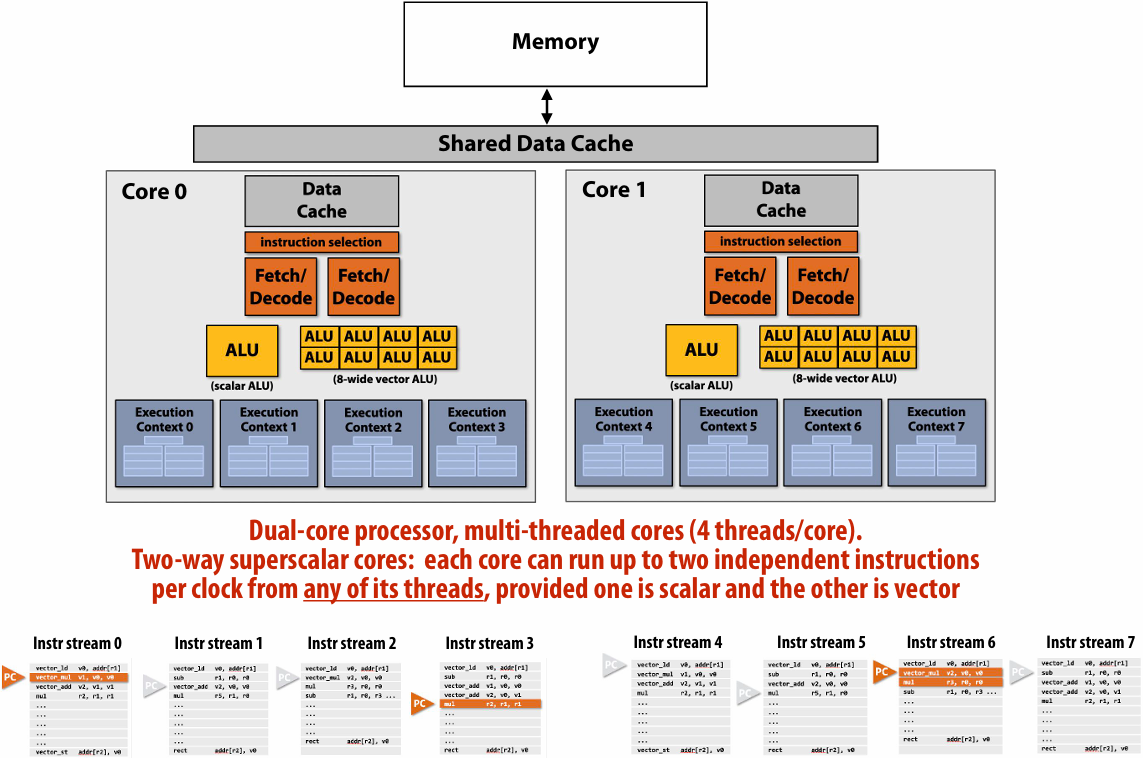

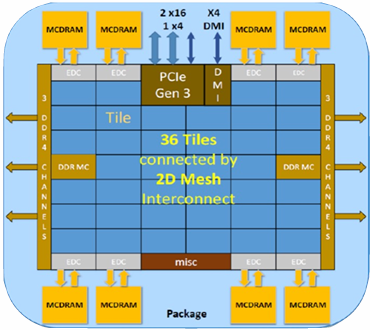

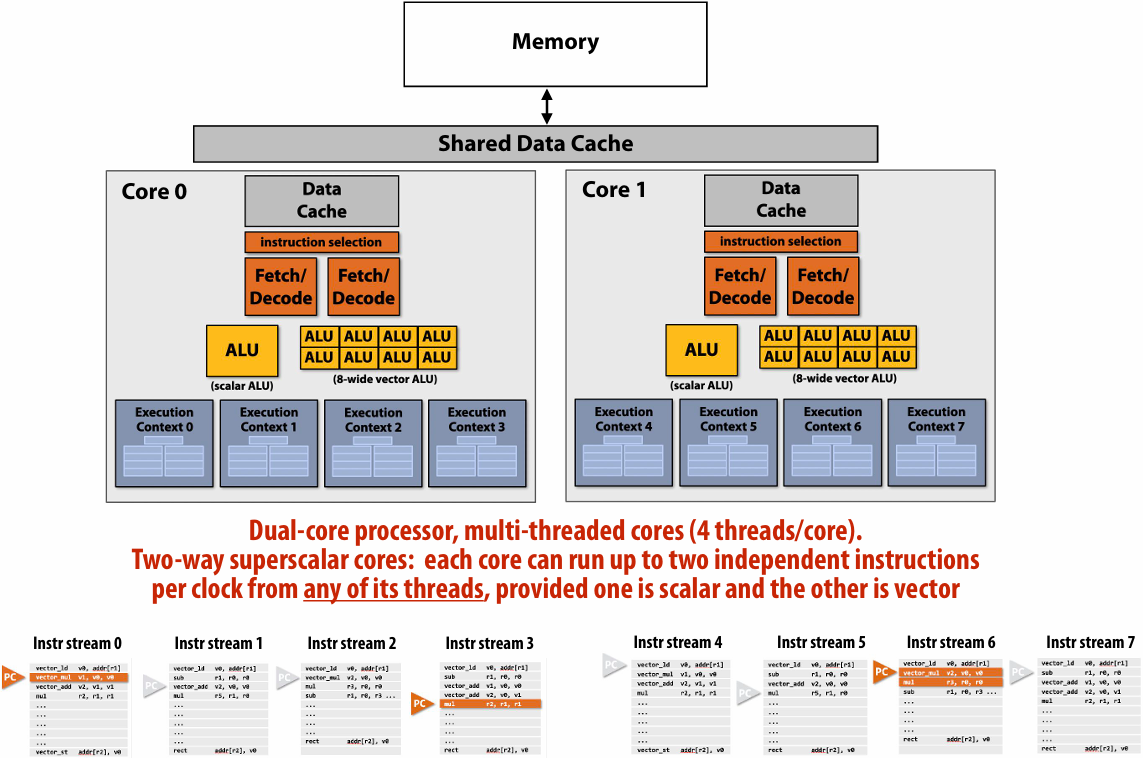

Kayvon’s fictitious(虚构) multi-core chip

16 cores

8 SIMD ALUs per core (128 total)

4 threads per core

16 simultaneous instruction streams

64 total concurrent(同步) instruction streams

512 independent pieces of work are needed to run chip with maximal latency hiding ability

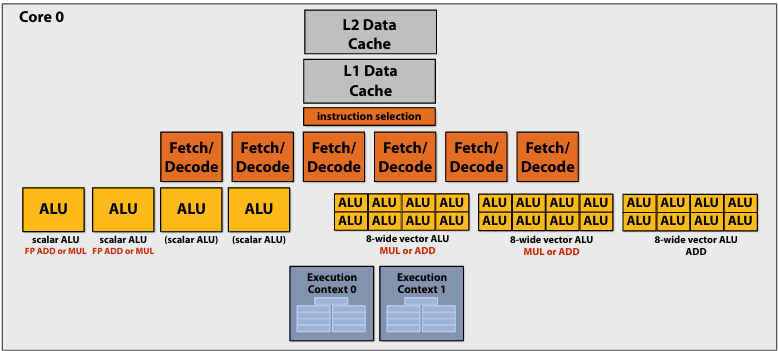

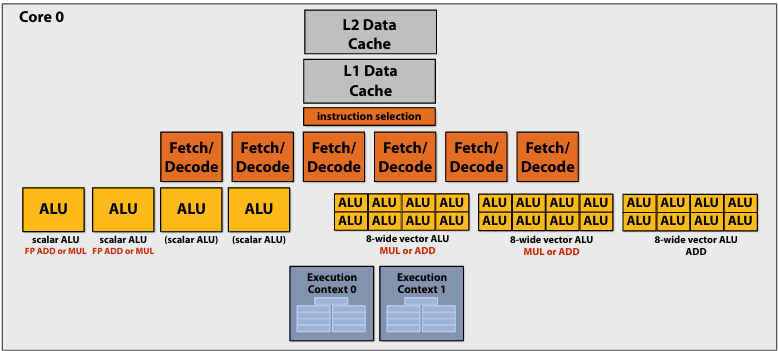

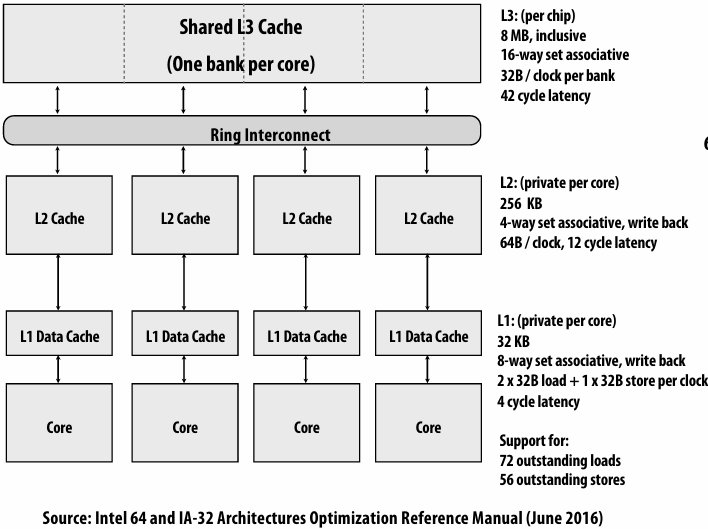

Example: Intel Skylake/Kaby Lake core

Two-way multi-threaded cores (2 threads)

Each core can run up to(最多) four independent scalar instructions

and up to three 8-wide vector instructions (up to 2 vector mul or 3 vector add)

GPUs: extreme throughput-oriented(面向吞吐量) processors

NVIDIA V100

There are 80 SM cores on the V100: That’s 163,840 pieces of data being processed concurrently to get maximal latency hiding!

The story so far…

To utilize modern parallel processors efficiently, an application must:

1.Have sufficient parallel work to utilize all available execution units (across many cores and many execution units per core)

2.Groups of parallel work items must require the same sequences of instructions (to utilize SIMD execution)

3.Expose more parallel work than processor ALUs to enable interleaving of work to hide memory stalls

Summary

Suggestion to students: know these terms

▪ Instruction stream

▪ Multi-core processor

▪ SIMD execution

▪ Coherent control flow(流)

▪ Hardware multi-threading

Interleaved multi-threading

Simultaneous multi-threading

Running code on a simple processor

Superscalar core

SIMD execution capability

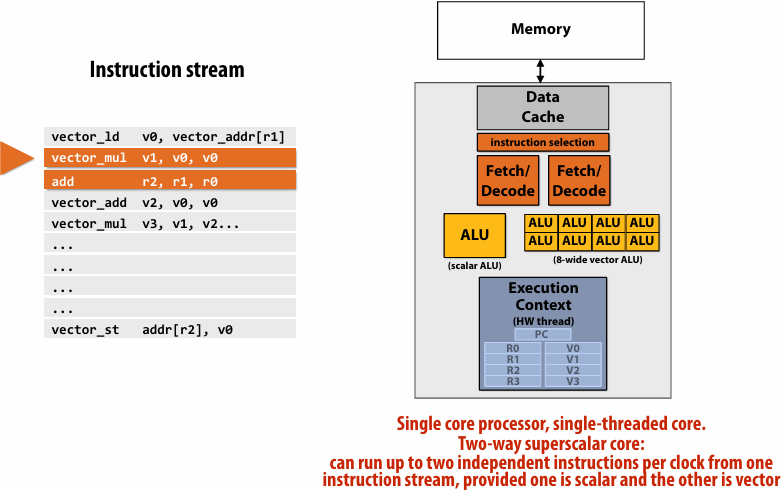

Heterogeneous(异构) superscalar (scalar + SIMD)

Multi-threaded core

Multi-threaded, superscalar core

Multi-core, with multi-threaded, superscalar cores

Example: Intel Skylake/Kaby Lake core

Two-way multi-threaded cores (2 threads)

Each core can run up to(最多) four independent scalar instructions

and up to three 8-wide vector instructions (up to 2 vector mul or 3 vector add)

GPU “SIMT” (single instruction multiple thread)

Thought experiment

You write an application that spawns two threads

The application runs on the processor shown below

Two cores, two-execution contexts per core, up to instructions per clock, one instruction is an 8-wide SIMD instruction

Question: “who” is responsible for mapping the applications’s threads to the processor’s thread execution contexts?

Answer: the operating system

Question: If you were implementing the OS, how would to assign the two threads to the four execution contexts?

Answer: 优先分在不同的核心(Core)上,以避免资源争抢

例外情况: 两个线程之间有极其频繁的数据交换(需要共享 L1 Cache)

Another question: How would you assign threads to execution contexts if your C program spawned five threads?

Answer: 时分复用(Time-sharing)与负载均衡

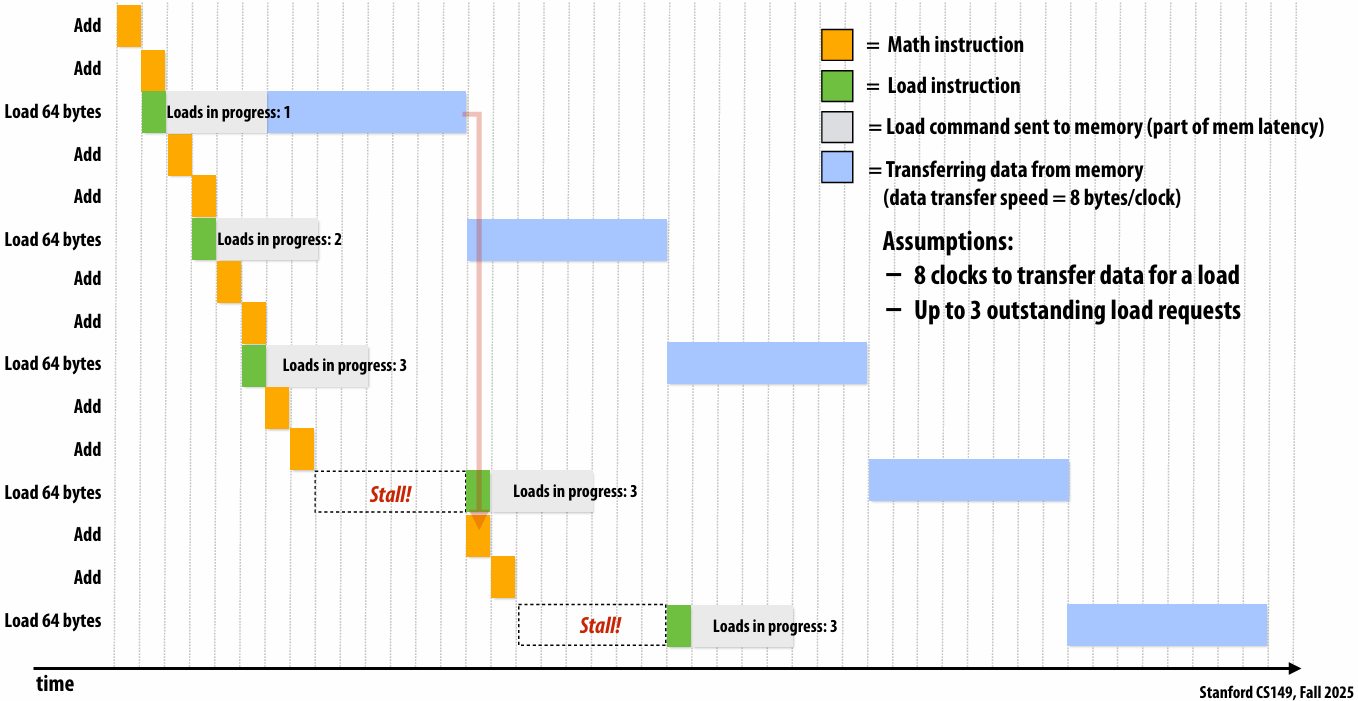

Lecture 3: Modern Multi-Core Architecture (Part II) + ISPC Programming Abstractions

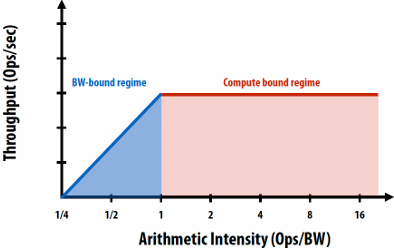

latency and bandwidth

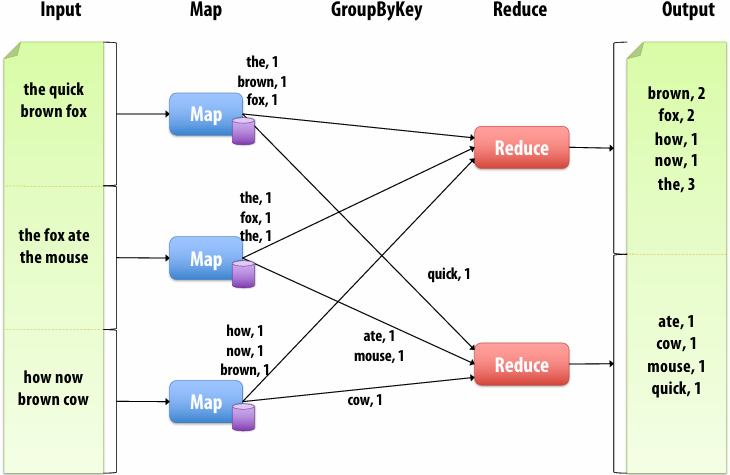

Memory bandwidth

The rate at which the memory system can provide data to a processor

Example: 20 GB/s

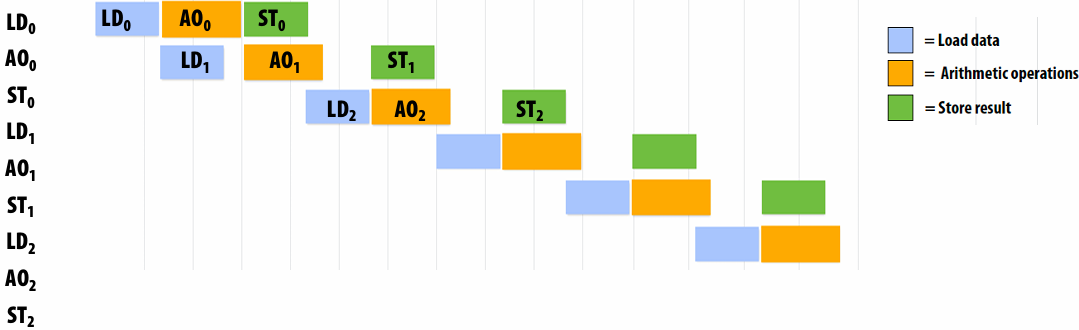

Pipelining

Consider a program that runs threads that repeat the following sequence of three dependent instructions

1.X = load 64 bytes

2.Y = add x + x

3.Z = add x + y

Let’s say we’re running this sequence on many threads of a multi-threaded(Assume there are plenty of hardware threads to hide memory latency) core that:

Executes one math operation per clock

Can issue load instructions in parallel with math

Receives 8 bytes/clock from memory

Processor that can do one add per clock (+ co-issues(同时执行) LDs)

outstanding(未完成的)

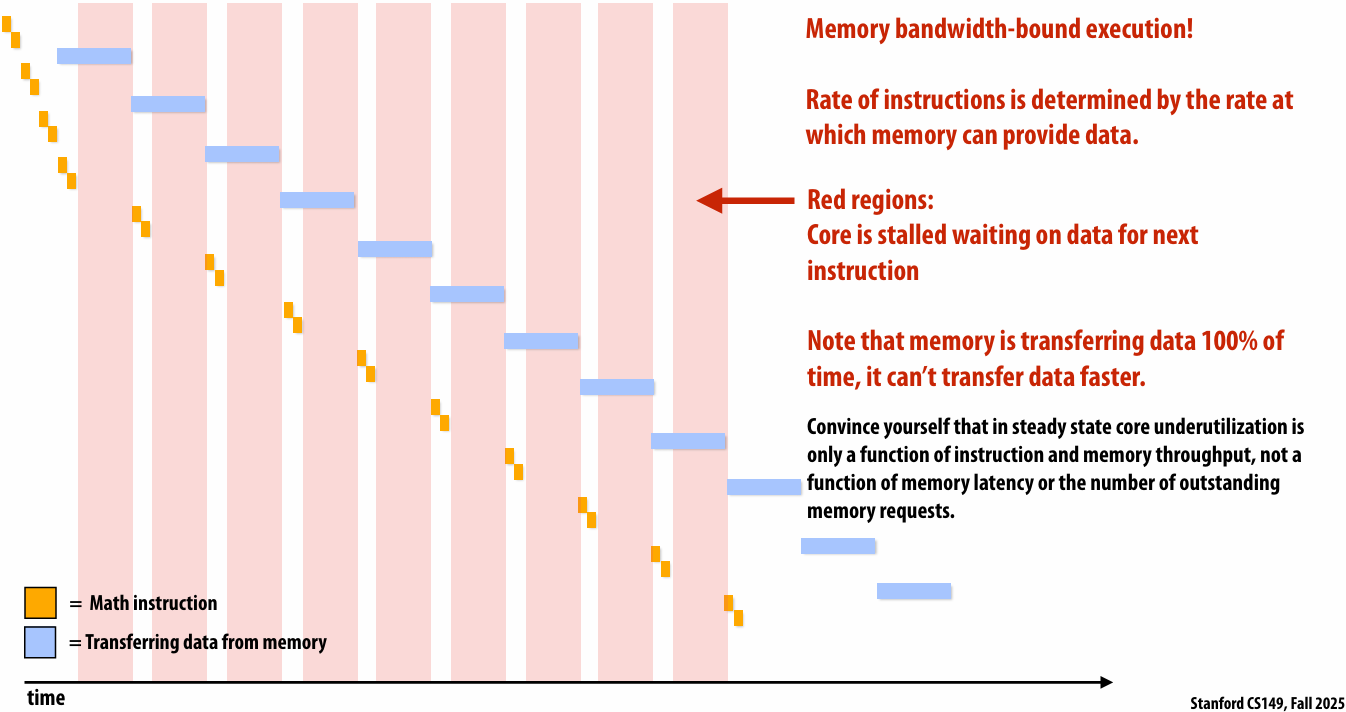

Rate of completing math instructions is limited by memory bandwidth

bandwidth-bound(带宽受限)

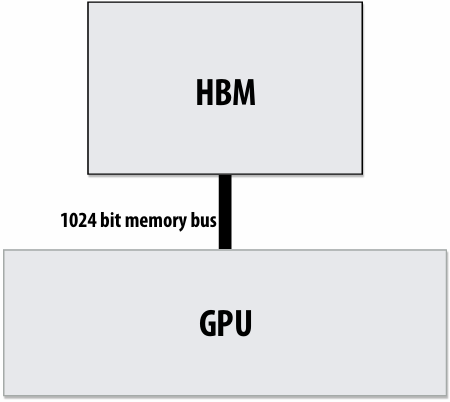

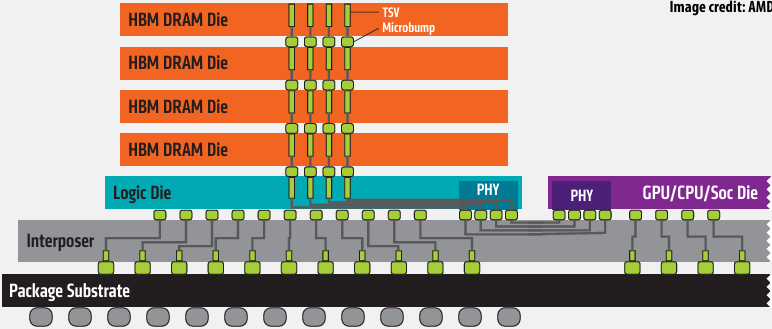

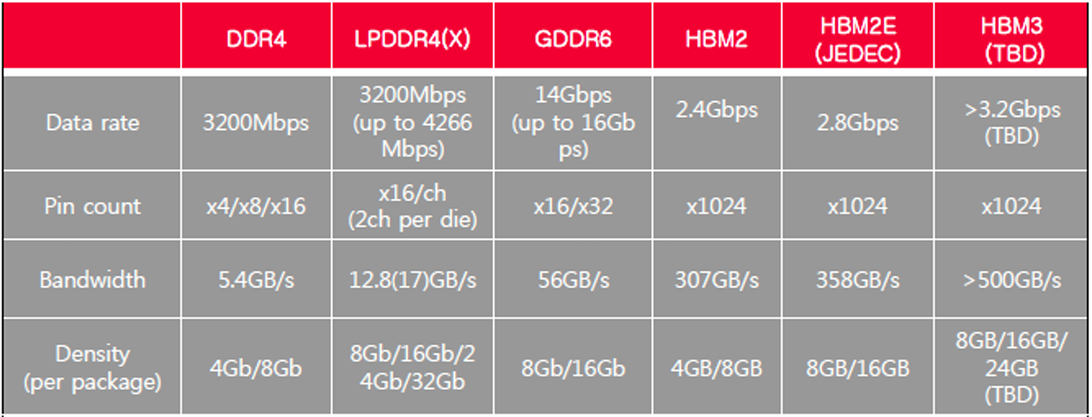

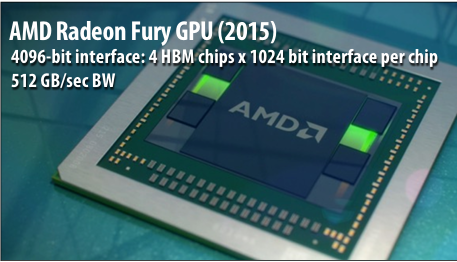

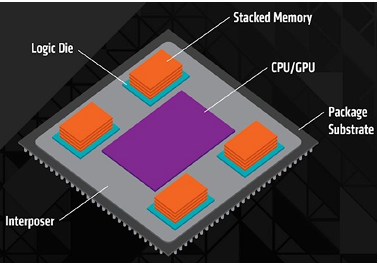

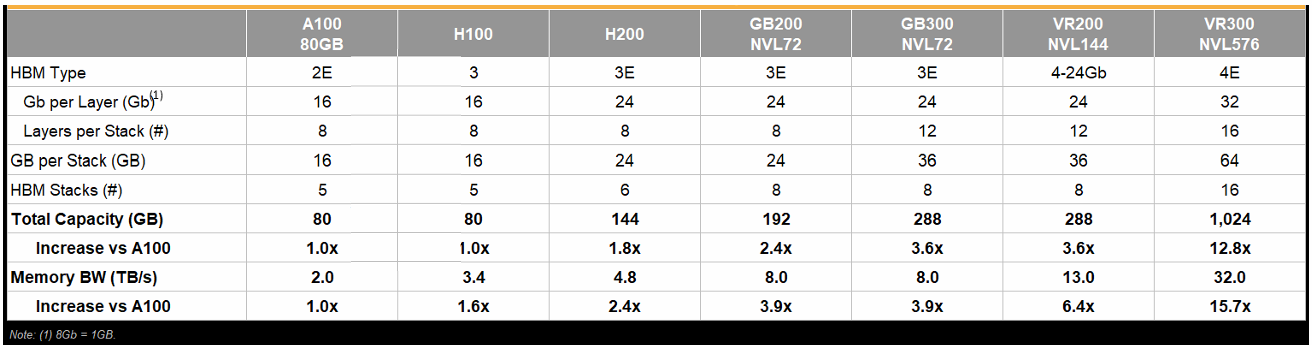

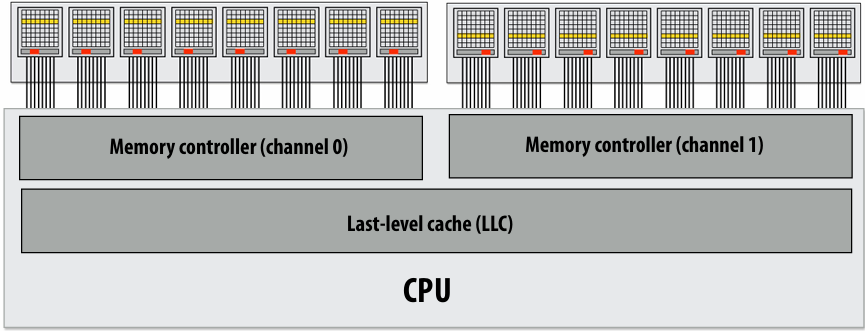

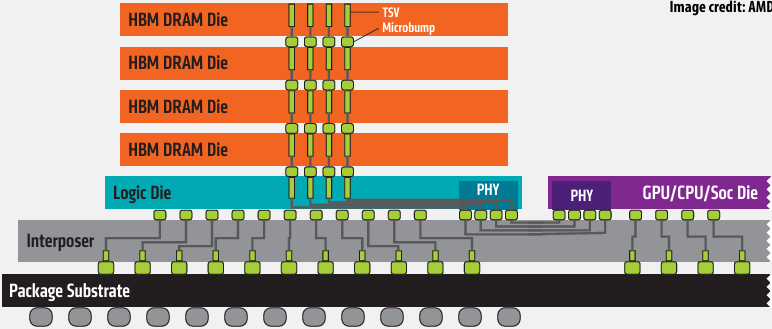

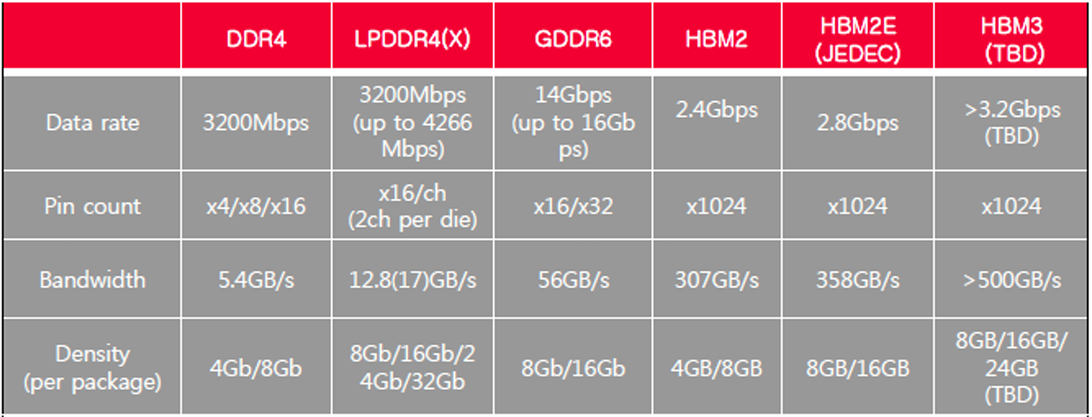

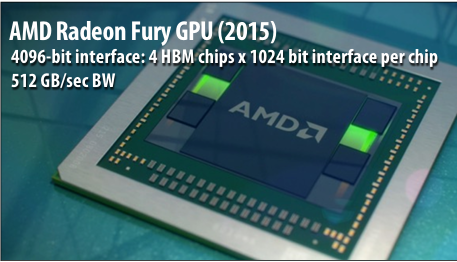

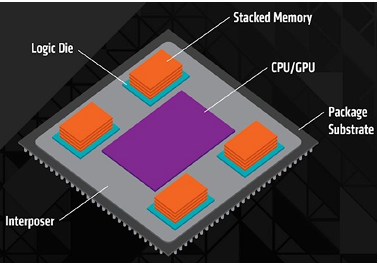

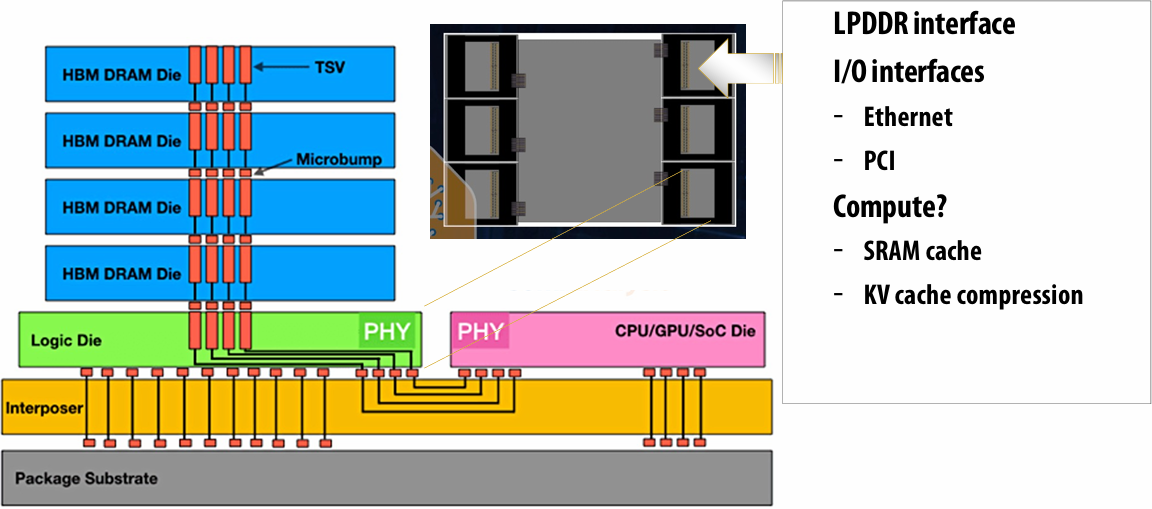

High bandwidth memories

Modern GPUs leverage(利用) high bandwidth memories located near processor

Example:

V100 uses HBM2

900 GB/s

Computation is bandwidth limited!

If processors request data at too high a rate, the memory system cannot keep up

Overcoming bandwidth limits is often the most important challenge facing software developers targeting modern throughput-optimized(优化) systems

In modern computing, bandwidth is the critical resource

Performant parallel programs will:

Organize computation to fetch data from memory less often

Reuse data previously loaded by the same thread (temporal locality optimizations)

Share data across threads (inter-thread(线程间) cooperation)

Favor(优先) performing additional arithmetic(算术运算) to storing/reloading values (the math is “free”)

Main point: programs must access memory infrequently to utilize modern processors efficiently

Abstraction vs. implementation

Semantics(语义): what do the operations provided by a programming model mean?

Given a program, and given the semantics (meaning) of the operations used, what is the answer that the program will compute?

Implementation (aka scheduling(调度)): how will the answer be computed on a parallel machine?

In what (potentially parallel) order will be a program’s operations be executed?

Which operations will be computed by each thread?

Each execution unit? Each lane(通道) of a vector instruction?

Your goal as a student:

Given a program and knowledge of how a parallel programming model is implemented, in your head can you “trace” through what each part of the parallel computer is doing during each step of program

Programming with ISPC

Intel SPMD Program Compiler (ISPC)

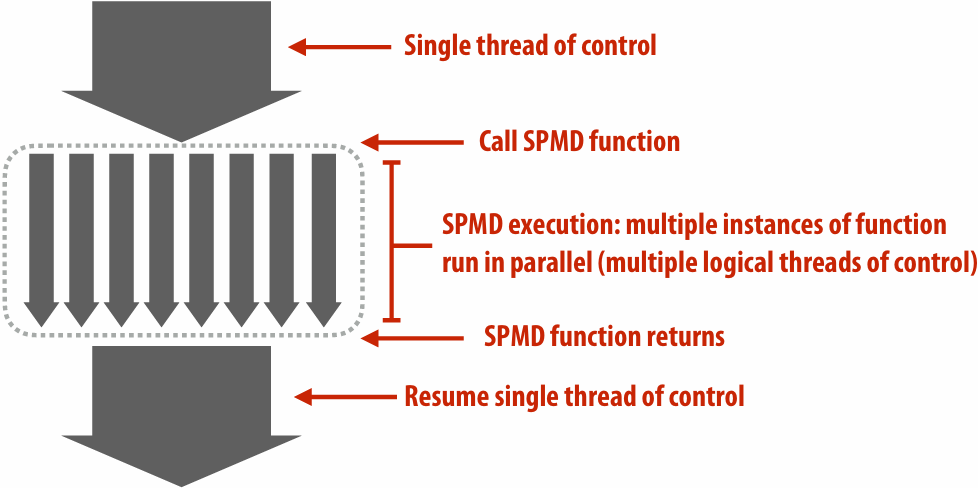

SPMD: single program multiple data

ispc/ispc: Intel® Implicit SPMD Program Compiler

Intel® Implicit SPMD Program Compiler

A great read: “The Story of ISPC” (by Matt Pharr) : The story of ispc: all the links

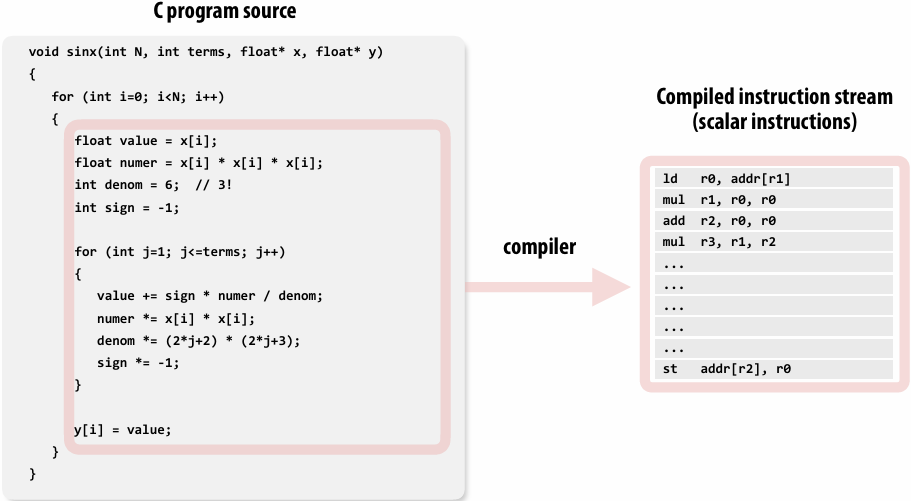

example program

Compute sin(x) using Taylor expansion: sin(x) = x - x3/3! + x5/5! - x7/7! + …

for each element of an array of N floating-point numbers

Invoking sinx()

1 | // C++ code: main.cpp |

1 | // C++ code: sinx.cpp |

sinx() in ISPC

1 | // C++ code: main.cpp |

1 | // ISPC code: sinx.ispc |

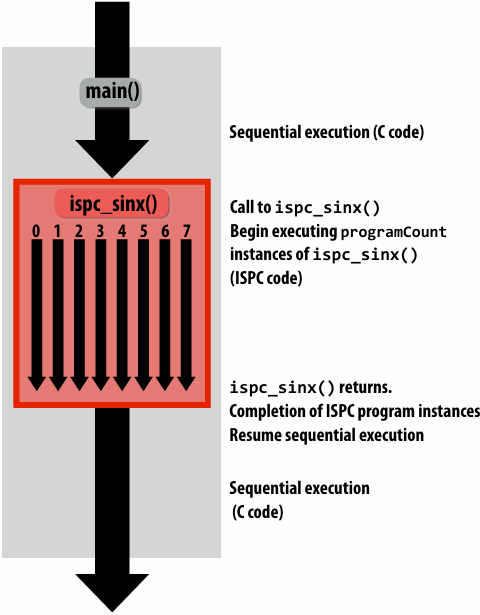

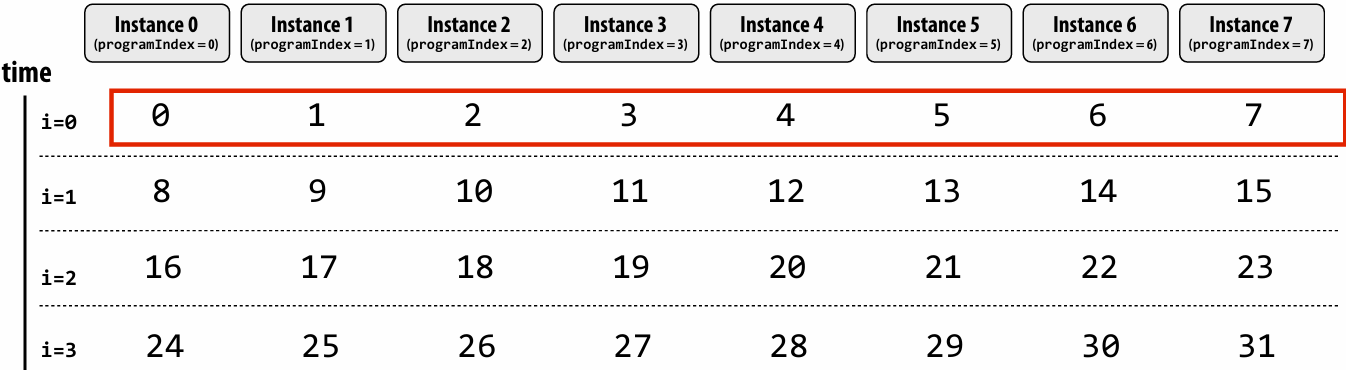

SPMD programming abstraction:

Call to ISPC function spawns “gang(组)” of ISPC “program instances”

All instances run ISPC code concurrently

Each instance has its own copy of local variables( programIndex int float )

Upon return, all instances have completed

ISPC language keywords:

programCount: number of simultaneously executing instances in the gang (uniform value)

programIndex: id of the current instance in the gang. (a non-uniform value: “varying”)

uniform: A type modifier. All instances have the same value for this variable. Its use is purely an optimization. Not needed for correctness

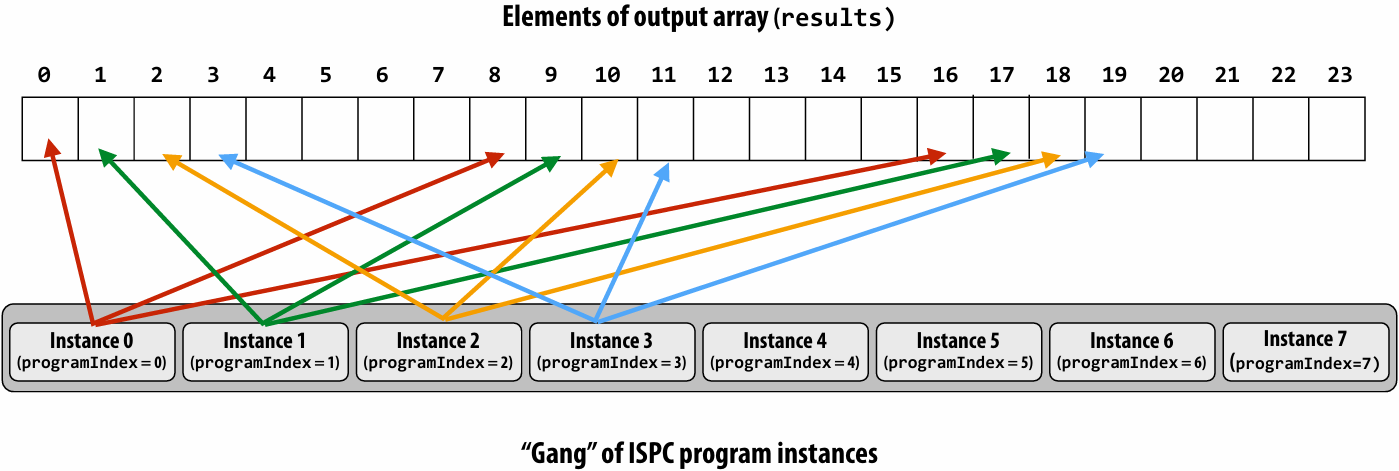

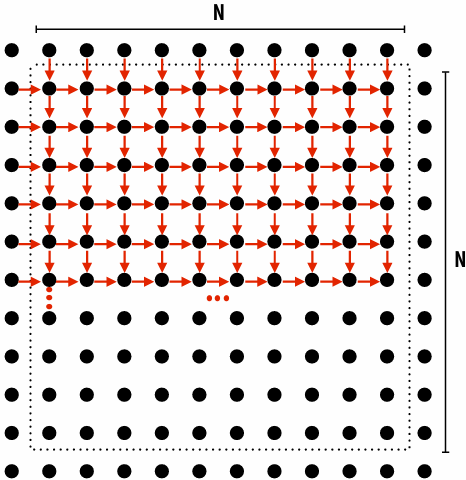

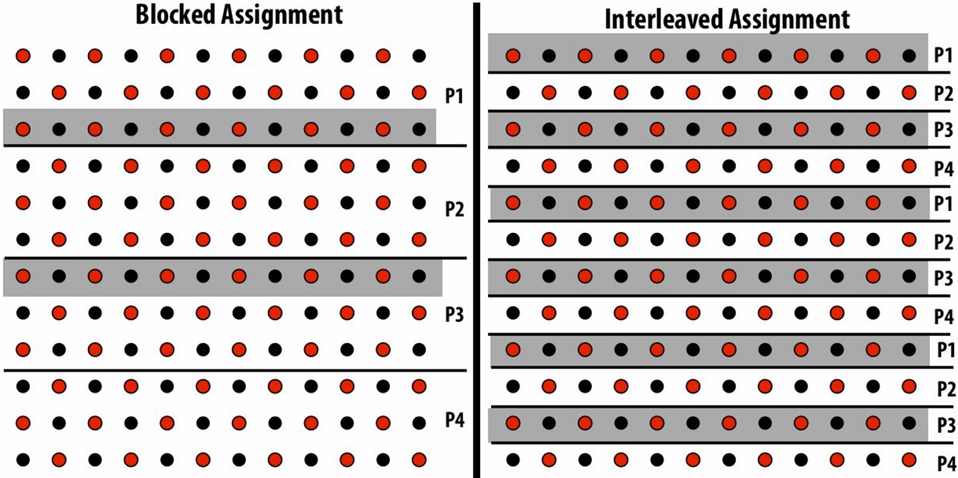

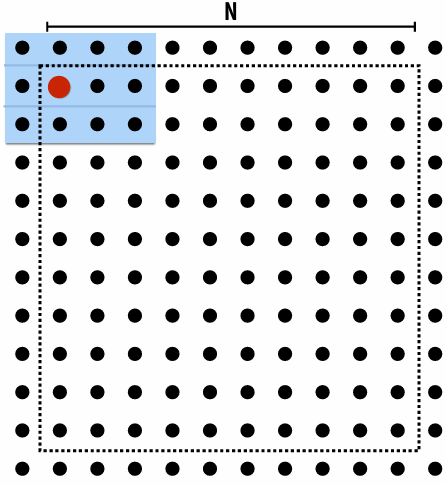

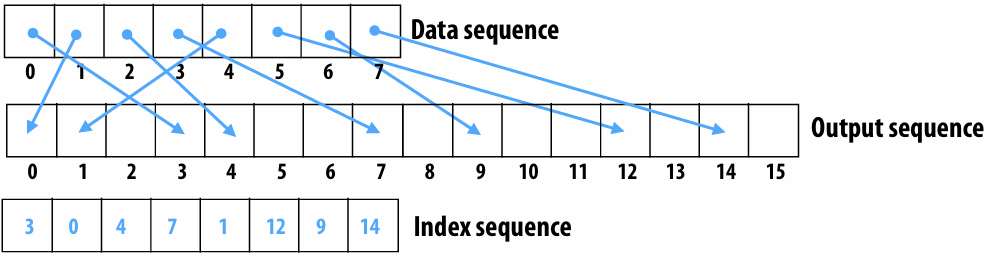

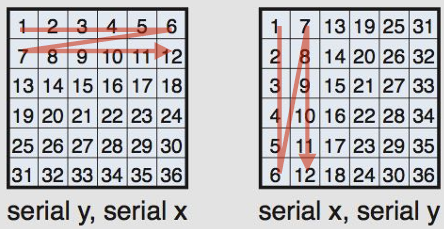

“Interleaved” assignment of array elements to program instances

Invoking sinx() in ISPC

In this illustration programCount = 8

Interleaved assignment of program instances to loop iterations

In this illustration: gang contains eight instances: programCount = 8

ISPC implements the gang abstraction using SIMD instructions

ISPC compiler generates SIMD implementation:

Number of instances in a gang is the SIMD width of the hardware (or a small multiple of SIMD width)

ISPC compiler generates a C++ function binary (.o) whose body contains SIMD instructions

C++ code links against generated object file as usual

sinx() in ISPC: version 2

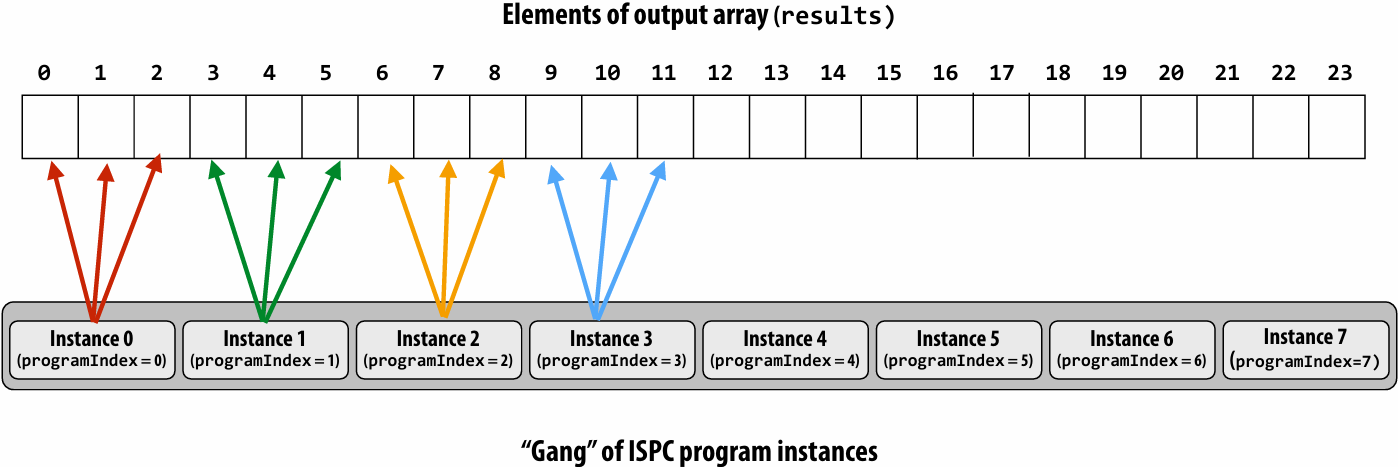

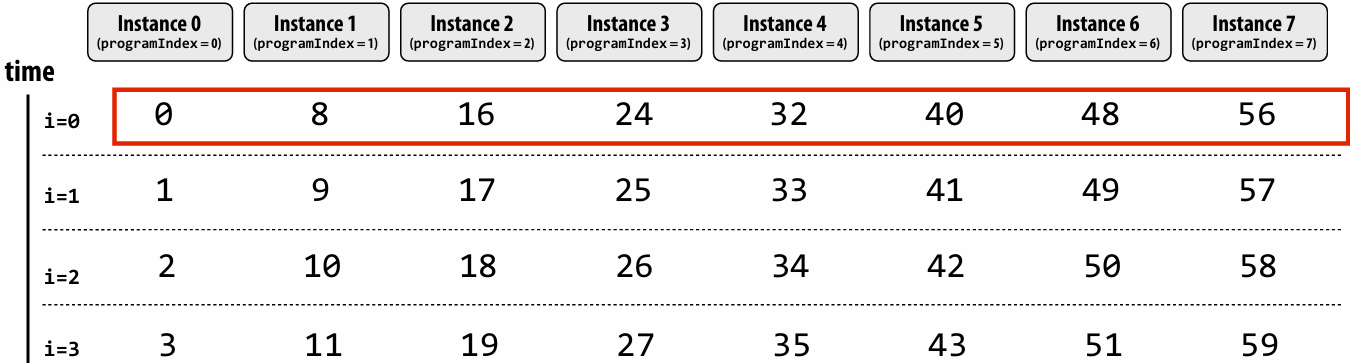

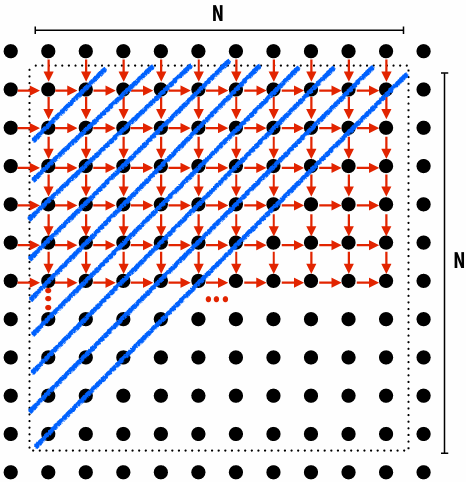

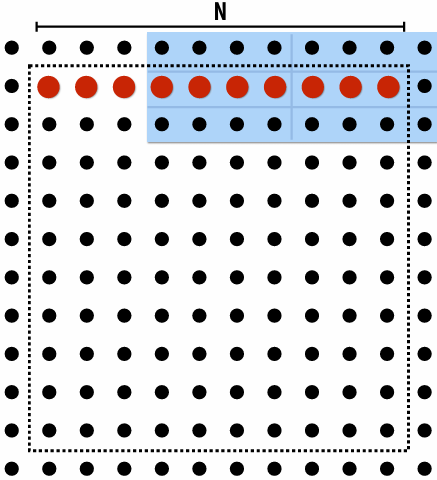

“Blocked” assignment of array elements to program instances

1 | // C++ code: main.cpp |

1 | // ISPC code: sinx.ispc |

Blocked assignment of program instances to loop iterations

In this illustration: gang contains eight instances: programCount = 8

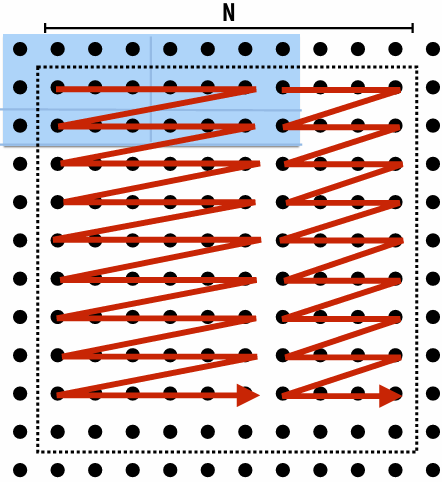

Schedule: interleaved assignment

“Gang” of ISPC program instances

Gang contains four instances: programCount = 8

A single “packed vector load” instruction (vmovaps (see _mm256_load_ps() intrinsic function) ) efficiently implements:

float value = x[idx];

for all program instances, since the eight values are contiguous in memory

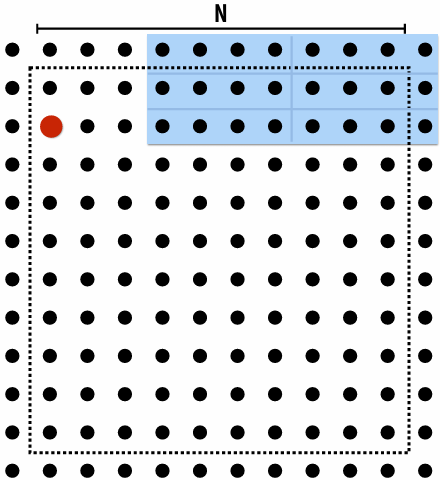

Schedule: blocked assignment

“Gang” of ISPC program instances

Gang contains four instances: programCount = 8

float value = x[idx];

For all program instances now touches eight non-contiguous values in memory

Need “gather” instruction (vgatherdps (see _mm256_i32gather_ps() intrinsic function) ) to implement (gather is a more complex, and more costly SIMD instruction…)

Raising level of abstraction with foreach

1 | // C++ code: main.cpp |

1 | // ISPC code: sinx.ispc |

foreach: key ISPC language construct

foreach declares parallel loop iterations

Programmer says: these are the iterations the entire gang (not each instance) must perform

ISPC implementation takes responsibility for assigning iterations to program instances in the gang

How might foreach be implemented?

Code written using foreach abstraction:

1 | foreach (i = 0 ... N) |

Implementation 1: program instance 0 executes all iterations

1 | if (programCount == 0) { |

Implementation 2: interleave iterations onto program instances

1 | // assume N % programCount = 0 |

Implementation 3: block iterations onto program instances

1 | // assume N % programCount = 0 |

Implementation 4: dynamic assignment of iterations to instances

1 | uniform int nextIter; |

Thinking about iterations, not parallel execution

In many simple cases, using foreach allows the programmer to express their program almost as if it was a sequential program

1 | export void ispc_function( |

What does this program do?

1 | // main C++ code: |

1 | // ISPC code: |

This ISPC program computes the absolute value of elements of x, then repeats it twice in the output array y

1 | // main C++ code: |

1 | // ISPC code: |

The output of this program is undefined!

Possible for multiple iterations of the loop body to write to same memory location

Computing the sum of all elements in an array (incorrectly)

What’s the error in this program?

1 | export uniform float sum_incorrect_1( |

sum is of type float (different variable for all program instances)

Cannot return many copies of a varianble to the calling C code, which expects one return value of type float

Result: compile-time type error

What’s the error in this program?

1 | export uniform float sum_incorrect_2( |

sum is of type uniform float (one copy of variable for all program instances)

x[i] has a different value for each program instance

So what gets copied into sum? (一份sum,多份x[i],无法让多个并行的实例同时修改同一个变量)

Result: compile-time type error

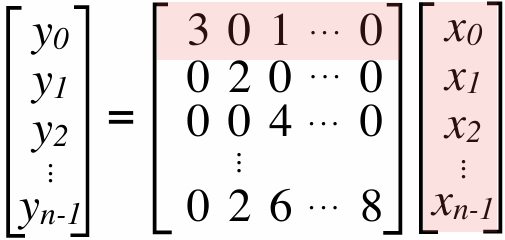

Computing the sum of all elements in an array (correctly)

1 | export uniform float sum_array( |

Each instance accumulates a private partial sum (no communication)

Partial sums are added together using the reduce_add() cross-instance communication primitive

The result is the same total sum for all program instances (reduce_add() returns a uniform float)

The ISPC code will execute in a manner similar to the C code with AVX intrinsics implemented below

1 | float sum_summary_AVX(int N, float* x) { |

ISPC’s cross program instance operations

Compute sum of a variable’s value in all program instances in a gang:

1 | uniform int64 reduce_add(int32 x); |

Compute the min of all values in a gang:

1 | uniform int32 reduce_min(int32 a); |

Broadcast a value from one instance to all instances in a gang:

1 | int32 broadcast(int32 value, uniform int index); |

For all i, pass value from instance i to the instance i+offset % programCount:

1 | int32 rotate(int32 value, uniform int offset); |

ISPC: abstraction vs. implementation

Single program, multiple data (SPMD) programming model

Programmer “thinks”: running a gang is spawning programCount logical instruction streams (each with a different value of programIndex)

This is the programming abstraction

Program is written in terms of this abstractionSingle instruction, multiple data (SIMD) implementation

ISPC compiler emits vector instructions (e.g., AVX2, ARM NEON) that carry out the logic performed by a ISPC gang

ISPC compiler handles mapping of conditional control flow to vector instructions (by masking vector lanes, etc. like you do manually in assignment 1)Semantics of ISPC can be tricky(棘手的)

SPMD abstraction + uniform values (allows implementation details to peek through(透过) abstraction a bit)

SPMD programming model summary

SPMD = “single program, multiple data”

Define one function, run multiple instances of that function in parallel on different input arguments

ISPC tasks

- The ISPC gang abstraction is implemented by SIMD instructions that execute within on thread running on one x86 core of a CPU

- So all the code I’ve shown you in the previous slides would have executed on only one of the four cores of the myth machines(斯坦福大学计算机科学系(Stanford CS)提供的专供学生使用的公共 Linux 计算集群)

- ISPC contains another abstraction: a “task” that is used to achieve multi-core execution. I’ll let you read up about that as you do assignment 1

An ISPC task is just a task. A thread would be an implementation detail. But it’s very true that if you create eight tasks, a smart thing for ISPC to do would be under the hood, spawn eight threads and run them all on different threads. But if you create 100,000 tasks, it probably would be pretty dumb for ISPC to create 100,000 threads.

How does a computer that needs to run apparently a 700 kernel threads right now, how does it run 700 kernel threads on computer?

It’s got a context switch

The operating system from time t o time is saying, here are those eight threads that need to run. I’m going to put them on the processor and let the processor run. And periodically, some timer expires, and the operating system says, well, we have 700 threads. We need to have another 8 run. So we’re going to rip those threads off the processor and put those on. And you can imagine that could be pretty slow

operating system context switch vs. hardware execution context switch

| 特性 | 硬件多线程 (Hardware Multi-threading) | 操作系统上下文切换 (OS Context Switch) |

|---|---|---|

| 谁来做 | 硬件(电路) 自动完成 | 软件(内核代码) 执行指令完成 |

| 寄存器 | 复制多份(每个线程有独立的物理寄存器组) | 只有一套(切换时必须把内容存到 RAM) |

| 切换耗时 | 极快(通常 0 - 数个周期) | 极慢(数千到数十万个周期) |

| 切换触发点 | 硬件检测到 Stall(如 Cache Miss) | 时间片到期、I/O 阻塞、系统调用 |

| 主要目标 | 延迟隐藏 (Latency Hiding),榨干执行单元 | 任务管理、公平性、多任务并发 |

ISPC is a low-level programming language: by exposing(公开) programIndex and programCount, it allows programmer to define what work each program instance does and what data each instance accesses

Can implement programs with undefined output

Can implement programs that are correct only for a specific programCount

Everything outside a foreach must be uniform values and uniform logic(确保程序的控制流在进入并行区域前是明确且同步的)

串行上下文与并行上下文的切换:在 ISPC 中,代码的运行逻辑可以看作是“单线程入口,局部并行”

Outside foreach(外部):代码处于串行控制流中。此时,编译器认为它是在为“整组”程序实例(整个 SIMD 单元)做决策。既然是为整组做决策,所有的变量值和逻辑判断就必须对所有实例都一致(即 uniform)

Inside foreach(内部):这是并行开始的地方。foreach 负责把数据分配给不同的程序实例(Program Instances)。只有在这里,变量才可以是 varying 的(每个实例拥有不同的值)

硬件效率与寄存器利用

uniform 变量:通常存储在 CPU 的标量寄存器(Scalar Registers)中。它们只占一份空间,计算速度极快

varying 变量:存储在 SIMD 向量寄存器中

如果外部变量都是 uniform 的,编译器可以进行大量的优化,避免不必要的向量运算,减少内存带宽压力。只有在真正处理大量数组元素(foreach 内部)时,才动用沉重的向量资源

Summary

Programming models provide a way to think about the organization of parallel programs

They provide abstractions that permit multiple valid implementations

I want you to always be thinking about abstraction vs. implementation for the remainder(剩余部分) of this course

Assignment 1: Analyzing Parallel Program Performance on a Quad-Core CPU

搭建本地Linux开发环境

安装WSL2(Windows Subsystem for Linux)

1.以管理员权限打开PowerShell,运行: wsl –install

2.在Microsoft Store下载 Ubuntu 22.04 LTS

3.优点:你可以在Ubuntu里写C++/CUDA 代码,但依然能用Windows的浏览器看视频、查文档

Windows 安装 WSL2 并运行 Ubuntu 22.04 指南本文为 Windows 10 和 Windows - 掘金

安装 WSL | Microsoft Learn

如果显示正在安装: Ubuntu 这时候卡住在0%(微软商店抽风),那么我们执行如下指令,从GitHub下载

1 | wsl --install -d Ubuntu --web-download |

配置 CUDA Toolkit

1.在 Windows主机安装最新的NVIDIA 驱动

2.在 WSL2(Ubuntu)里安装 CUDA Toolkit(注意要选Linux-WSL 版本)

3.安装完成后,输入nvcc -V, 看到版本号即表示成功

WLS2安装CUDA保姆级教程_wsl2 cuda-CSDN博客

IDE选择

使用Vs Code,并安装”Remote - WSL”扩展

这样你可以在 Windows界面操作,但所有的编译和运行都在WSL2的Linux环境下进行

开始将 VS Code 与 WSL 配合使用 | Microsoft Learn

Using C++ and WSL in VS Code

具体内容和实现

[WorldClass/Stanford CS149 Parallel Computing/asst1 at master · tiny-star3/WorldClass](https://github.com/tiny-star3/WorldClass/tree/master/Stanford CS149 Parallel Computing/asst1)

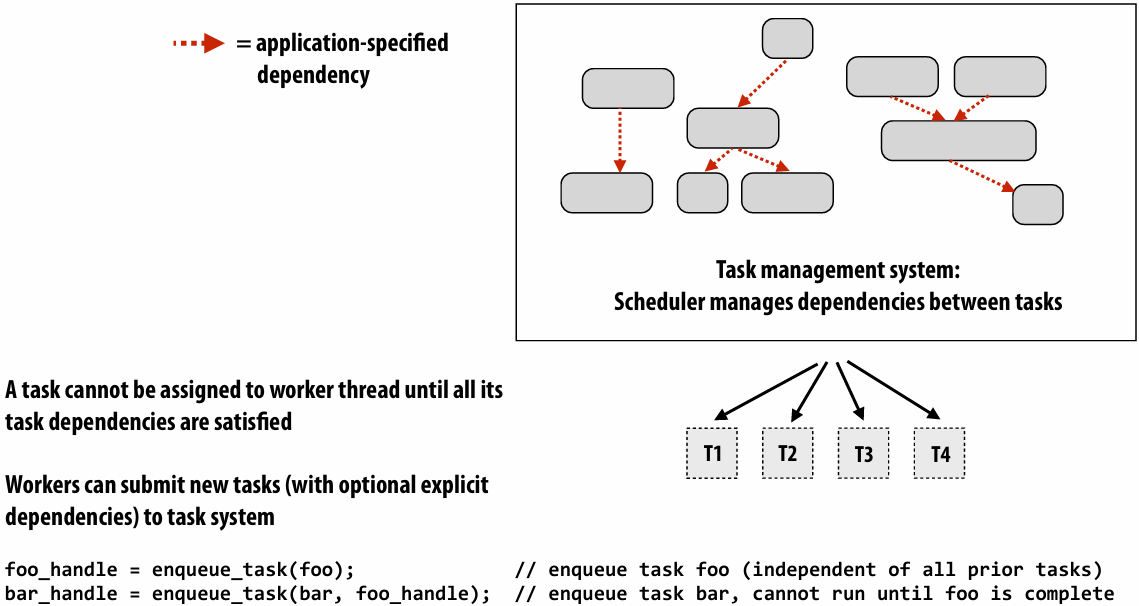

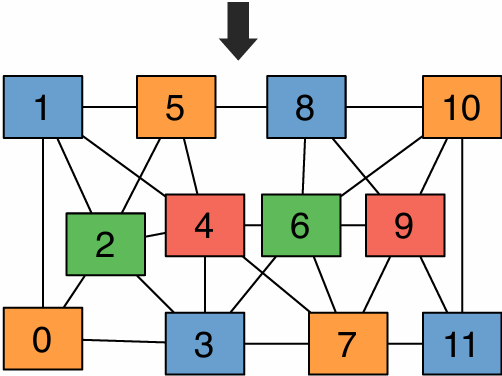

Lecture 4: Parallelizing Code: The Programming Thought Process

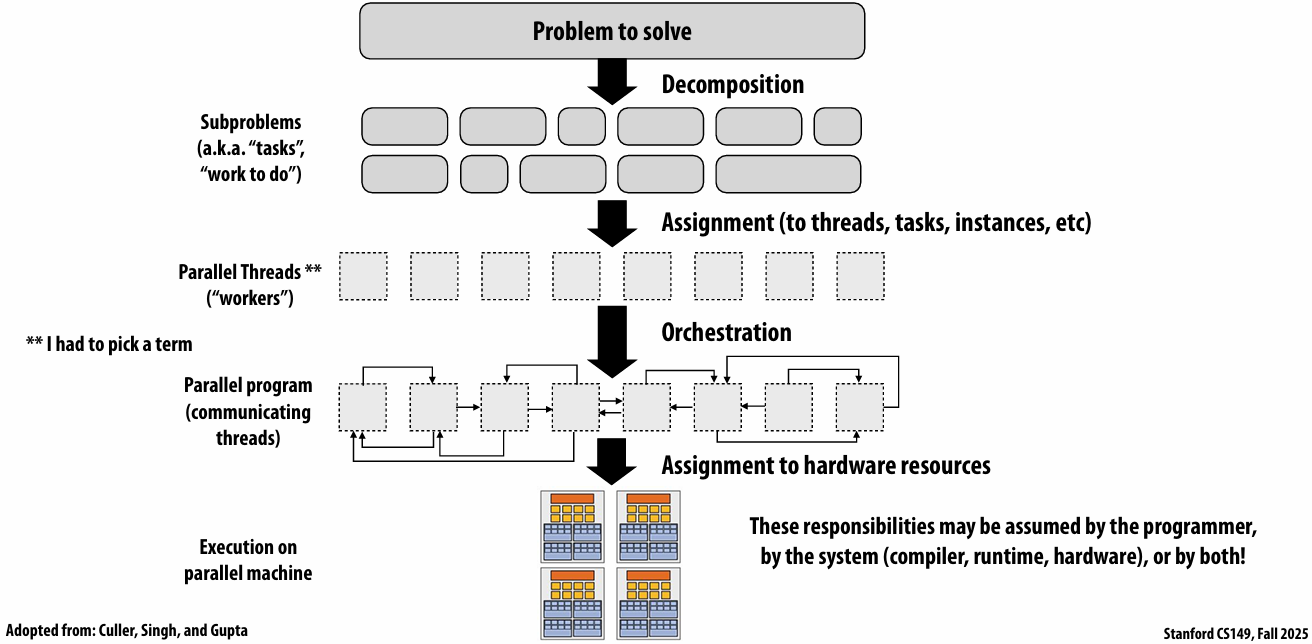

Creating a parallel program

Your thought process:

Identify work that can be performed in parallel

Partition work (and also data associated with the work)

Manage data access, communication, and synchronization

A common goal is maximizing speedup

For a fixed computation: Speedup(P processors) = Time(1 processor) / Time (P processors)

Other goals include achieving high efficiency (cost, area, power, etc.) or working on bigger problems than can fit on one machine

Orchestration 编排

Problem decomposition

Break up problem into tasks that can be carried out in parallel

In general: create at least enough tasks to keep all execution units on a machine busy

Key challenge of decomposition: identifying dependencies (or… a lack of dependencies)

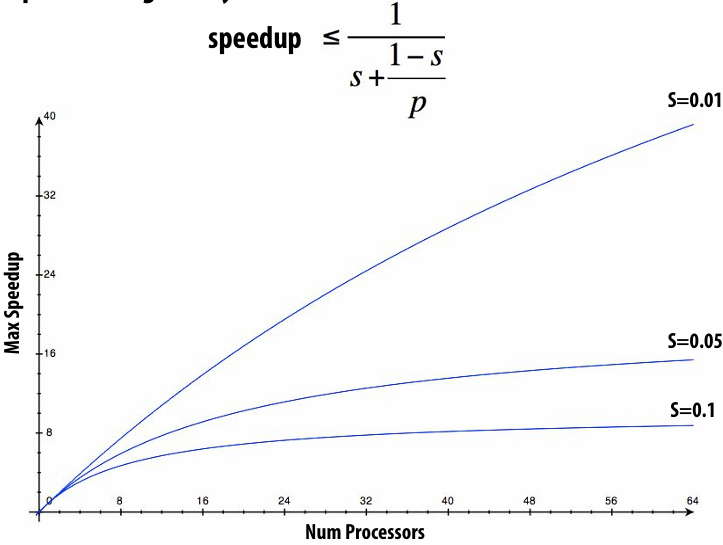

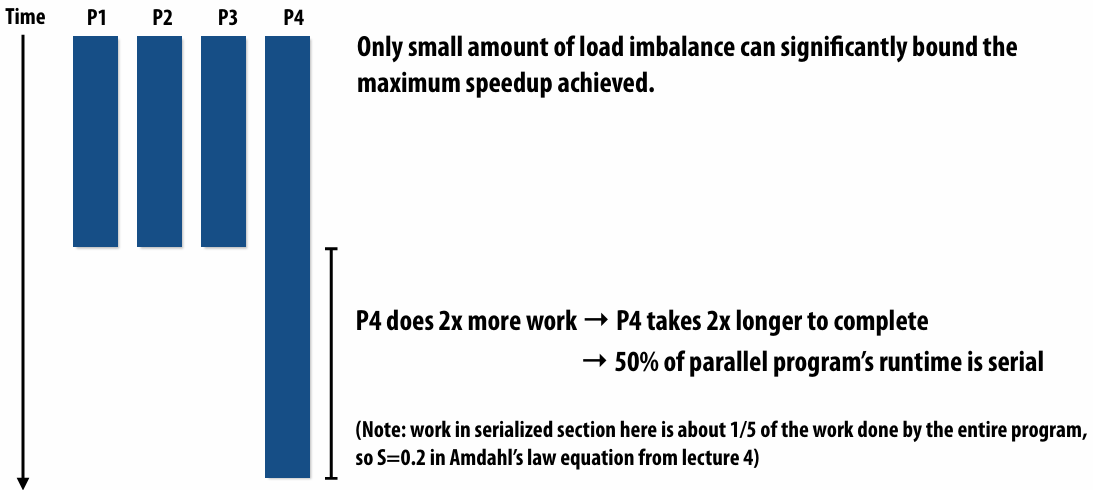

Amdahl’s Law: dependencies limit maximum speedup due to parallelism

Let S = the fraction(比例) of sequential execution that is inherently sequential (dependencies prevent parallel execution)

Then maximum speedup due to parallel execution ≤ 1/S

Max speedup on P processors given by:

Assignment

Assigning tasks to workers

Think of “tasks” as things to do

What are “workers”? (Might be threads, program instances, vector lanes, etc.)

Goals: achieve good workload balance, reduce communication costs

Can be performed statically (before application is run), or dynamically as program executes

Although programmer is often responsible for decomposition, many languages/runtimes take responsibility for assignment

static assignment using C++11 threads

Dynamic assignment using ISPC tasks

Orchestration

Involves:

Structuring communication

Adding synchronization to preserve(维持) dependencies if necessary

Organizing data structures in memory

Scheduling tasks

Goals: reduce costs of communication/sync, preserve locality of data reference, reduce overhead, etc.

Machine details impact many of these decisions

If synchronization is expensive, programmer might use it more sparsely(少)

Assignment to hardware

Assign “threads” (“workers”) to hardware execution units

Example 1: assignment to hardware by the operating system

e.g., map a thread to HW execution context on a CPU core

Example 2: assignment to hardware by the compiler

e.g., Map ISPC program instances to vector instruction lanes

Example 3: assignment to hardware by the hardware

e.g., Map CUDA thread blocks to GPU cores (discussed in a future lecture)

Many interesting decisions:

Place related threads (cooperating threads) on the same core (maximize locality, data sharing, minimize costs of comm/sync)

Place unrelated threads on the same core (one might be bandwidth limited and another might be compute limited) to use machine more efficiently

A parallel programming example

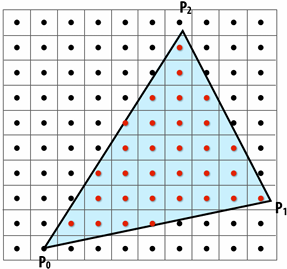

A 2D-grid based solver

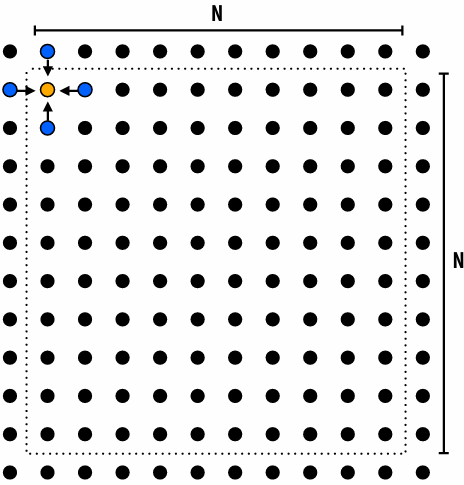

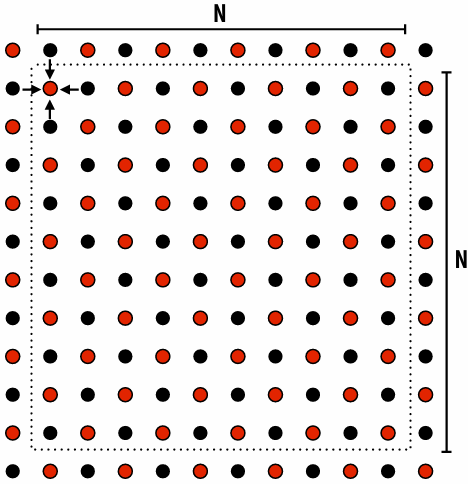

Problem: solve partial differential equation(偏微分方程) (PDE) on (N+2) x (N+2) grid

Solution uses iterative algorithm:

Perform Gauss-Seidel sweeps(高斯-赛德尔扫描) over grid until convergence

A[i,j] = 0.2 * (A[i,j] + A[i,j-1] + A[i-1,j] + A[i,j+1] + A[i+1,j]);

Grid solver algorithm: find the dependencies

Pseudocode for sequential algorithm is provided below

1 | const int n; |

Step 1: identify dependencies (problem decomposition phase)

Each row element depends on element to left

Each row depends on previous row

Note: the dependencies illustrated on this slide are grid element data dependencies in one iteration of the solver (in one iteration of the “while not done” loop)

There is independent work along the diagonals!

Good: parallelism exists!

Possible implementation strategy:

1.Partition grid cells on a diagonal into tasks

2.Update values in parallel

3.When complete, move to next diagonal

Bad: independent work is hard to exploit

Not much parallelism at beginning and end of computation

Frequent synchronization (after completing each diagonal)

Idea: improve performance by changing the algorithm to one that is more amenable(适合) to parallelism

Change the order that grid cell cells are updated

New algorithm iterates to same solution (approximately), but converges to solution differently

Note: floating-point values computed are different, but solution still converges to within error threshold(误差阈值)

Yes, we needed domain knowledge of the Gauss-Seidel method to realize this change is permissible

This is a common technique in parallel programming

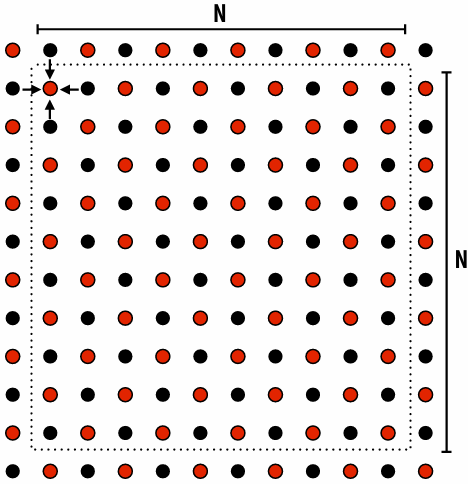

New approach: reorder grid cell update via red-black coloring

Reorder grid traversal(遍历顺序): red-black coloring

Update all red cells in parallel

When done updating red cells , update all black cells in parallel (respect dependency on red cells)

Repeat until convergence

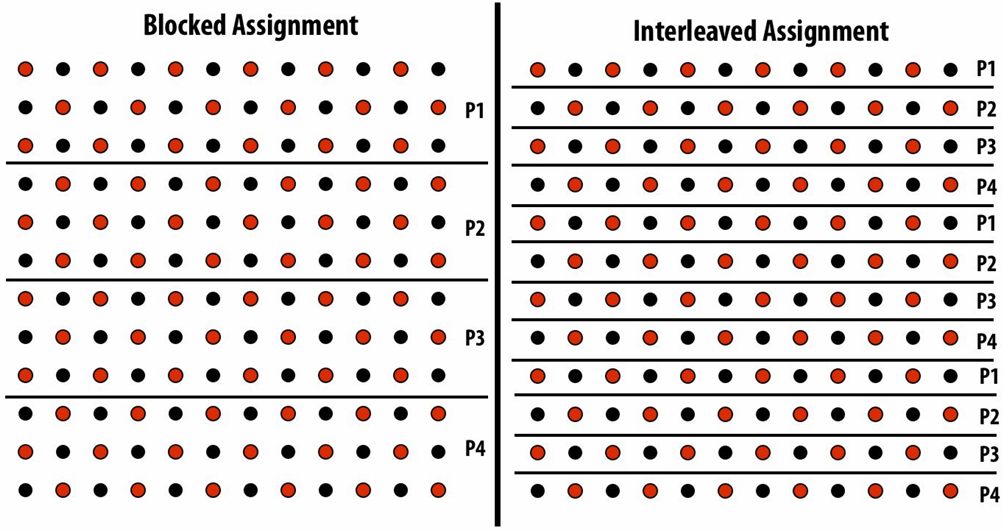

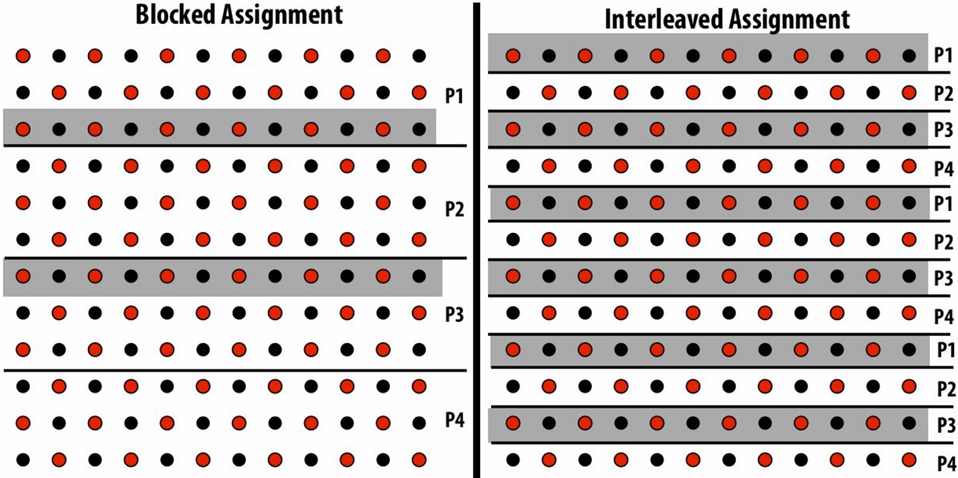

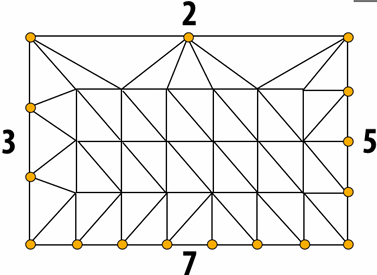

Possible assignments of work to processors

Question: Which is better? Does it matter?

Answer: It depends on the system this program is running on

Consider dependencies in the program

1.Perform red cell update in parallel

2.Wait until all processors done with update

3.Communicate updated red cells to other processors

4.Perform black cell update in parallel

5.Wait until all processors done with update

6.Communicate updated black cells to other processors

7.Repeat

Communication resulting from assignment

shaded area = data that must be sent to P2 each iteration

Blocked assignment requires less data to be communicated between processors

Two ways to think about writing this program

Data parallel thinking

SPMD / shared address space

Data-parallel expression of grid solver

Note: to simplify pseudocode: just showing red-cell update

1 | const int n; |

Shared address space (with SPMD threads) expression of solver

Programmer is responsible for synchronization

Common synchronization primitives:

Locks (provide mutual exclusion): only one thread in the critical region at a time

Barriers: wait for threads to reach this point

1 | // Assume these are global variables (accessible to all threads) |

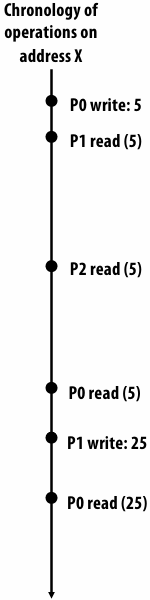

Synchronization in a shared address space

Shared address space model (abstraction)

Threads communicate by:

Reading/writing to shared variables in a shared address space(shared variables)

Communication between threads is implicit in memory loads/stores

Manipulating synchronization primitives(原语)

e.g., ensuring mutual exclusion via use of locks

This is a natural extension of sequential programming

Barrier synchronization primitivebarrier(num_threads)

Barriers are a conservative(保守) way to express dependencies

Barriers divide computation into phases

All computation by all threads before the barrier complete before any computation in any thread after the barrier begins

In other words, all computations after the barrier are assumed to depend on all computations before the barrier

Shared address space solver: one barrier

Idea: Remove dependencies by using different diff variables in successive(连续的) loop iterations

Trade off footprint(牺牲内存占用) for removing dependencies! (a common parallel programming technique)

1 | int n; |

Grid solver implementation in two programming models

Data-parallel programming model

Synchronization:

Single logical thread of control, but iterations of forall loop may be parallelized by the system (implicit barrier at end of forall loop body)

Communication

Implicit in loads and stores (like shared address space)

Special built-in primitives for more complex communication patterns: e.g., reduce

Shared address space

Synchronization:

Mutual exclusion required for shared variables (e.g., via locks)

Barriers used to express dependencies (between phases of computation)

Communication

Implicit in loads/stores to shared variables

Summary

Amdahl’s Law

Overall maximum speedup from parallelism is limited by amount of serial execution in a program

Aspects of creating a parallel program

Decomposition to create independent work, assignment of work to workers, orchestration (to coordinate(协调) processing of work by workers), mapping to hardware

We’ll talk a lot about making good decisions in each of these phases in the coming lectures

Focus today: identifying dependencies

Focus soon: identifying locality(局部性), reducing synchronization

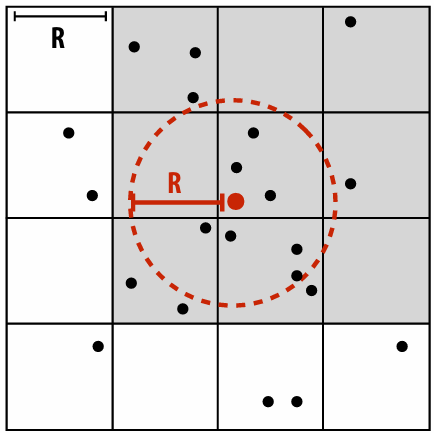

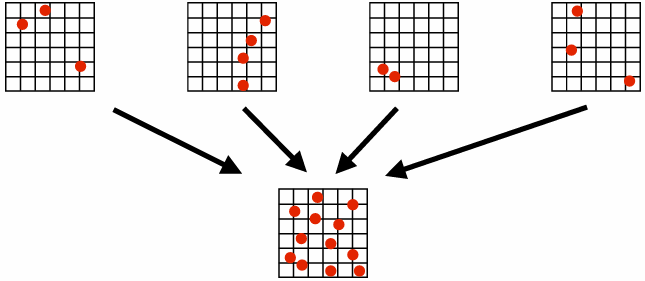

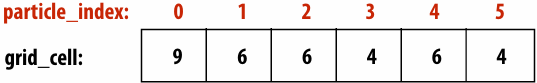

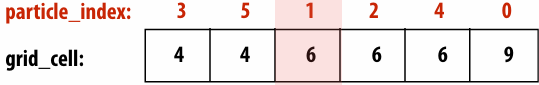

Lecture 5: Performance Optimization Part 1: Work Distribution and Scheduling

Programming for high performance

Optimizing the performance of parallel programs is an iterative process of refining choices for decomposition, assignment, and orchestration…

Key goals (that are at odds with each other) (彼此冲突)

Balance workload onto available execution resources

Reduce communication (to avoid stalls)

Reduce extra work (overhead) performed to increase parallelism, manage assignment, reduce communication, etc

TIP #1: Always implement the simplest solution first, then measure performance to determine if you need to do better

Balancing the workload

Ideally: all processors are computing all the time during program execution

(they are computing simultaneously, and they finish their portion of the work at the same time)

Static assignment

Assignment of work to threads does not depend on dynamic behavior

Assignment not necessarily set at compile-time (we call it static is the assignment is determined when the amount of work and number of workers is known: assignment may depend on runtime parameters such as input data size, number of threads, etc.)

Good aspects of static assignment: simple, essentially zero runtime overhead to perform assignment

When is static assignment applicable?

When the cost (execution time) of work and the amount of work is predictable, allowing the programmer to work out a good assignment in advance

Simplest example: it is known up front that all work has the same cost

When work is predictable, but not all jobs have same cost

Jobs have unequal, but known cost: assign equal number of tasks to processors to ensure good load balance (on average)

When statistics about execution time are predictable (e.g., same cost on average)

“Semi-static” assignment

Cost of work is predictable for near-term future

Idea: recent past is a good predictor of near future

Application periodically(定期) profiles(分析) its execution and re-adjusts assignment

Assignment is “static” for the interval between re-adjustments

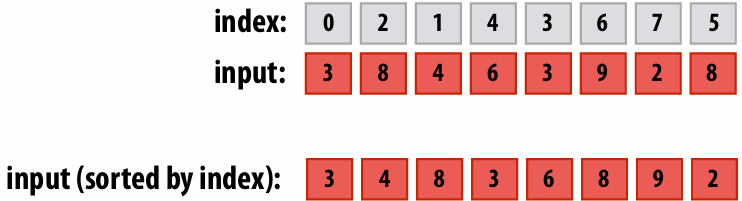

Dynamic assignment

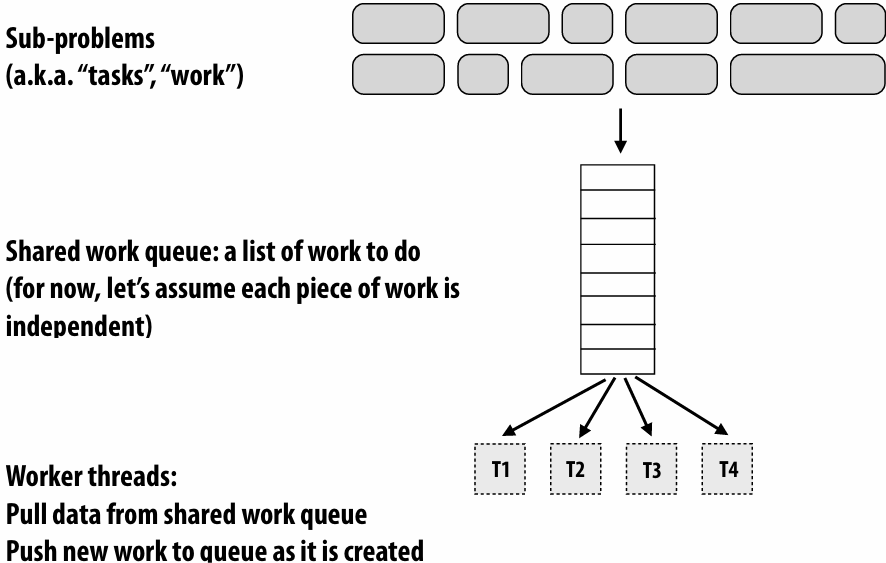

Program determines assignment dynamically at runtime to ensure a well-distributed load

(The execution time of tasks, or the total number of tasks, is unknown or unpredictable.)

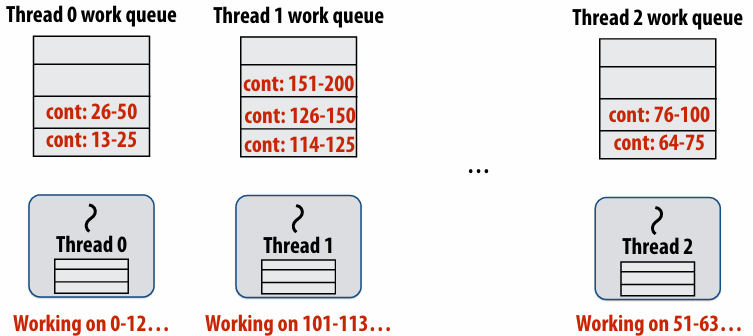

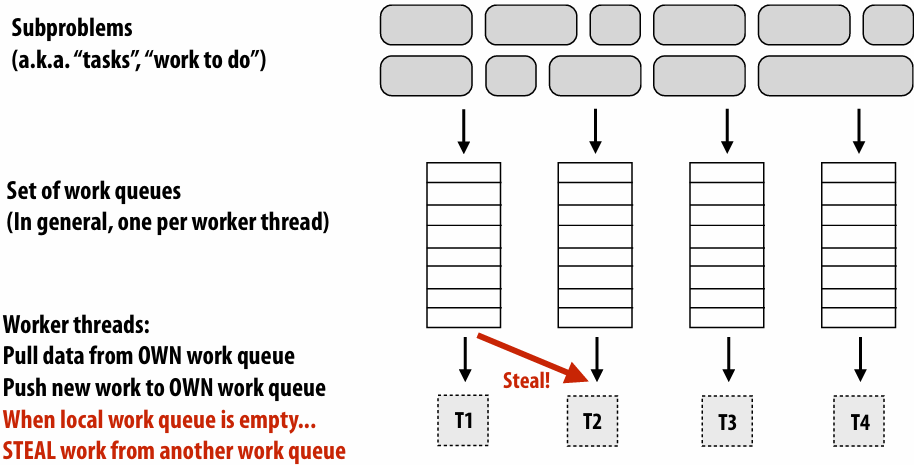

Dynamic assignment using a work queue

Choosing task size

Useful to have many more tasks than processors(many small tasks enables good workload balance via dynamic assignment)

Motivates small granularity tasks

But want as few tasks as possible to minimize overhead of managing the assignment

Motivates large granularity tasks

Ideal granularity depends on many factors(Common theme in this course: must know your workload, and your machine)

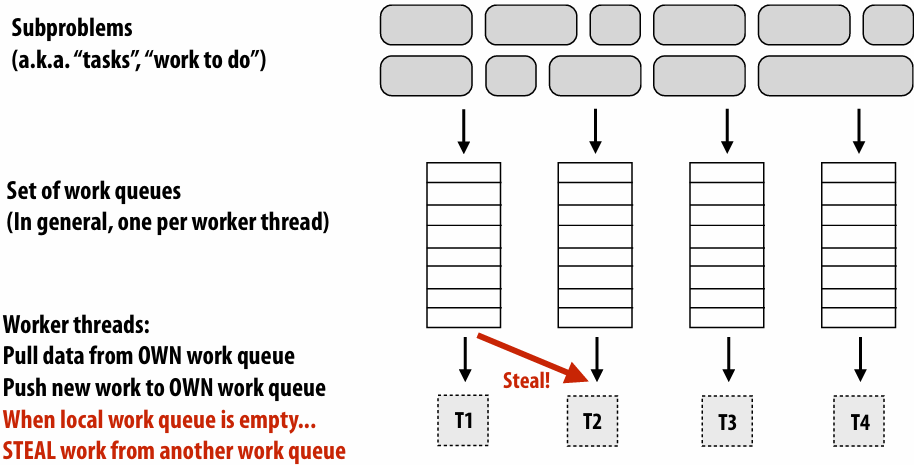

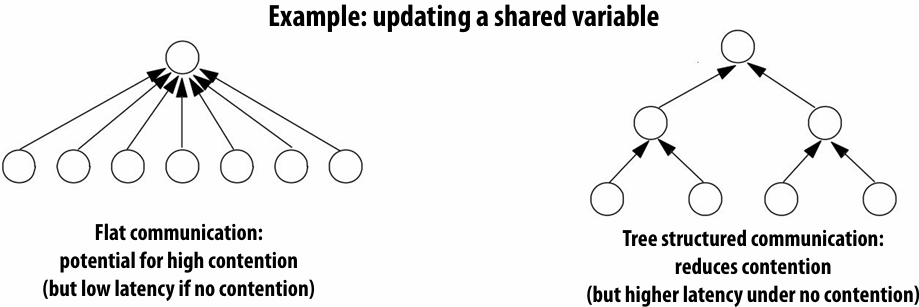

Decreasing synchronization overhead using distributed queues(avoid need for all workers to synchronize on single work queue)

Work in task queues need not be independent

Summary

Challenge: achieving good workload balance

Want all processors working all the time (otherwise(否则), resources are idle(空闲)!)

But want low-cost solution for achieving this balance

Minimize computational overhead (e.g., scheduling/assignment logic)

Minimize synchronization costs

Static assignment vs. dynamic assignment

Really, it is not an either/or(非此即彼) decision, there’s a continuum of choices

Use up-front(预先) knowledge about workload as much as possible to reduce load imbalance and task management/ synchronization costs (in the limit, if the system knows everything, use fully static assignment)

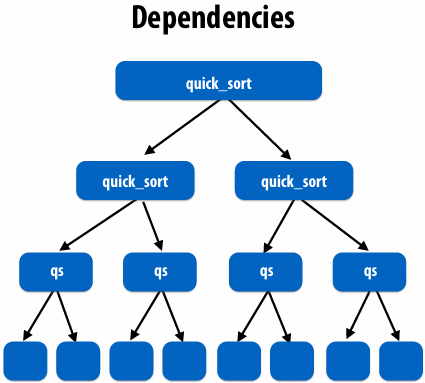

Scheduling fork-join parallelism

Common parallel programming patterns

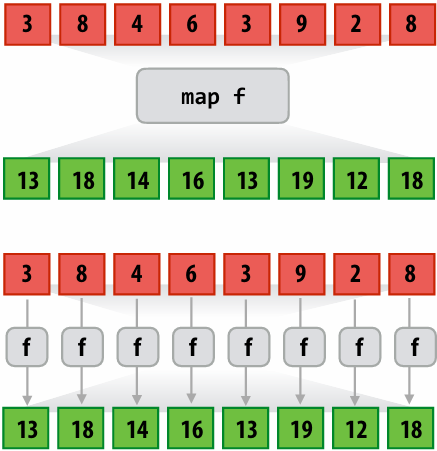

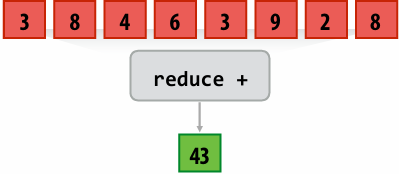

Data parallelism:

Perform same sequence of operations on many data elements

Explicit management of parallelism with threads:

Create one thread per execution unit (or per amount of desired concurrency)

Consider divide-and-conquer algorithms

Quick sort:

1 | // sort elements from ‘begin’ up to (but not including) ‘end’ |

Fork-join pattern

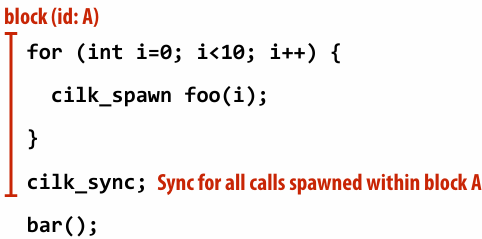

Natural way to express the independent work that is inherent in divide-and-conquer algorithms

This lecture’s code examples will be in Cilk Plus

C++ language extension

Originally developed at MIT, now adapted as open standard (in GCC, Intel ICC)cilk_spawn foo(args);

“fork” (create new logical thread of control)

Semantics: invoke foo, but unlike standard function call, caller may continue executing asynchronously with execution of foocilk_sync;

“join”

Semantics: returns when all calls spawned by current function have completed. (“sync up” with the spawned calls)

Note: there is an implicit cilk_sync at the end of every function that contains a cilk_spawn

(implication: when a Cilk function returns, all work associated with that function is complete)

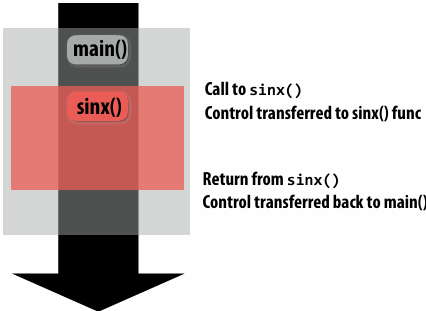

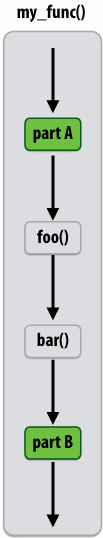

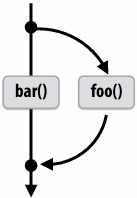

Call-return of a function in C(And many other languages)

1 | void my_func() { |

Semantics of a function call: Control moves to the function that is called (Thread executes instructions for the function)

When function returns, control returns back to caller (thread resumes(恢复) executing instructions from the caller)

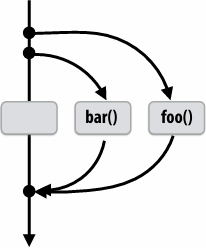

Basic Cilk Plus examples

1 | // foo() and bar() may run in parallel |

1 | // foo() and bar() may run in parallel |

Same amount of independent work first example, but potentially higher runtime overhead (due to two spawns vs. one)

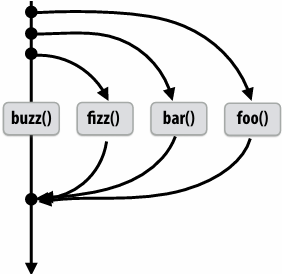

1 | // foo, bar, fizz, buzz, may run in parallel |

Abstraction vs. implementation

Notice that the cilk_spawn abstraction does not specify how or when spawned calls are scheduled to execute

Only that they may be run concurrently with caller (and with all other calls spawned by the caller)

An implementation of Cilk is correct if it implements cilk_spawn foo() the same way as it implementation a normal function call to foo()

But cilk_sync does serve as a constraint on scheduling

All spawned calls must complete before cilk_sync returns

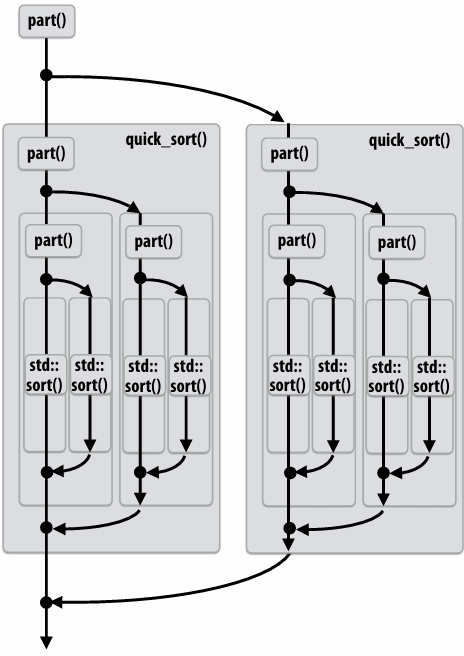

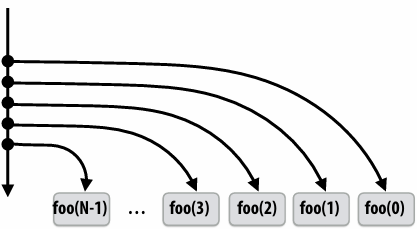

Parallel quicksort in Cilk Plus

1 | void quick_sort(int* begin, int* end){ |

Writing fork-join programs

Main idea: expose independent work (potential parallelism) to the system using cilk_spawn

Recall parallel programming rules of thumb

Want at least as much work as parallel execution capability (e.g., program should probably spawn at least as much work as needed to fill all the machine’s processing resources)

Want more independent work than execution capability to allow for good workload balance of all the work onto the cores

“parallel slack” = ratio of independent work to machine’s parallel execution capability (in practice: ~8 is a good ratio)

But not too much independent work so that granularity of work is too small (too much slack incurs overhead of managing fine-grained(细粒度) work)

Scheduling fork-join programs

Consider very simple scheduler:

Launch pthread for each cilk_spawn using pthread_create

Translate cilk_sync into appropriate pthread_join calls

Potential performance problems?

Heavyweight spawn operation

Many more concurrently running threads than cores

Context switching overhead

Larger working set than necessary, less cache locality

Note: now we are going to talk about the implementation of Cilk

Pool of worker threads

The Cilk Plus runtime maintains pool of worker threads

Think: all threads are created at application launch(It’s perfectly fine to think about it this way, but in reality, runtimes tend to be lazy and initialize worker threads on the first Cilk spawn. (This is a common implementation strategy, ISPC does the same with worker threads that run ISPC tasks.))

Exactly as many worker threads as execution contexts in the machine

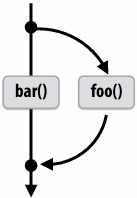

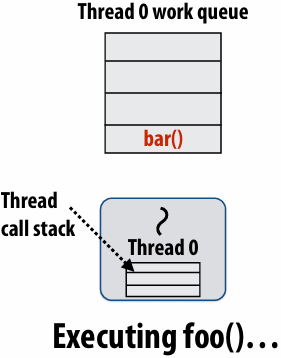

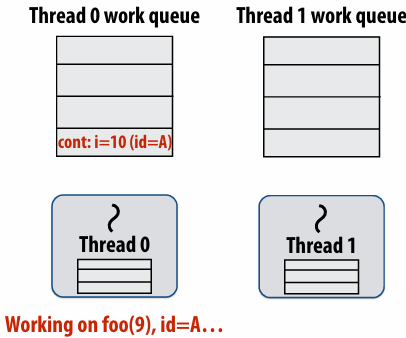

Consider execution of the following code

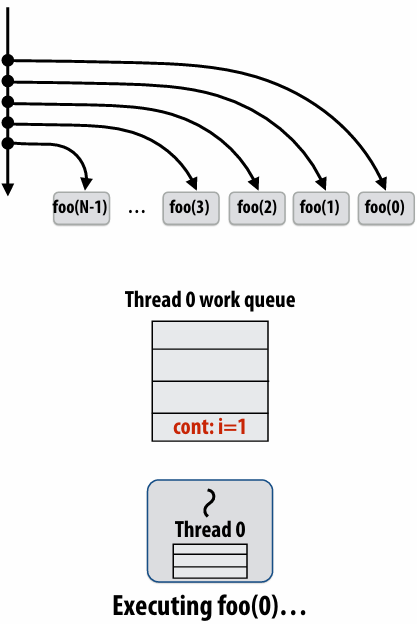

1 | // spawned child |

Specifically, consider execution from the point foo() is spawned

First, consider a serial implementation

Run child first… via a regular function call

Thread runs foo(), then returns from foo(), then runs bar()

Continuation is implicit in the thread’s stack

Per-thread work queues store “work to do”

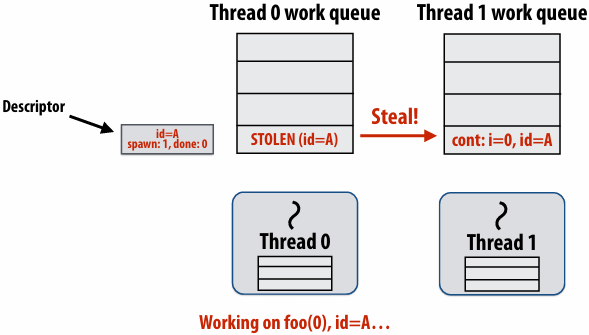

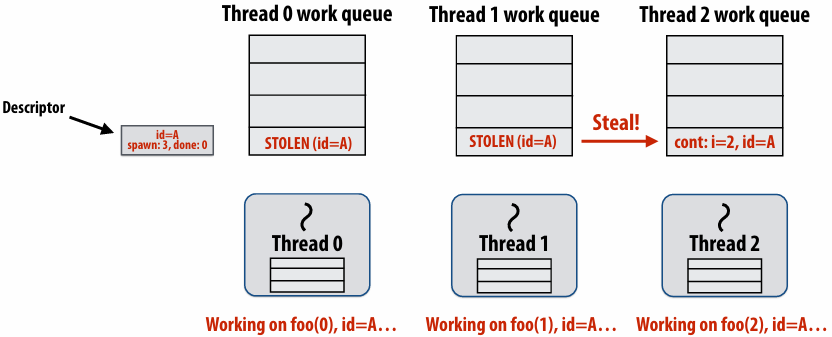

Upon reaching cilk_spawn foo(), thread places continuation in its work queue, and begins executing foo()

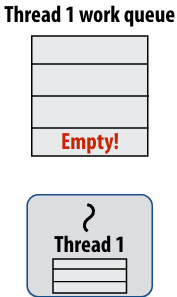

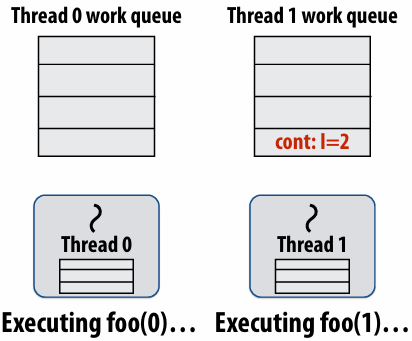

Idle threads “steal” work from busy threads

If thread 1 goes idle (a.k.a. there is no work in its own queue), then it looks in thread 0’s queue for work to do

Run continuation first: queue child for later execution

Child is made available for stealing by other threads (“child stealing”)

Run child first: enqueue continuation for later execution

Continuation is made available for stealing by other threads (“continuation stealing”)

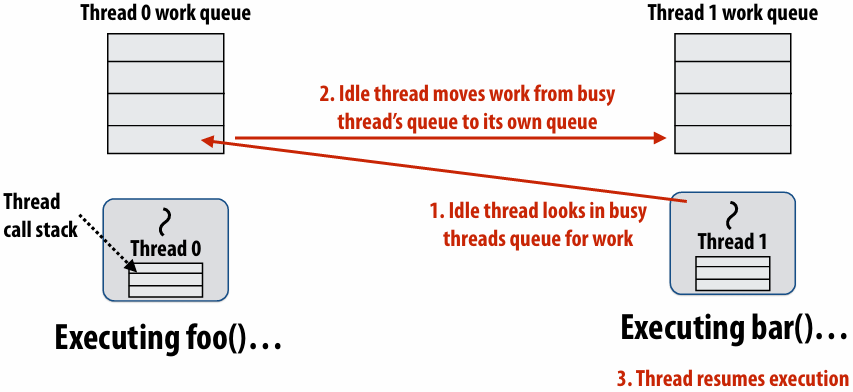

Run continuation first (“child stealing”)

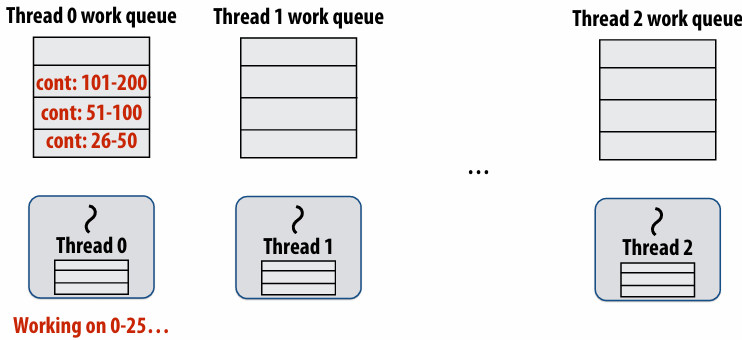

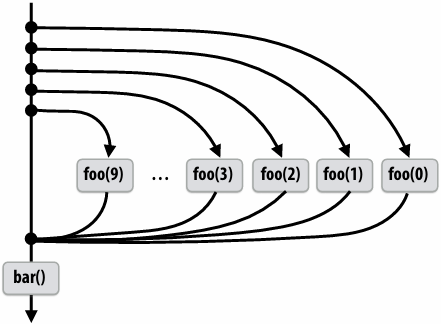

Caller thread spawns work for all iterations before executing any of it

Think: breadth-first traversal(广度优先遍历) of call graph. O(N) space for spawned work (maximum space)

If no stealing, execution order is very different than that of program with cilk_spawn removed

Run child first (“continuation stealing”)

Caller thread only creates one item to steal (continuation that represents all remaining iterations)

If no stealing occurs, thread continually pops continuation from work queue, enqueues new continuation (with updated value of i)

Order of execution is the same as for program with spawn removed

Think: depth-first traversal(深度优先遍历) of call graph

Enqueues continuation with i advanced by 1

If continuation is stolen, stealing thread spawns and executes next iteration

Can prove that work queue storage for system with T threads is no more than T times that of stack storage for single threaded execution

Scheduling quicksort: assume 200 elements

1 | void quick_sort(int* begin, int* end) { |

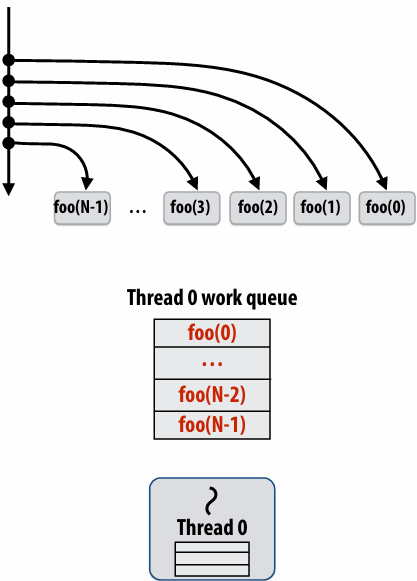

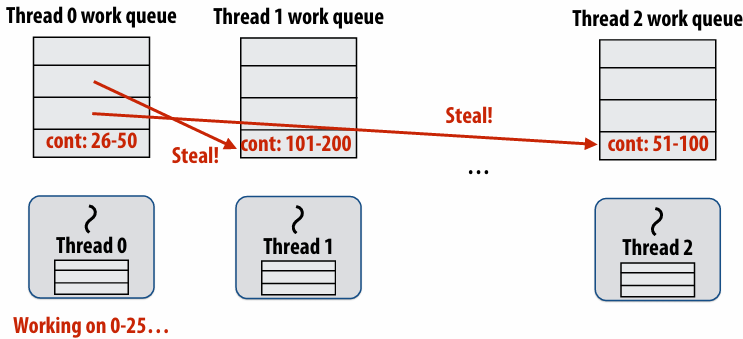

Implementing work stealing: dequeue per worker

Work queue implemented as a dequeue (double ended queue)

Local thread pushes/pops from the “tail” (bottom)

Remote threads steal from “head” (top)

Implementing work stealing: choice of victim

Idle threads randomly choose a thread to attempt to steal from

Steal work from top of dequeue:

Steals largest amount of work (reduce number of steals)

Maximum locality in work each thread performs (when combined with run child first scheme)

Stealing thread and local thread do not contend(争用) for same elements of dequeue(efficient lock-free implementations of dequeue exist)

Child-first work stealing scheduler anticipates divide-and-conquer parallelism

1 | for (int i=0; i<N; i++) { |

1 | void recursive_for(int start, int end) { |

Code at second generates work in parallel, (code at first does not), so it more quickly fills up parallel machine

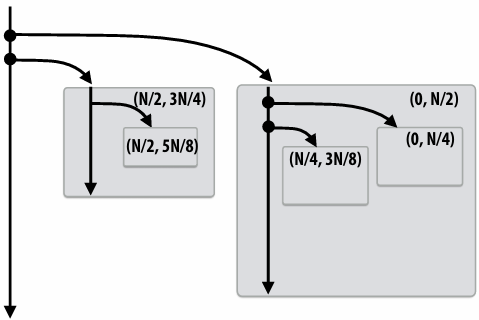

Implementing sync

Implementing sync: no stealing case

If no work has been stolen by other threads, then there’s nothing to do at the sync point

cilk_sync is a no-op

Implementing sync: stealing case

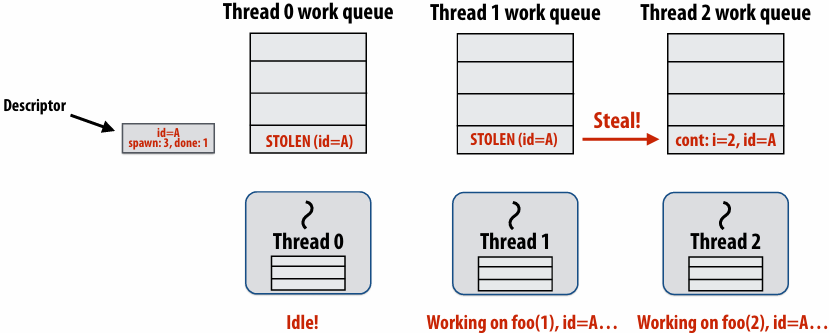

Idle thread 1 steals from busy thread 0

Note: descriptor(描述符) for block A created

The descriptor tracks the number of outstanding(未完成的) spawns for the block, and the number of those spawns that have completed

The 1 spawn tracked by the descriptor corresponds to foo(0) being run by thread 0. (Since the continuation is now owned by thread 1 after the steal.)

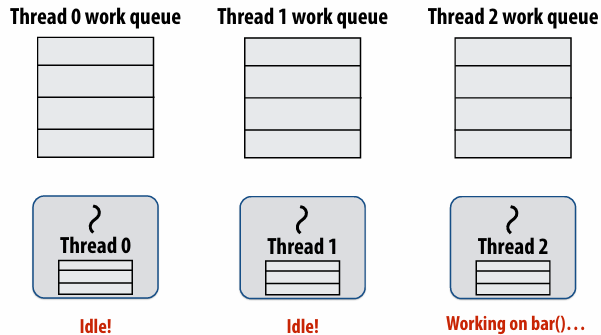

Thread 2 now resumes continuation and executes bar()

Note block A descriptor is now free

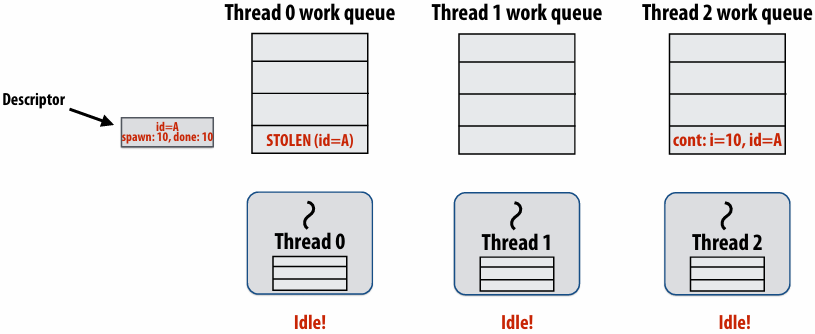

Cilk uses greedy join scheduling

Greedy join scheduling policy

All threads always attempt to steal if there is nothing to do

Threads only go idle if there is no work to steal in the system

Worker thread that initiated spawn may not be thread that executes logic after cilk_sync

Remember:

Overhead of bookkeeping(簿记) steals and managing sync points only occurs when steals occur

If large pieces of work are stolen, this should occur infrequently

Most of the time, threads are pushing/popping local work from their local dequeue

Cilk summary

Fork-join parallelism: a natural way to express divide-and-conquer algorithms

Discussed Cilk Plus, but many other systems also have fork/join primitives (e.g., OpenMP)

Cilk Plus runtime implements spawn/sync abstraction with a locality-aware(局部性感知) work stealing scheduler

Always run spawned child (continuation stealing)

Greedy behavior at join (threads do not wait at join, immediately look for other work to steal)

Lecture 6: Performance Optimization Part II: Locality, Communication, and Contention

shared address space model

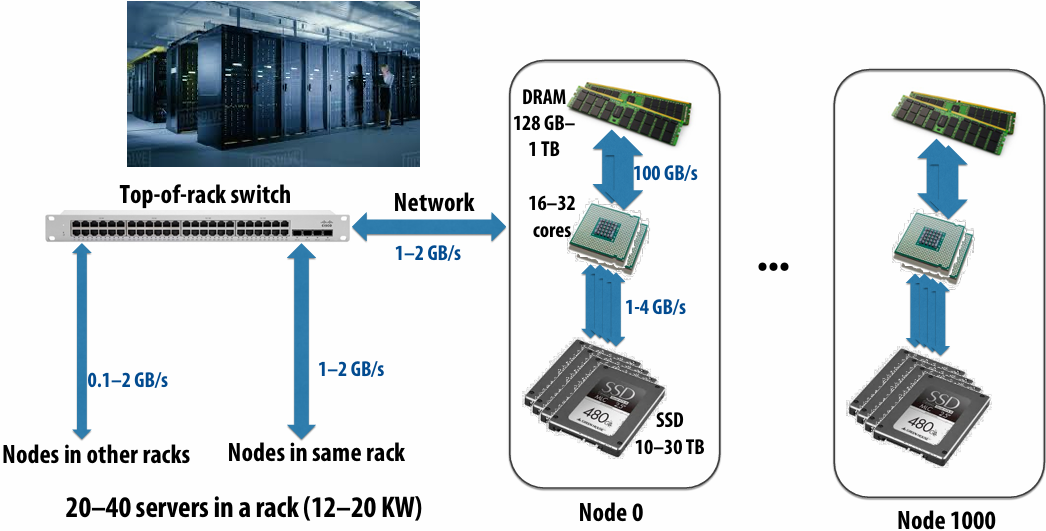

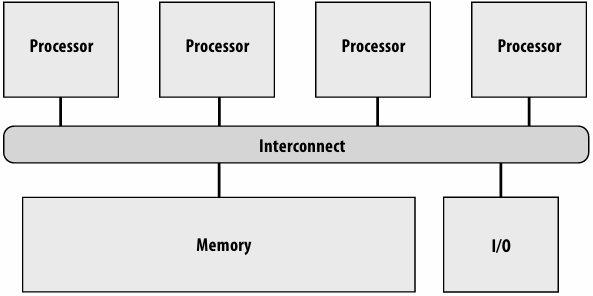

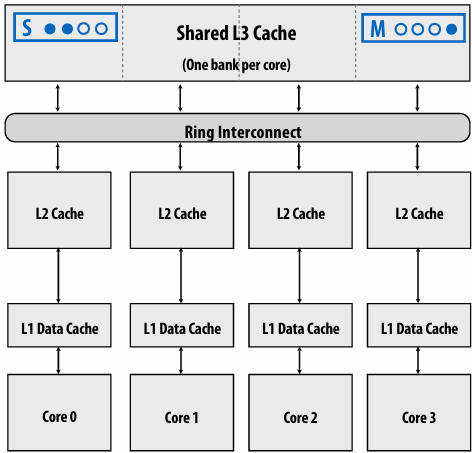

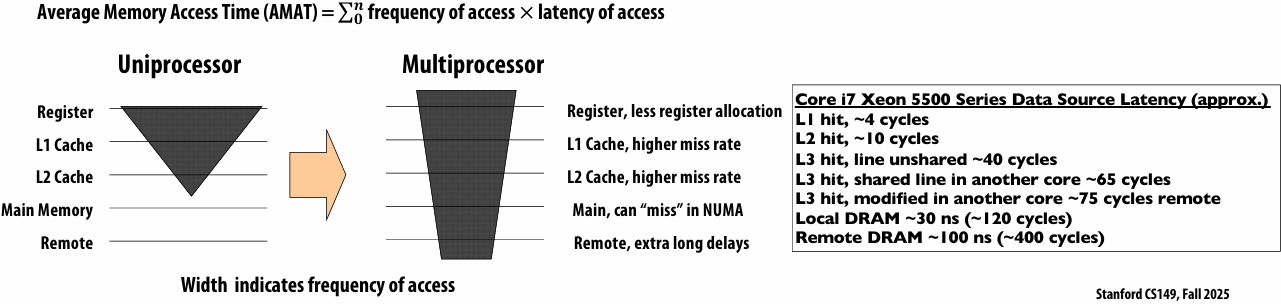

Non-uniform memory access (NUMA)

The latency of accessing a memory location may be different from different processing cores in the system

Bandwidth from any one location may also be different to different CPU cores(In practice, you’ll find NUMA behavior on a single-socket system as well (recall: different cache slices are a different distance from each core))

Communication abstraction

Threads read/write variables in shared address space

Threads manipulate synchronization primitives: locks, atomic ops, etc

Logical extension of uniprocessor programming(But NUMA implementations require reasoning about locality for performance optimization)

Requires hardware support to implement efficiently

Any processor can load and store from any address

Can be costly to scale(扩展) to large numbers of processors(one of the reasons why high-core count processors are expensive)

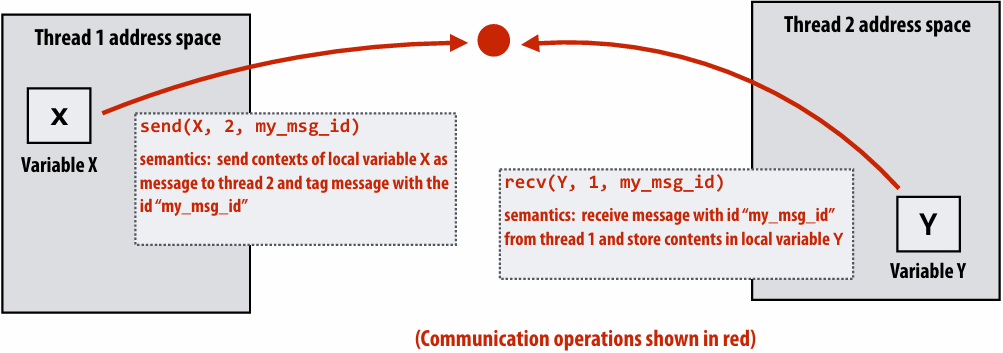

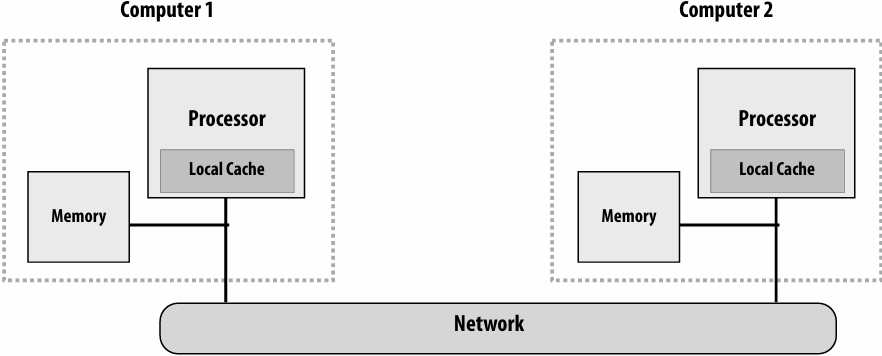

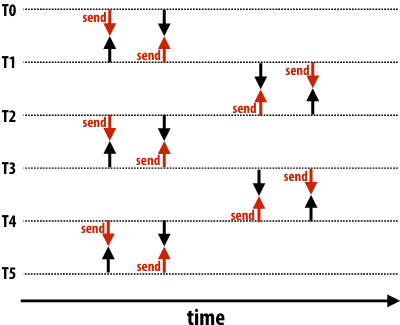

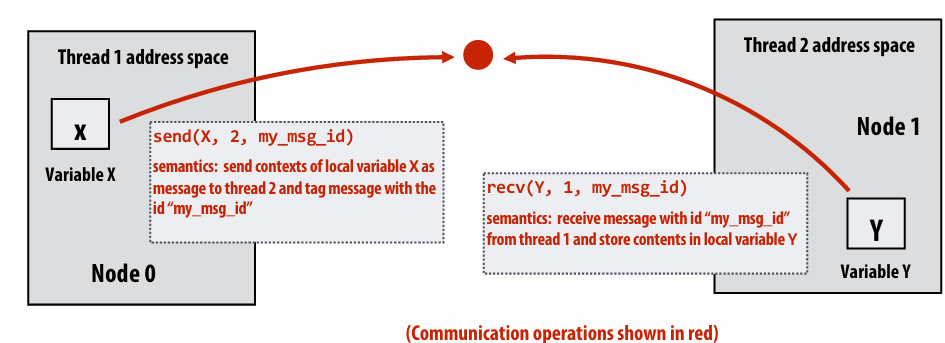

Message passing model

Message passing model (abstraction)

Threads operate within their own private address spaces

Threads communicate by sending/receiving messages

send: specifies(指定) recipient, buffer to be transmitted, and optional message identifier (“tag”)

receive: sender, specifies buffer to store data, and optional message identifier

Sending messages is the only way to exchange data between threads 1 and 2

Message passing (implementation)

Hardware need not implement a single shared address space for all processors (it only needs to provide mechanisms to communicate messages between nodes)

Can connect commodity(通用) systems together to form a large parallel machine(message passing is a programming model for clusters and supercomputers)

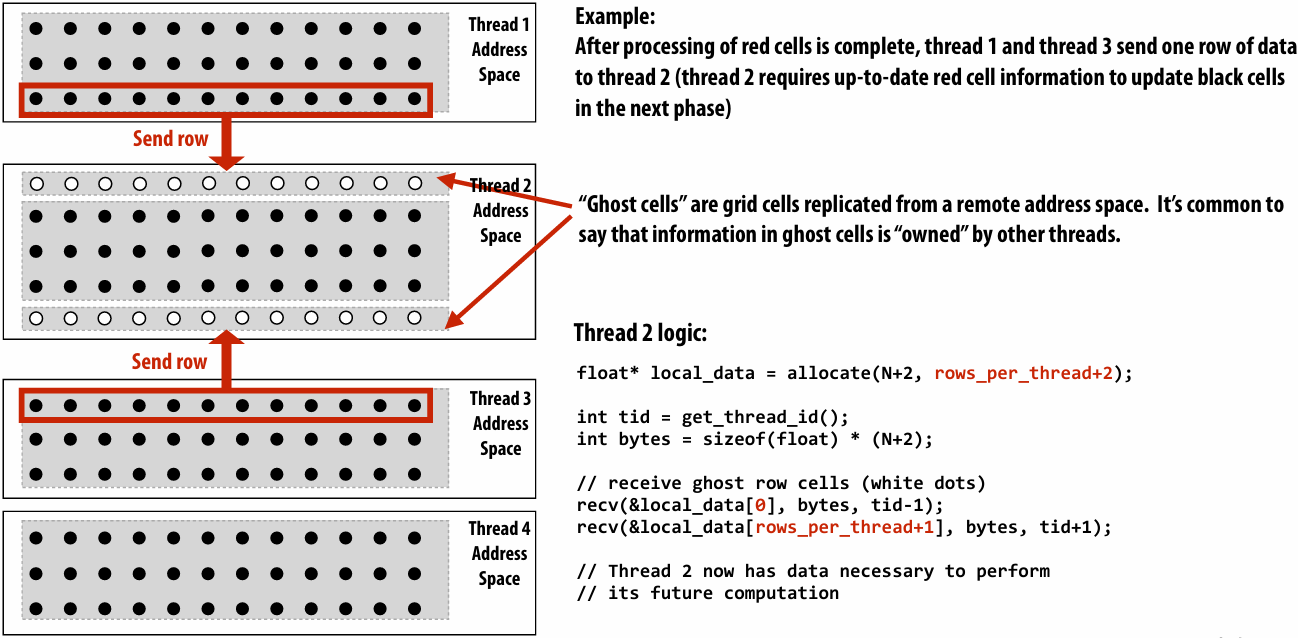

Message passing expression of solver

Recall the grid solver application:

Update all red cells in parallel

When done updating red cells , update all black cells in parallel (respect dependency on red cells)

Repeat until convergence

Let’s think about expressing a parallel grid solver with communication via messages

One possible message passing machine configuration: a cluster of two machines

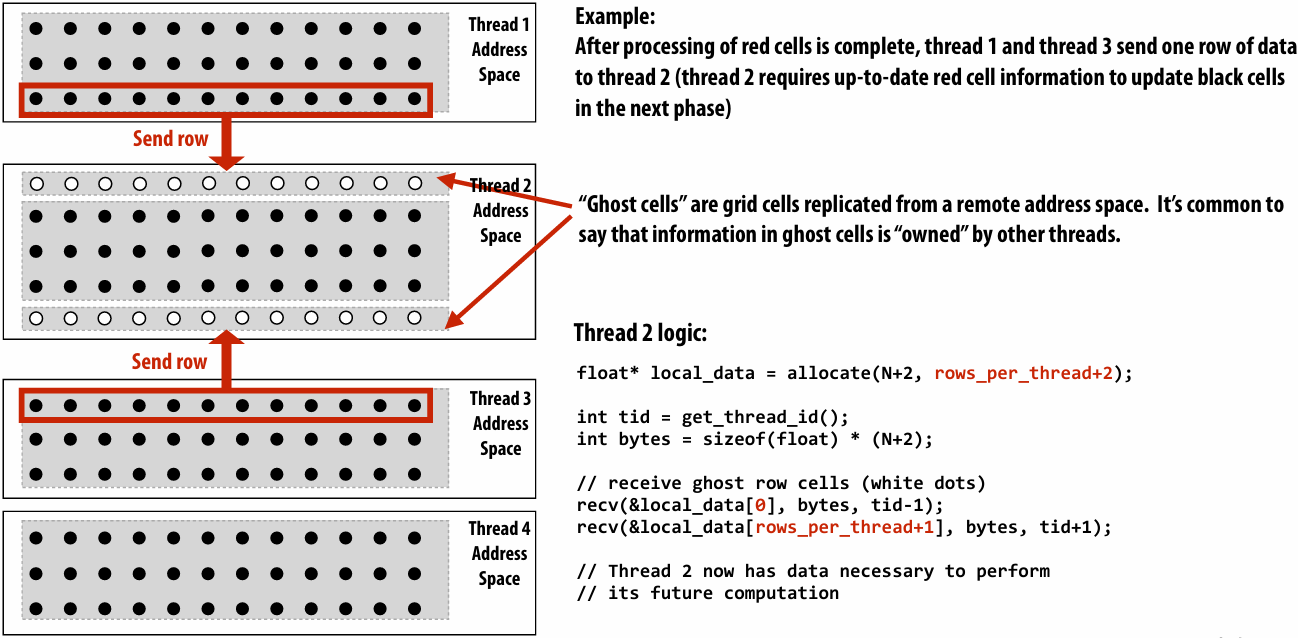

Message passing model: each thread operates in its own address space

In this figure: four threads

The grid data is partitioned into four allocations, each residing in one of the four unique thread address spaces

(four per-thread private arrays)

Data replication is now required to correctly execute the program

Grid data stored in four separate address spaces (four private arrays)

Message passing solver

Similar structure to shared address space solver, but now communication is explicit in message sends and receives

1 | int N; |

Notes on the message passing example

Computation

Array indexing is relative to local address space

Communication:

Performed by sending and receiving messages

Bulk(批量) transfer: communicate entire rows at a time

Synchronization:

Performed by sending and receiving messages

Consider how to implement mutual exclusion, barriers, flags using messages

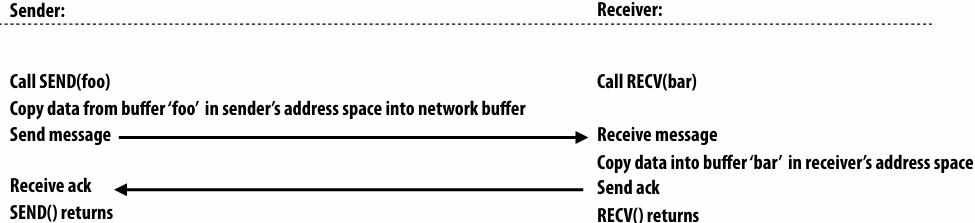

Synchronous (blocking) send and receive

send(): call returns when sender receives acknowledgement that message data resides(存在) in address space of receiver

recv(): call returns when data from received message is copied into address space of receiver and acknowledgement sent back to sender

硬件确保 message 和 ack 传输成功

There is a big problem with our message passing solver if it uses synchronous send/recv

同时 send/receive 会导致 deadlock

Message passing solver (fixed to avoid deadlock)

Send and receive ghost rows to “neighbor threads”

Even-numbered threads send, then receive

Odd-numbered thread recv, then send

1 | int N; |

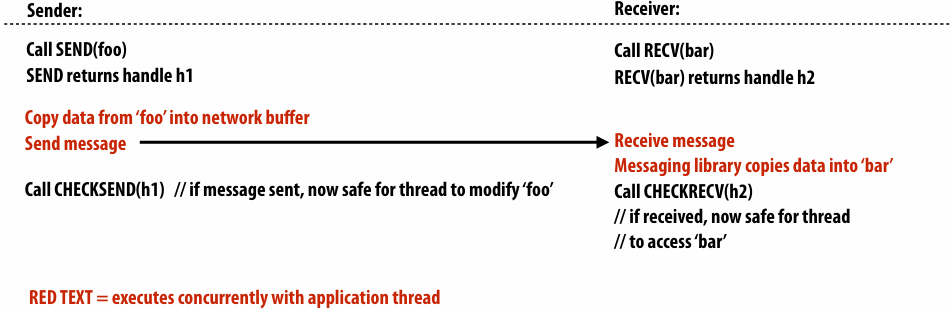

Non-blocking asynchronous send/recv

send(): call returns immediately

Buffer provided to send() cannot be modified by calling thread since message processing occurs concurrently with thread execution

Calling thread can perform other work while waiting for message to be sent

recv(): posts intent(意图) to receive in the future, returns immediately

Use checksend(), checkrecv() to determine actual status of send/receipt

Calling thread can perform other work while waiting for message to be received

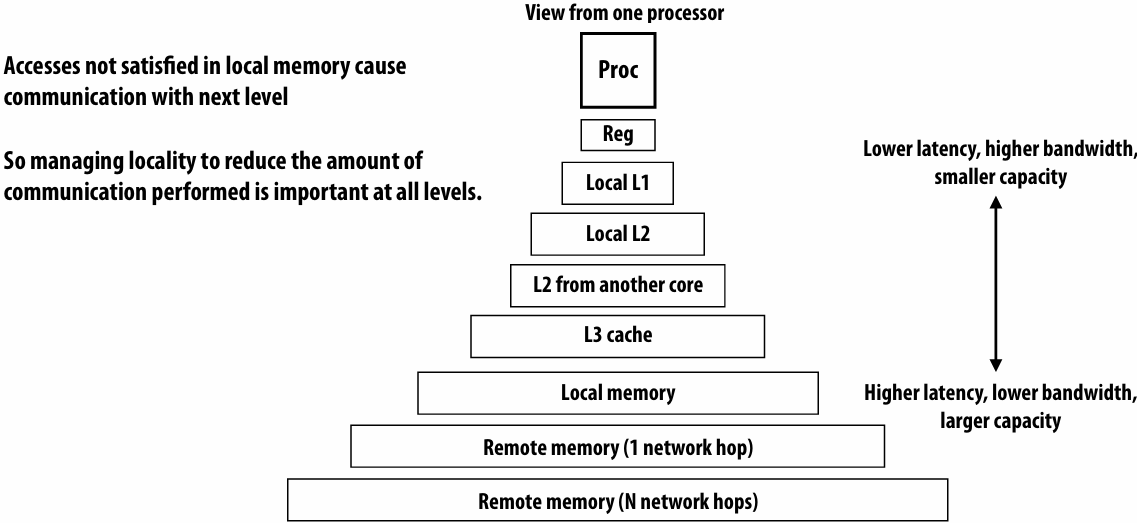

When I talk about communication, I’m not just referring to messages between machines

More examples:

Communication between cores on a chip

Communication between a core and its cache

Communication between a core and memory

Think of a parallel system as an extended memory hierarchy(层次结构)

I want you to think of “communication” generally:

Communication between a processor and its cache

Communication between processor and memory (e.g., memory on same machine)

Communication between processor and a remote memory(e.g., memory on another node in the cluster, accessed by sending a network message)

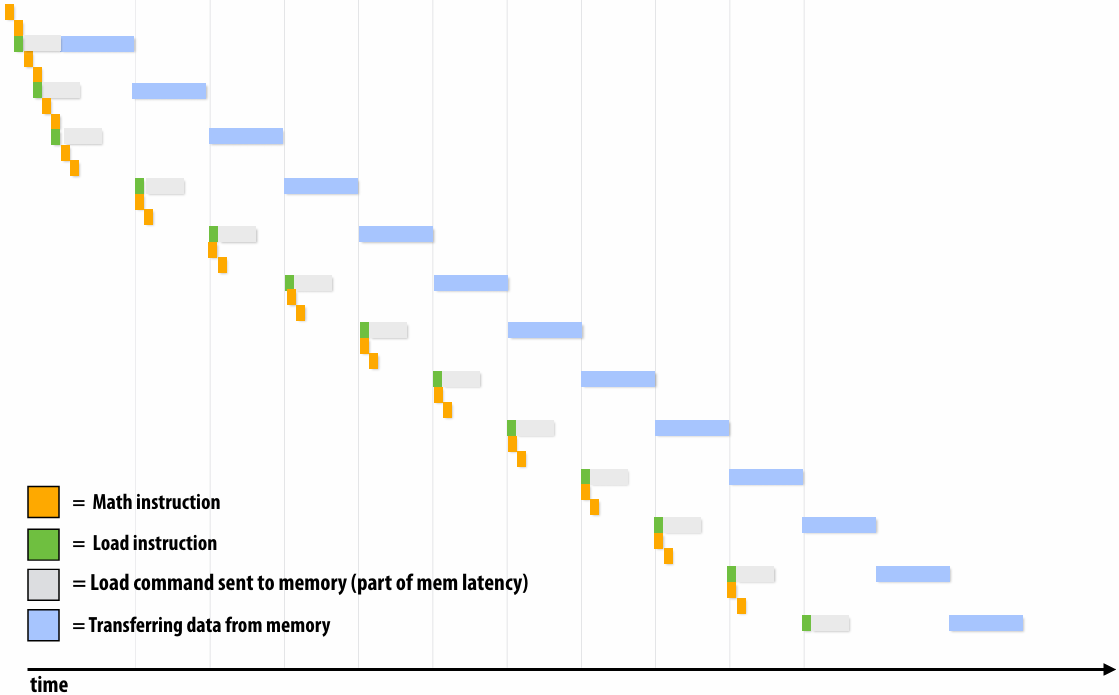

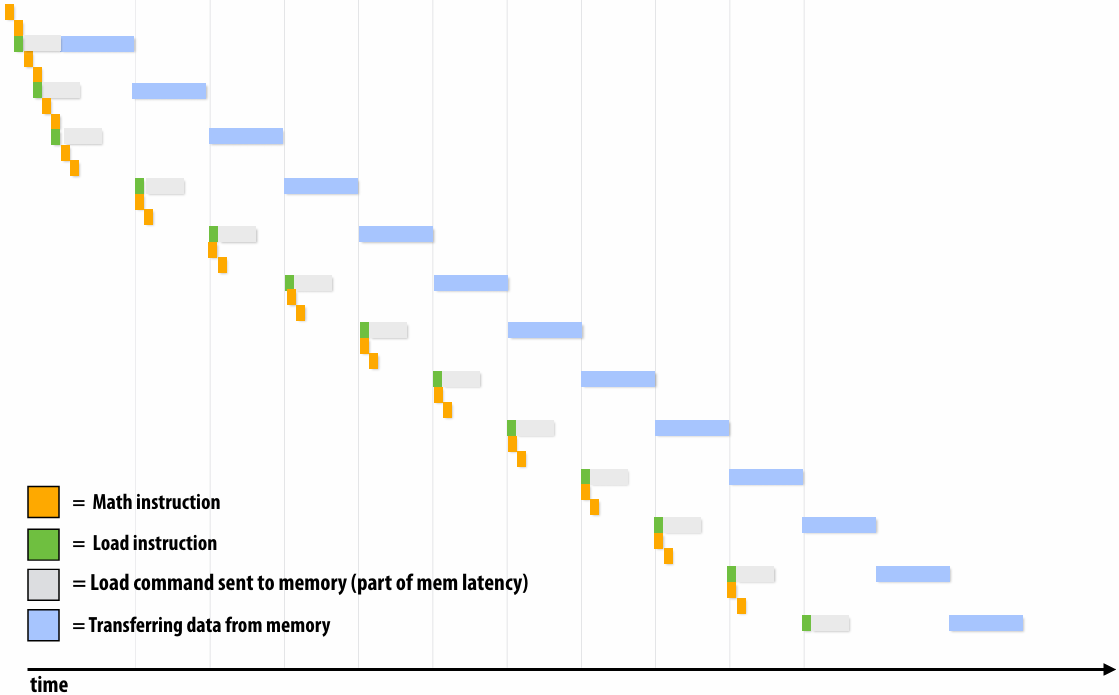

Discussion of bandwidth-limited execution (from lecture 3)

This was an example where the processor executed 2 instructions for each cache line load

How do you tell from the figure that the memory bus is fully utilized?

观察图中的蓝色长条(代表从内存传输数据的时间),在进入稳态(Steady State)后,发现一个蓝色长条的终点紧接着下一个蓝色长条的起点,没有间隙意味着内存总线在时间轴上是 100% 占满的。它始终在传输数据,没有任何空闲时间。即使核心(Core)在红色区域停顿(Stalled),内存总线依然在全力奔跑,说明总线被完全利用

How would you illustrate higher memory latency (keep in mind memory requests are pipelined and memory bus bandwidth is not changed)?

内存延迟 (Latency):从 CPU 发出内存请求到数据返回之间的等待时间

内存总线带宽 (Bandwidth):单位时间内内存系统能够传输的最大数据量

内存延迟体现在“发送加载命令(灰色)”到“数据开始传输(蓝色起始)”之间的间隔。如果延迟变高,这个间隔(灰色和蓝色之间的距离)会拉长。由于带宽没变,蓝色长条的宽度(长度)不会变。由于是流水线化的,只要请求够多,蓝色长条依然会保持“首尾相衔”。整排蓝色长条会相对于指令簇整体向右平移。在程序刚开始时,处理器等待第一份数据回来的时间(初始延迟)会变长,但一旦进入稳态,数据传输的频率(吞吐量)保持不变

How would the figure change if memory bus bandwidth was increased?

加带宽意味着在相同时间内可以传输更多数据,或者传输相同大小(一个缓存行)的数据所需的时间变短。每个蓝色长条会变窄(水平方向变短)。如果数学计算(黄色)的时间不变,蓝色长条变窄意味着数据回来的更快了,指令簇之间的空白区域(处理器停顿/Stalls)会缩小,整体执行速度加快

Would there still be processor stalls if the ratio of math instructions to load instructions was significantly increased? Why?

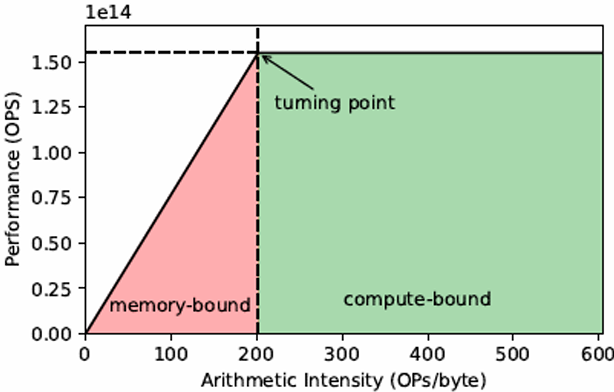

可能不会(停顿会消失),当每个加载指令对应的数学指令变多时,黄色长条的总长度(计算时间)会增加。如果计算时间长到足以覆盖掉数据传输的时间(蓝色长条的时间),那么当核心算完当前数据时,下一份数据已经传输完成了,此时程序从“访存受限(Memory-bound)”转变为“计算受限(Compute-bound)”。处理器始终在忙于计算,不再需要停下来等数据。即与计算强度(Arithmetic Intensity)有关

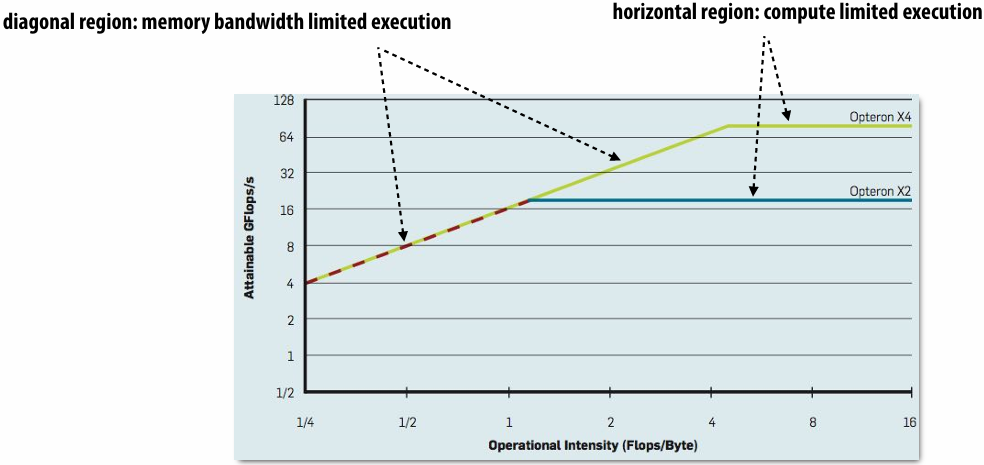

Arithmetic intensity

amount of computation (e.g., instructions) / amount of communication (e.g., bytes)

If numerator(分子) is the execution time of computation, ratio gives average bandwidth requirement of code(倒数)

1 / “Arithmetic intensity” = communication-to-computation ratio

Some people like to refer to communication to computation ratio

I find arithmetic intensity a more intuitive(直观) quantity, since higher is better

It also sounds cooler

High arithmetic intensity (low communication-to-computation ratio) is required to efficiently utilize modern parallel processors since the ratio of compute capability to available bandwidth is high (recall element-wise vector multiply example from lecture 3)

Two reasons for communication: inherent vs. artifactual communication

Inherent(固有) communication

Communication that must occur in a parallel algorithm

The communication is fundamental to the algorithm

In our messaging passing example at the start of class, sending ghost rows was inherent communication

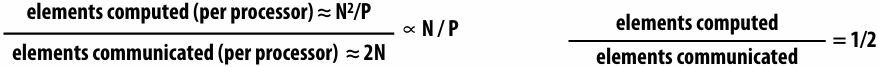

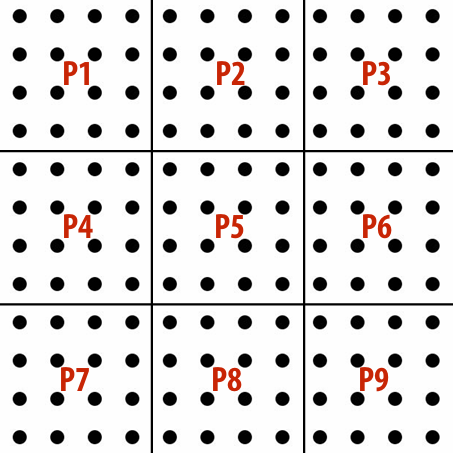

Reducing inherent communication

Good assignment decisions can reduce inherent communication(increase arithmetic intensity)

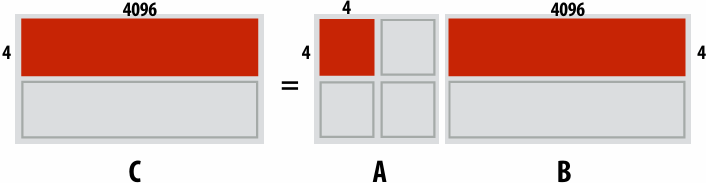

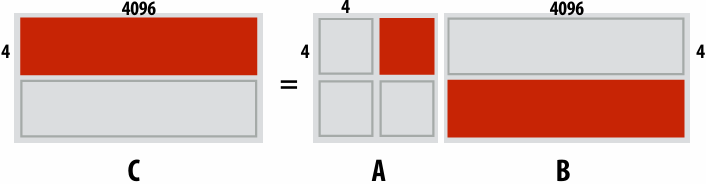

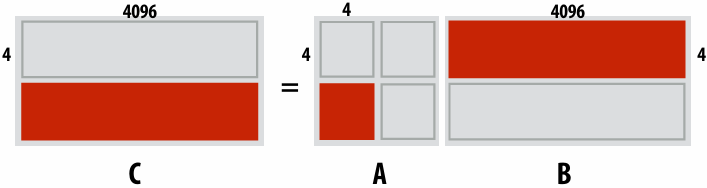

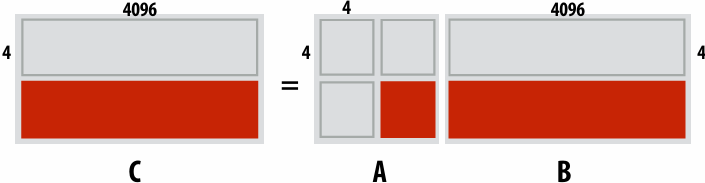

1D blocked assignment: N x N grid 1D interleaved assignment: N x N grid

2D blocked assignment: N x N grid

N2 elements

P processors

elements computed(per processor): N2/P

elements communicated(per processor):

arithmetic intensity:

Asymptotically(渐进地) better communication scaling than 1D blocked assignment

Communication costs increase sub-linearly(亚线性) with(随) P

Assignment captures 2D locality of algorithm

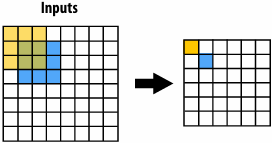

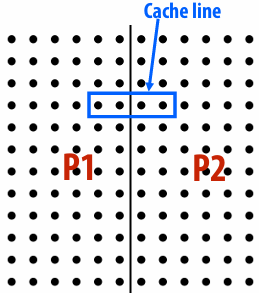

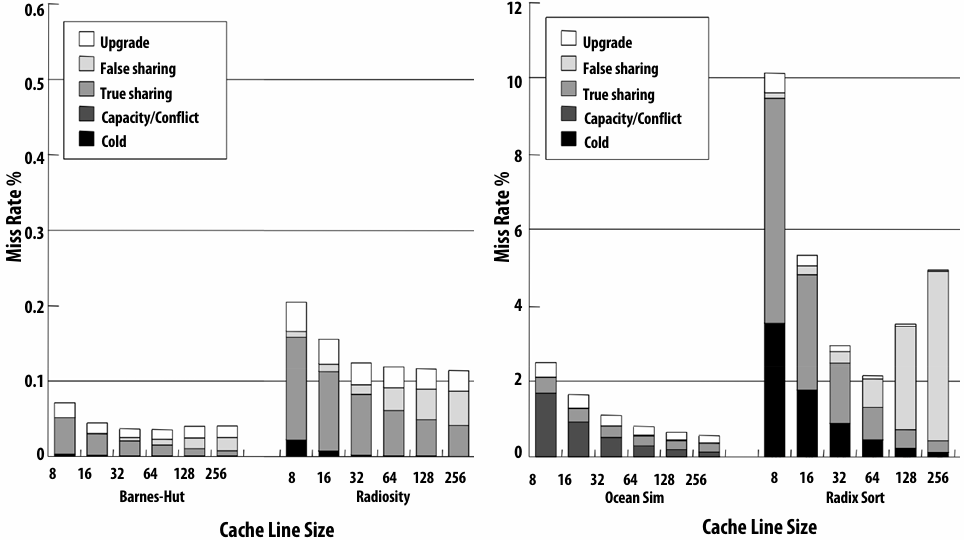

Artifactual(人工) communication

Inherent communication: information that fundamentally must be moved between processors to carry out the algorithm given the specified assignment (assumes unlimited capacity caches, minimum granularity transfers, etc.)

Artifactual communication: all other communication (artifactual communication results from practical details of system implementation)

Example: Artifactual communication arises from the behavior of caches

In this case: the communication is between memory and the processor

Data access in grid solver: row-major traversal

Assume row-major grid layout

Assume cache line is 4 grid elements

Cache capacity is 24 grid elements (6 lines)

Blue elements show data that is in cache after completing update to red element

Blue elements show data in cache at end of processing first row

Although elements (x,y)=(0,1), (1,1), (2,1), (0,2), and (2,2) have been accessed previously, they are no longer present in cache at start of processing the first output element in row 2

As a result, this program loads three cache lines for every four elements of output

Artifactual communication examples

System has minimum granularity of data transfer (system must communicate more data than what is needed by application)

Program loads one 4-byte float value but entire 64-byte cache line must be transferred from memory (16x more communication than necessary)

System operation might result in unnecessary communication:

Program stores 16 consecutive 4-byte float values, and as a result the entire 64-byte cache line is loaded from memory, entirely overwritten, then subsequently stored to memory (2x overhead… load was unnecessary since entire cache line was overwritten)

Finite replication capacity: the same data communicated to processor multiple times because cache is too small to retain it between accesses (capacity misses)

Techniques for reducing Artifactual communication

Improving temporal locality by changing grid traversal order

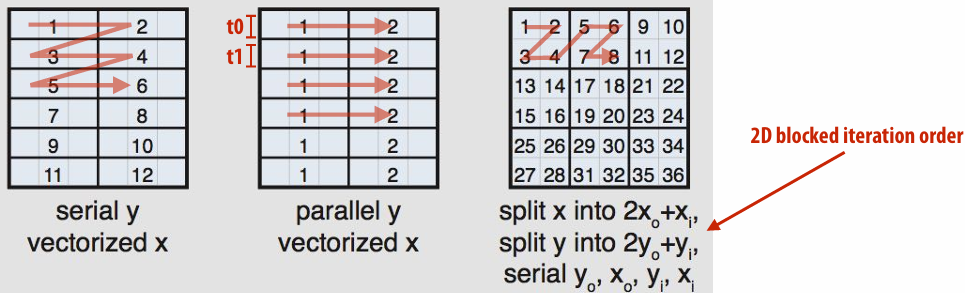

“Blocking”: reorder computation to reduce capacity misses

“Blocked” iteration order

(diagram shows state of cache after f inishing work from first row of first block)

Now load two cache lines for every six elements of output

Improving temporal(时间) locality by “fusing(融合)” loops

1 | // Two loads, one store per math op (arithmetic intensity = 1/3) |

1 | // Four loads, one store per 3 math ops (arithmetic intensity = 3/5) |

Code on top is more modular(模块化) (e.g, array-based math library like numPy in Python)

Code on bottom performs much better

Optimization: improve arithmetic intensity by sharing data

Exploit sharing: co-locate(共同定位) tasks that operate on the same data

Schedule threads working on the same data structure at the same time on the same processor

Reduces inherent communication

Contention

A resource can perform operations at a given throughput (number of transactions per unit time)

Memory, communication links, servers, CA’s at office hours, etc

Contention occurs when many requests to a resource are made within a small window of time(the resource is a “hot spot”)

Example: distributed work queues reduce contention(contention in access to single shared work queue)

Worker threads:

Pull data from OWN work queue

Push new work to OWN work queue

(no contention when all processors have work to do)

When local work queue is empty…

STEAL work from random work queue

(synchronization okay at this point since the thread would have sat idle anyway)

Summary: reducing communication costs

Reduce overhead of communication to sender/receiver

Send fewer messages, make messages larger (amortize(分摊) overhead)

Coalesce(合并) many small messages into large ones

Reduce latency of communication

Application writer: restructure code to exploit locality

Hardware implementor: improve communication architecture

Reduce contention

Replicate contended resources (e.g., local copies, fine-grained(细粒度) locks)

Stagger(错开) access to contended resources

Increase communication/computation overlap(重叠)

Application writer: use asynchronous communication (e.g., async messages)

Requires additional concurrency in application (more concurrency than number of execution units)

Here are some tricks for understanding the performance of parallel software

Remember: Always, always, always try the simplest parallel solution first, then measure performance to see where you stand

A useful performance analysis strategy

Determine if your performance is limited by computation, memory bandwidth (or memory latency), or synchronization?

Try and establish “high watermarks”

What’s the best you can do in practice?

How close is your implementation to a best-case scenario?

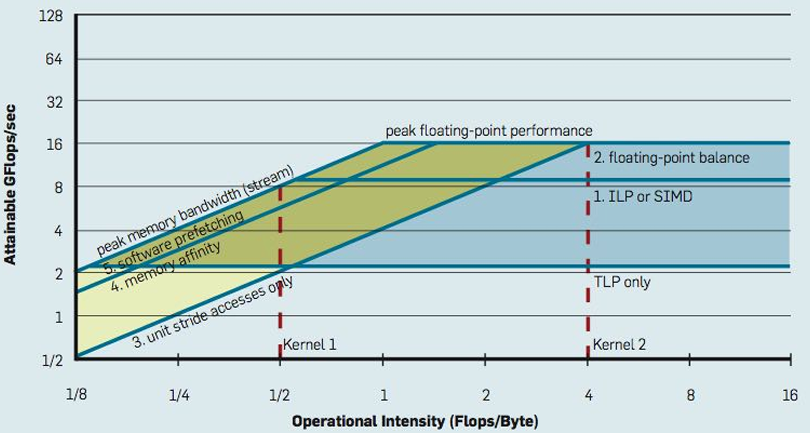

Roofline model

In plot below, different points on the X axis correspond to different programs with different arithmetic intensities

The Y axis is the maximum obtainable instruction throughput for a program with a given arithmetic intensity

斜率是带宽(bandwidth)

Roofline model: optimization regions

Use various levels of optimization in benchmarks(基准)(e.g., best performance with and without using SIMD instructions)

Establishing high watermarks

Computation, memory access, and synchronization are almost never perfectly overlapped. As a result, overall performance will rarely be dictated(决定) entirely by compute or by bandwidth or by sync. Even so, the sensitivity of performance change to the above program modifications can be a good indication of dominant costs

Add “math” (non-memory instructions)

Does execution time increase linearly with operation count as math is added?(If so, this is evidence that code is instruction-rate limited)

Remove almost all math, but load same data

How much does execution time decrease? If not much, you might suspect memory bottleneck

Change all array accesses to A[0]

How much faster does your code get? (This establishes an upper bound on benefit of improving locality of data access)

Remove all atomic operations or locks

How much faster does your code get? (provided(假设) it still does approximately the same amount of work)(This establishes an upper bound on benefit of reducing sync overhead.)

Use profilers/performance monitoring tools

Image is “CPU usage(使用率)” from activity monitor in OS X while browsing the web in Chrome (from a laptop with a quad-core Core i7 CPU)

Graph plots(绘制) percentage of time OS has scheduled a process thread onto a processor execution context

Not very helpful for optimizing performance

All modern processors have low-level event “performance counters”

Registers that count important details such as: instructions completed, clock ticks, L2/L3 cache hits/misses, bytes read from memory controller, etc

Example: Intel’s Performance Counter Monitor Tool provides a C++ API for accessing these registers

1 | PCM *m = PCM::getInstance(); |

Also see Intel VTune, PAPI, oprofile, etc

Understanding problem size issues can very helpful when assessing program performance

Understanding scaling

There can be complex interactions(交互) between the size of the problem to solve and the size of the parallel computer

Can impact load balance, overhead, arithmetic intensity, locality of data access

Effects can be dramatic and application dependent

Evaluating a machine with a fixed problem size can be problematic

Too small a problem:

Parallelism overheads dominate(掩盖) parallelism benefits (may even result in slow downs)

Problem size may be appropriate for small machines, but inappropriate for large ones (does not reflect realistic usage of large machine!)

Too large a problem: (problem size chosen to be appropriate for large machine)

Key working set may not “fit” in small machine (causing thrashing to disk, or key working set exceeds cache capacity, or can’t run at all)

When problem working set “fits” in a large machine but not small one, super-linear speedups can occur

Can be desirable to scale problem size as machine sizes grow

(buy a bigger machine to compute more, rather than just compute the same problem faster)

Summary of tips

Measure, measure, measure…

Establish high watermarks for your program

Are you compute, synchronization, or bandwidth bound?

Be aware of scaling issues

Is the problem “well sized” for the machine?

Assignment 2: Scheduling Task Graphs on a Multi-Core CPU

具体内容和实现

[WorldClass/Stanford CS149 Parallel Computing/asst2 at master · tiny-star3/WorldClass](https://github.com/tiny-star3/WorldClass/tree/master/Stanford CS149 Parallel Computing/asst2)

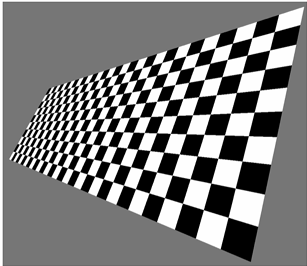

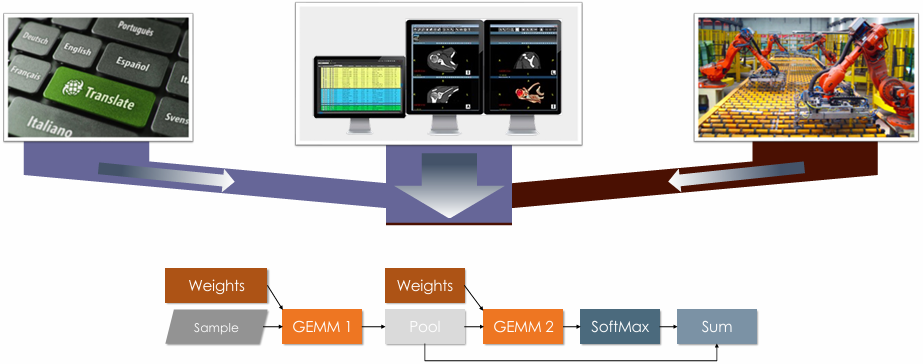

Lecture 7: GPU Architecture & CUDA Programming

GPU compute mode

Review: how to run code on a CPU

Lets say a user wants to run a program on a multi-core CPU…

OS loads program text into memory

OS selects CPU execution context

OS interrupts processor, prepares execution context (sets contents of registers, program counter, etc. to prepare execution context)

Go!

Processor begins executing instructions from within the environment maintained in the execution context

How to run code on a GPU (prior to 2007)

Let’s say a user wants to draw a picture using a GPU…

Application (via graphics driver) provides GPU shader program binaries

Application sets graphics pipeline(管道) parameters (e.g., output image size)

Application provides GPU a buffer of vertices

Application sends GPU a “draw” command: drawPrimitives(vertex_buffer)

This was the only interface to GPU hardware

GPU hardware could only execute graphics pipeline computations

NVIDIA Tesla architecture (2007)

First alternative, non-graphics-specific (“compute mode”) interface to GPU hardware

Let’s say a user wants to run a non-graphics program on the GPU’s programmable cores…

Application can allocate buffers in GPU memory and copy data to/from buffers

Application (via graphics driver) provides GPU a single kernel program binary

Application tells GPU to run the kernel in an SPMD fashion (“run N instances of this kernel”) launch(myKernel, N)

Interestingly, this is a far simpler operation than the graphics operation drawPrimitives()

CUDA programming language

Introduced in 2007 with NVIDIA Tesla architecture

“C-like” language to express programs that run on GPUs using the compute-mode hardware interface

Relatively low-level: CUDA’s abstractions closely match the capabilities/performance characteristics of modern GPUs (design goal: maintain low abstraction distance)

CUDA programming abstractions

Describe CUDA abstractions using CUDA terminology

Specifically, be careful with the use of the term “CUDA thread”. A CUDA thread presents a similar abstraction as a pthread in that both correspond to logical threads of control, but the implementation of a CUDA thread is very different

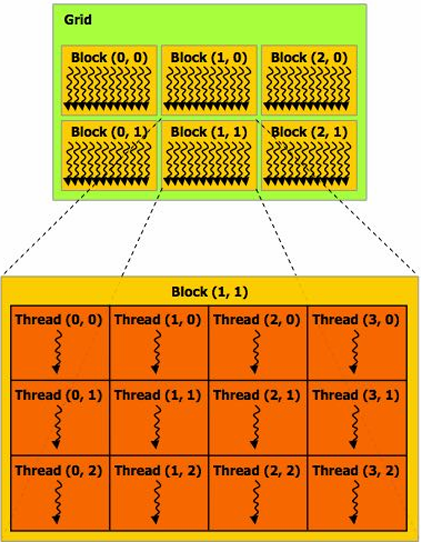

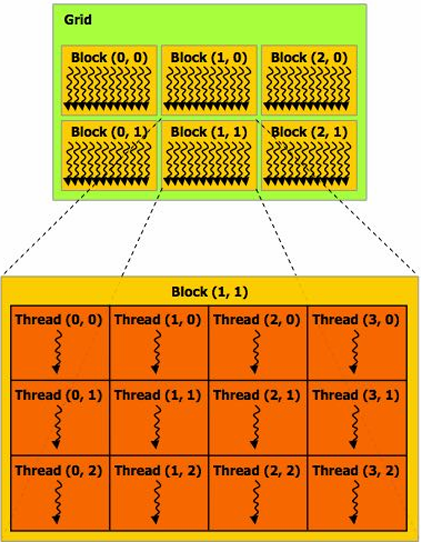

CUDA programs consist of a hierarchy of concurrent threads

Thread IDs can be up to 3-dimensional (2D example below)

Multi-dimensional thread ids are convenient for problems that are naturally N-D

Basic CUDA syntax

1 | // Regular application thread running on CPU (the “host”) |

1 | // SPMD execution of device kernel function: |

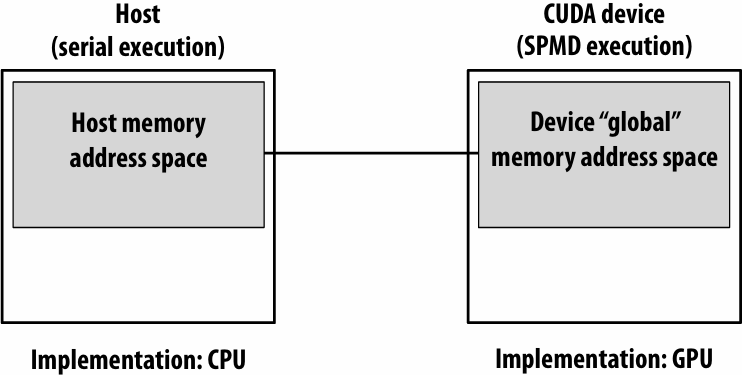

Clear separation of host and device code

Separation of execution into host and device code is performed statically by the programmer

Number of SPMD “CUDA threads” is explicit in the program

Number of kernel invocations(调用) is not determined by size of data collection(Block内都会调用)

(a kernel launch is not specified by map(kernel, collection) as was the case with graphics shader programming)

1 | // Regular application thread running on CPU (the “host”) |

1 | // CUDA kernel definition |

CUDA execution model

CUDA memory model

Distinct(独立的) host and device address spaces

memcpy primitive

Move data between address spaces

1 | float* A = new float[N]; // allocate buffer in host mem |

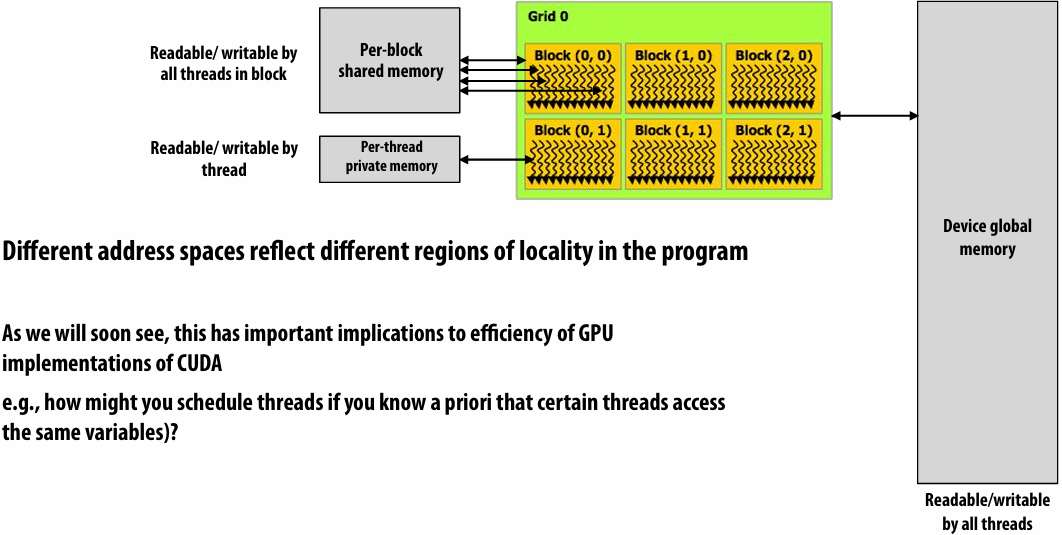

CUDA device memory model

Three distinct types of address spaces visible to kernels

priori(先验)

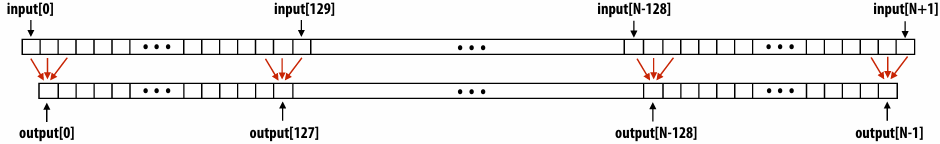

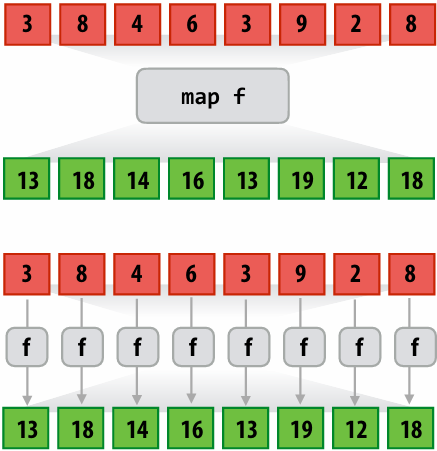

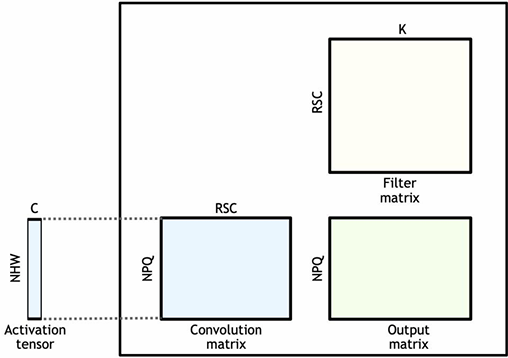

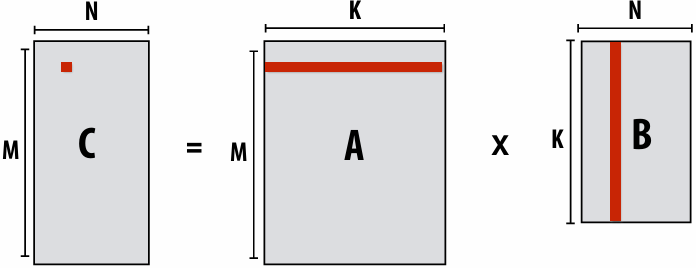

CUDA example: 1D convolution(卷积)

output[i] = (input[i] + input[i+1] + input[i+2]) / 3.f;

1D convolution in CUDA (version 1)

One thread per output element

1 | // CUDA Kernel |

1 | // Host code |

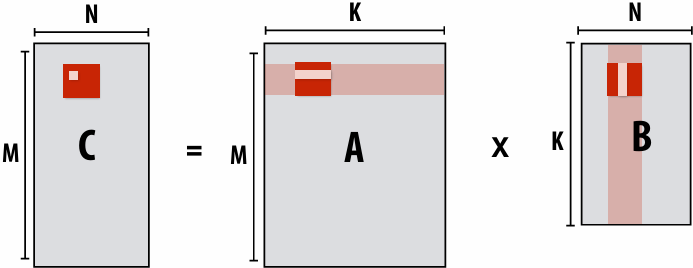

1D convolution in CUDA (version 2)

One thread per output element: stage input data in per-block shared memory

1 | // CUDA Kernel |

1 | // Host code |

CUDA synchronization constructs

__syncthreads()

Barrier: wait for all threads in the block to arrive at this point

Atomic operations

e.g., float atomicAdd(float* addr, float amount)

CUDA provides atomic operations on both global memory addresses and per-block shared memory addresses

Host/device synchronization

Implicit barrier across all threads at return of kernel

Summary: CUDA abstractions

Execution: thread hierarchy

Bulk launch of many threads (this is imprecise… I’ll clarify later)

Two-level hierarchy: threads are grouped into thread blocks

Distributed address space

Built-in memcpy primitives to copy between host and device address spaces

Three different types of device address spaces

Per thread, per block (“shared”), or per program (“global”)

Barrier synchronization primitive for threads in thread block

Atomic primitives for additional synchronization (shared and global variables)

SPMD vs. SIMD

SPMD is a programming model question independent of the implementation

So like ISPC is an SPMD programming model, CUDA is a SPMD programming model

What that means is I write one program, like this program right here, and the system will run it many times with different thread IDs

Now how we execute all those threads or all those instances efficiently is up to the implementation

And ISPC use SIMD the instructions to execute things very efficiently

CUDA implementation on modern GPUs

CUDA semantics

1 |

|

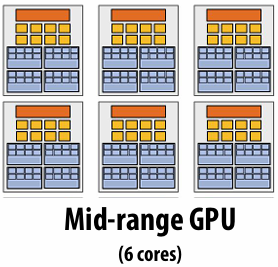

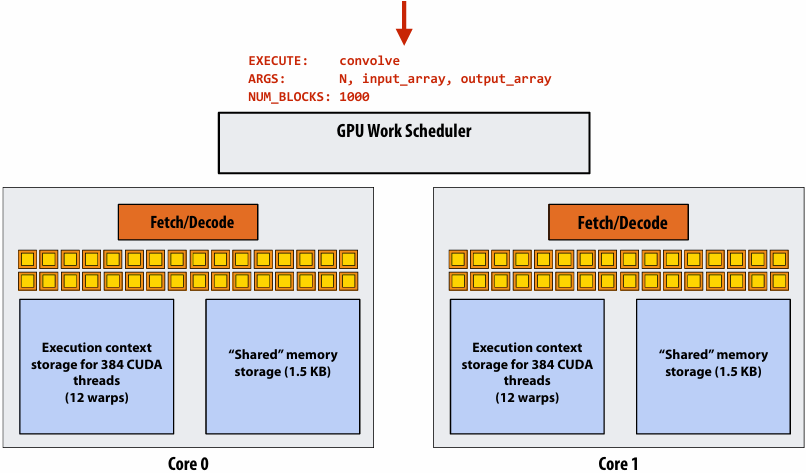

Assigning work

Desirable for CUDA program to run on all of these GPUs without modification

Note: there is no concept of num_cores in the CUDA programs I have shown you. (CUDA thread launch is similar in spirit to a forall loop in data parallel model examples)

CUDA compilation

A compiled CUDA device binary includes:

Program text (instructions)

Information about required resources:

128 threads per block

B bytes of local data per thread

128+2=130 floats (520 bytes) of shared space per thread block

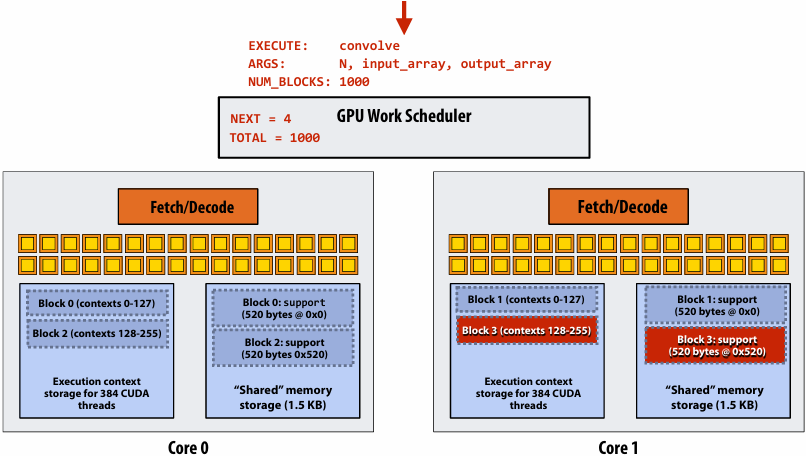

CUDA thread-block assignment

More detail on GPU architecture

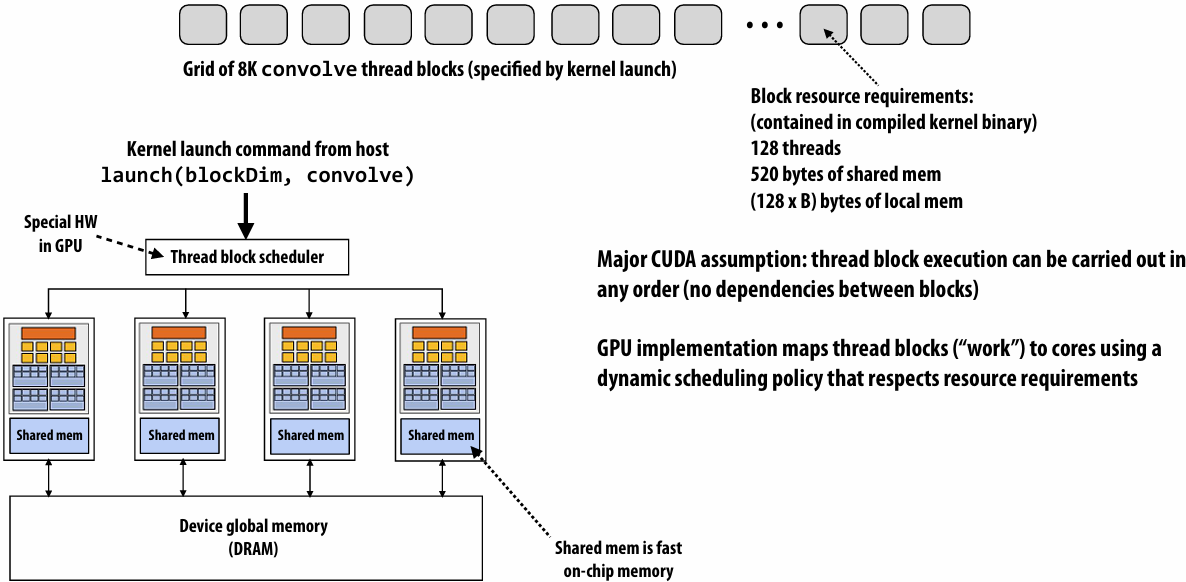

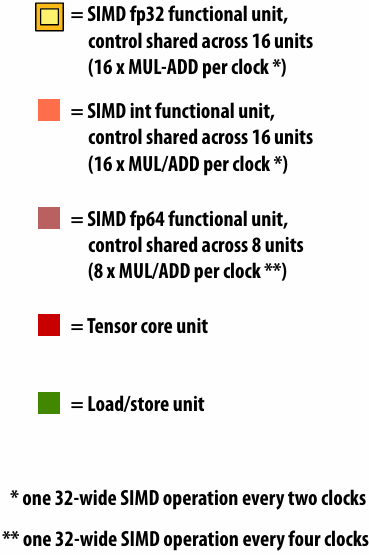

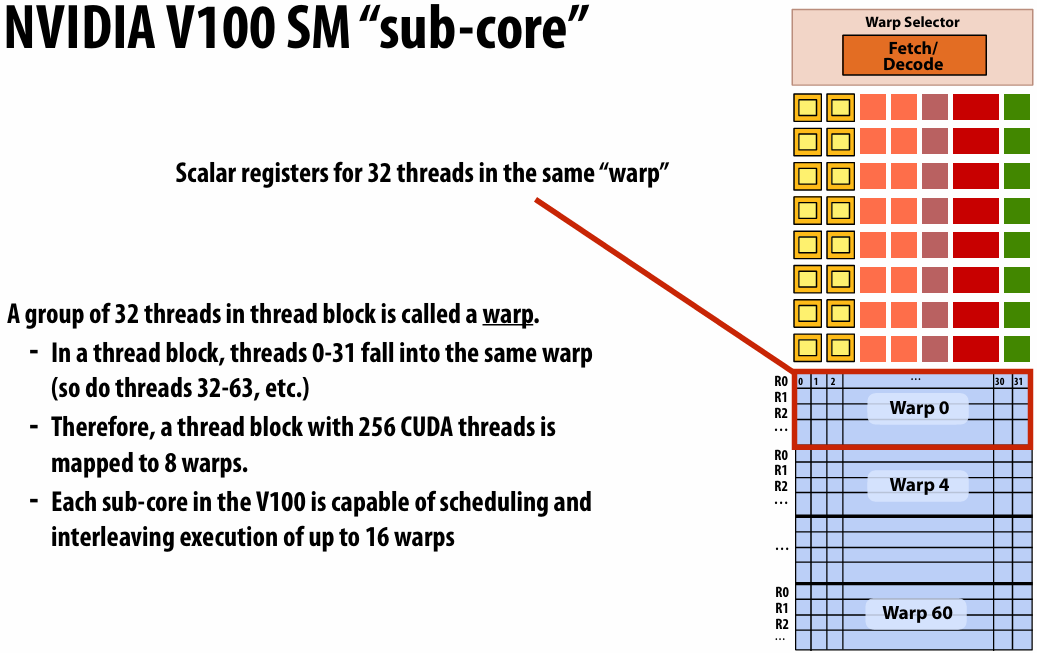

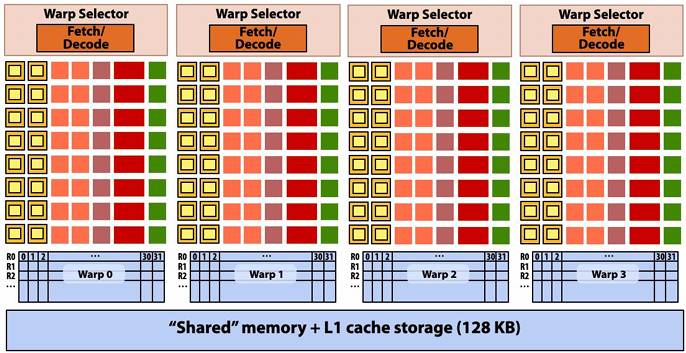

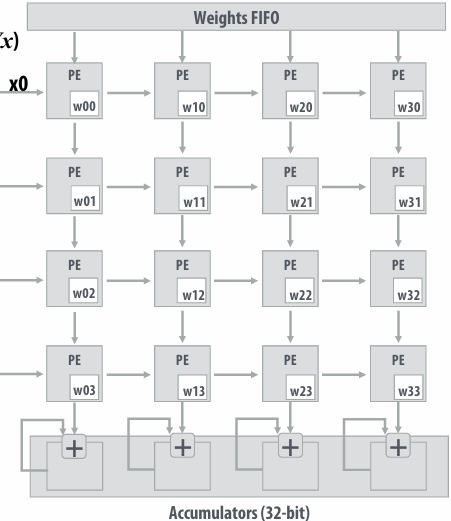

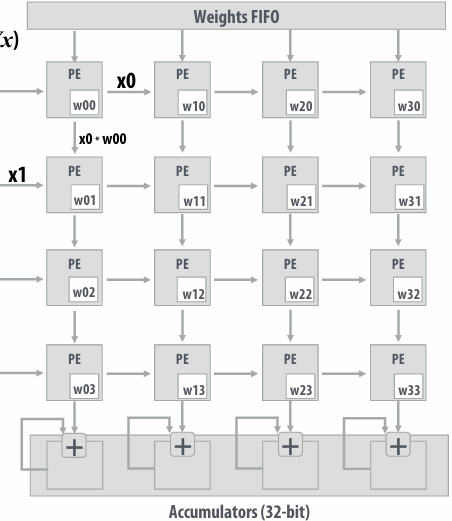

NVIDIA V100 SM “sub-core”

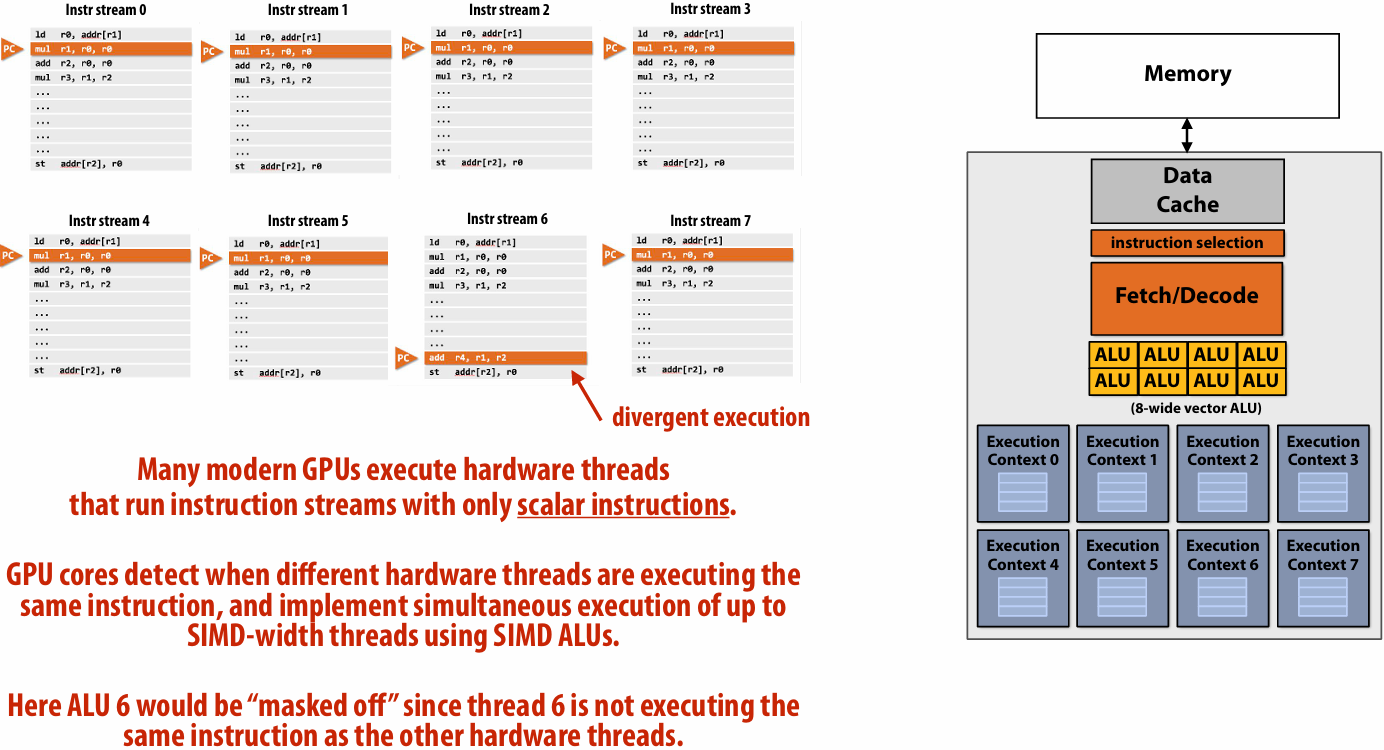

Threads in a warp are executed in a SIMD manner, **if they share the same instruction**

NVIDIA calls this SIMT (single instruction multiple CUDA thread)

If the 32 CUDA threads do not share the same instruction, performance can suffer due to divergent execution

This mapping is similar to how ISPC runs program instances in a gang(But GPU hardware is dynamically checking whether 32 independent CUDA threads share an instruction, and if this is true, it executes all 32 threads in a SIMD manner. The CUDA program is not compiled to SIMD instructions like ISPC gangs)(一个硬件实现,一个软件实现)

A warp is not part of CUDA, but is an important CUDA implementation detail on modern NVIDIA GPUs

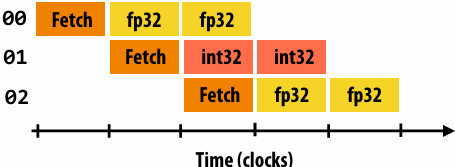

Instruction execution

Instruction stream for CUDA threads in a warp…

(note in this example all instructions are independent)

00 fp32 mul r0 r1 r2

01 int32 add r3 r4 r5

02 fp32 mul r6 r7 r8

…

Remember, entire warp of CUDA threads is running this instruction stream

So each instruction is run by all 32 CUDA threads in the warp

Since there are 16 ALUs, running the instruction for the entire warp takes two clocks

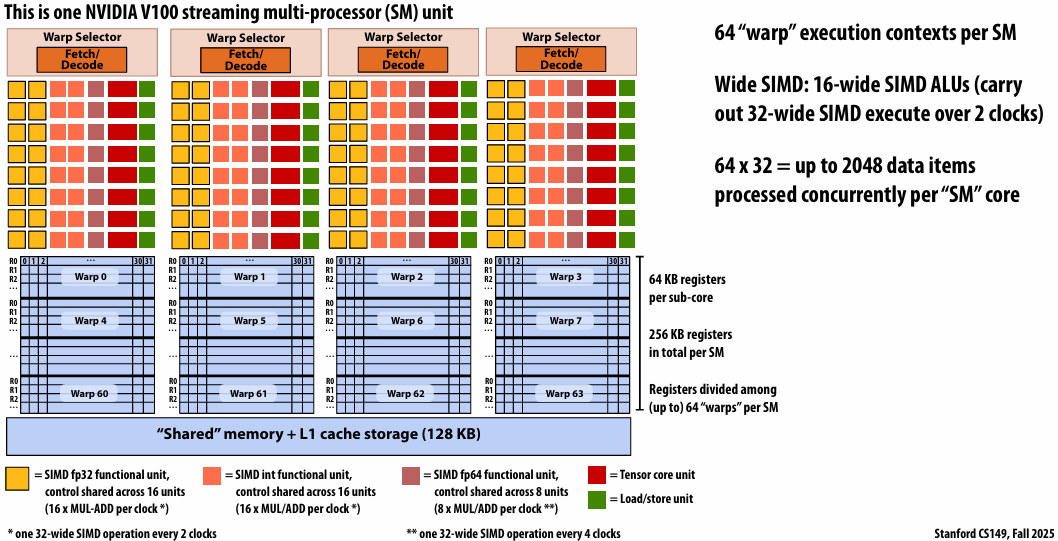

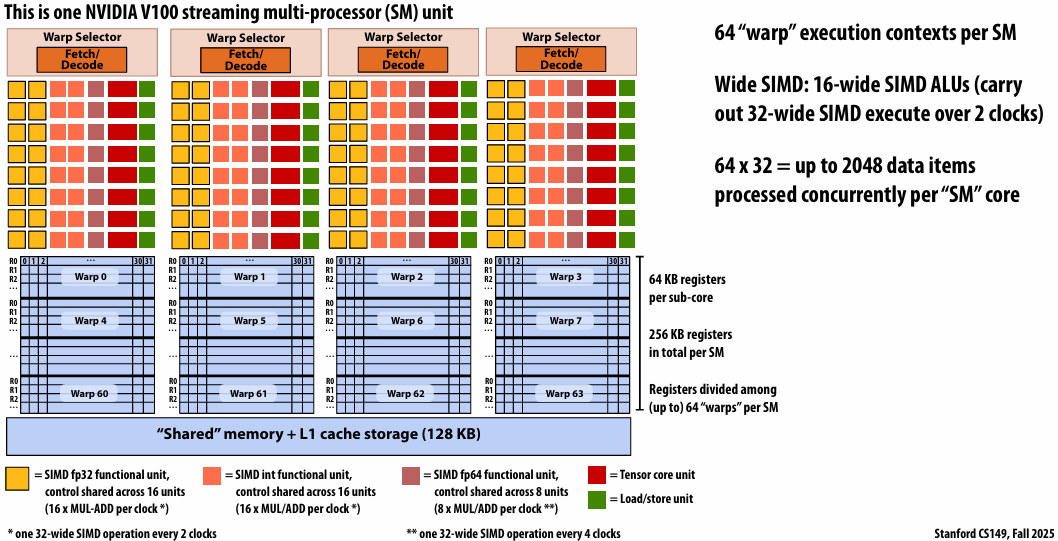

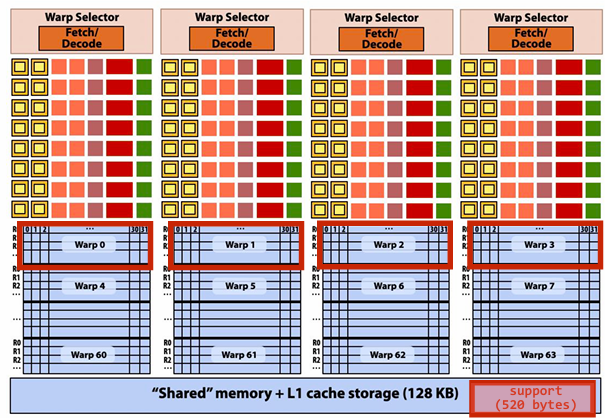

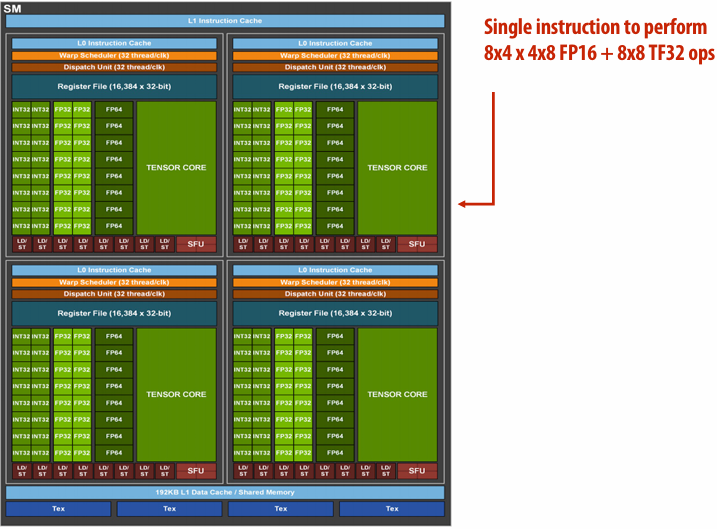

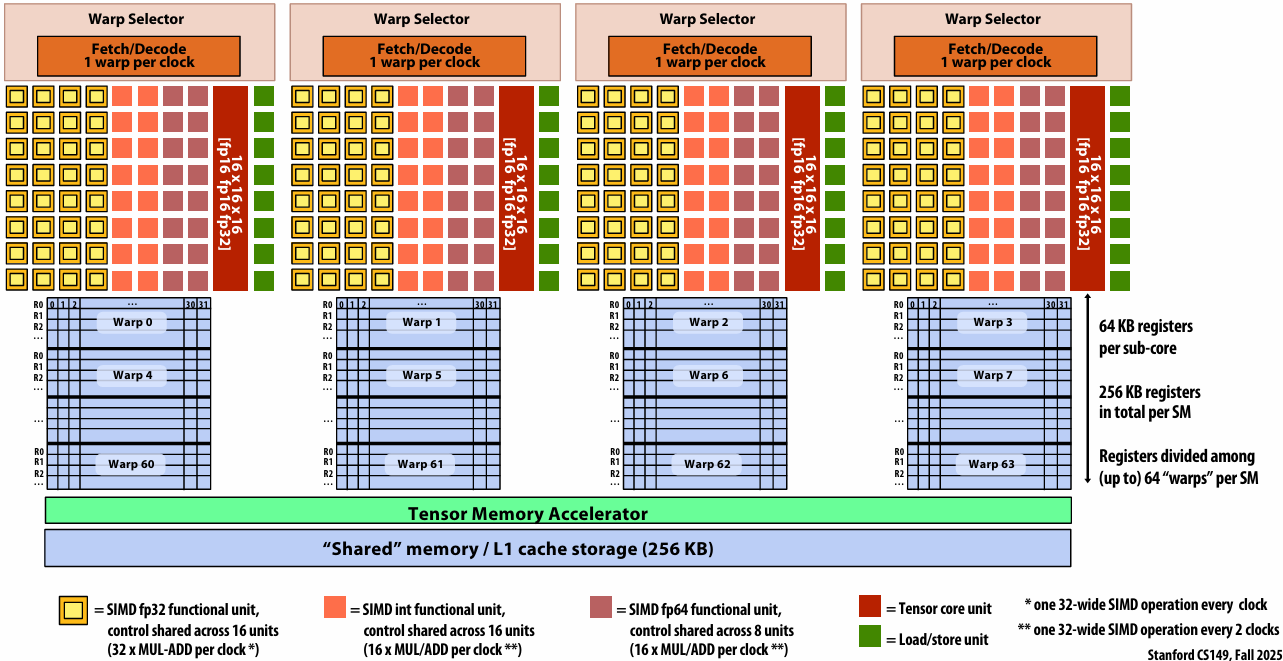

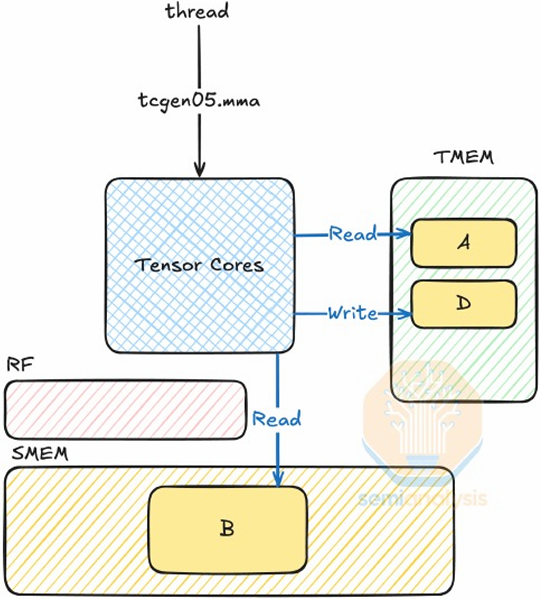

NVIDIA V100 GPU SM

This is one NVIDIA V100 streaming multi-processor (SM) unit

Running a thread block on a V100 SM

A convolve thread block is executed by 4 warps(4 warps x 32 threads/warp = 128 CUDA threads per block)

1 |

|

SM core operation each clock:

Each sub-core selects one runnable warp (from the 16 warps in its partition)

Each sub-core runs next instruction for the CUDA threads in the warp (this instruction may apply to all or a subset of the CUDA threads in a warp depending on divergence)

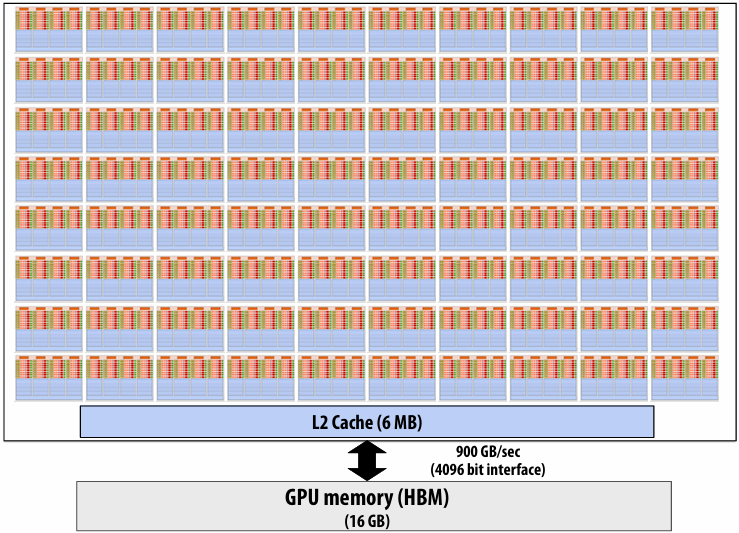

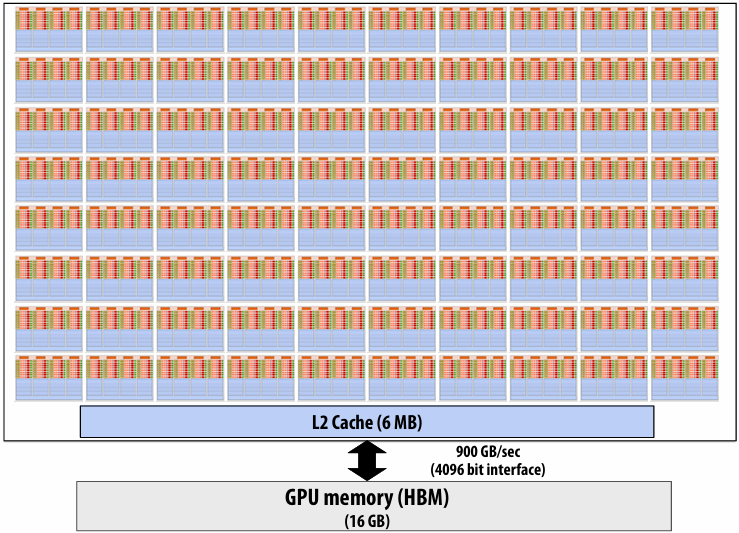

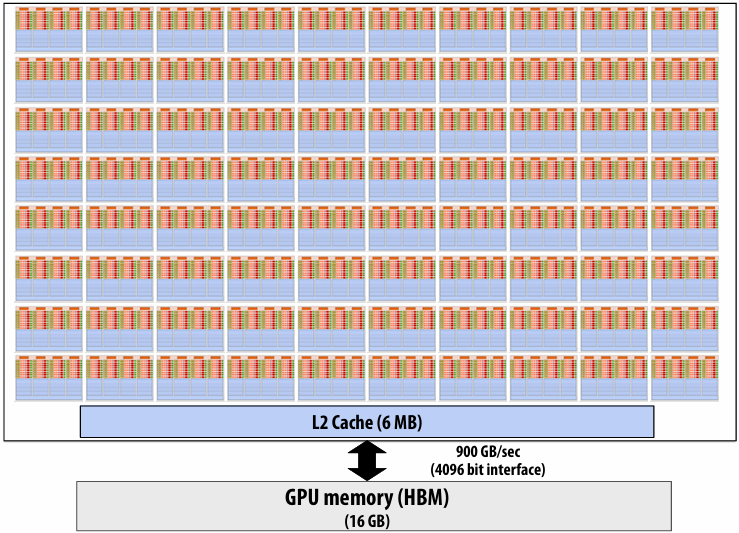

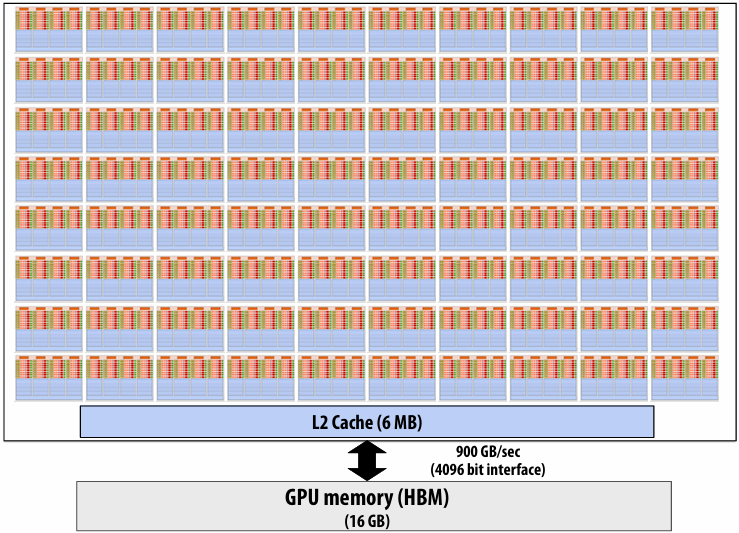

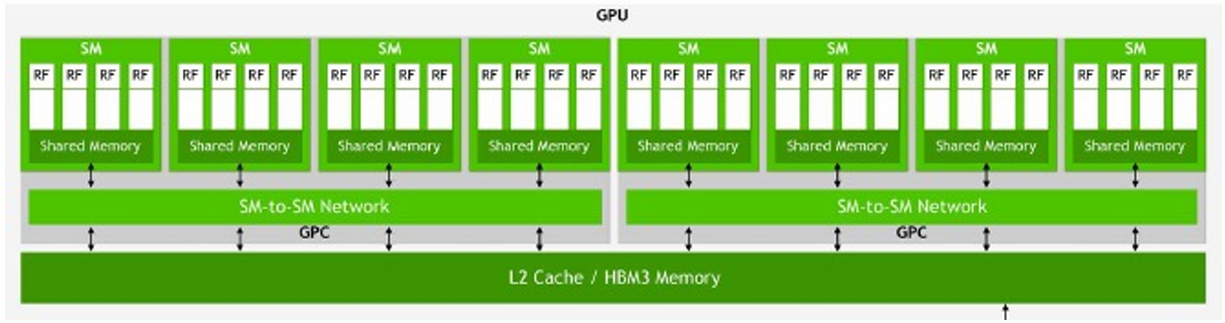

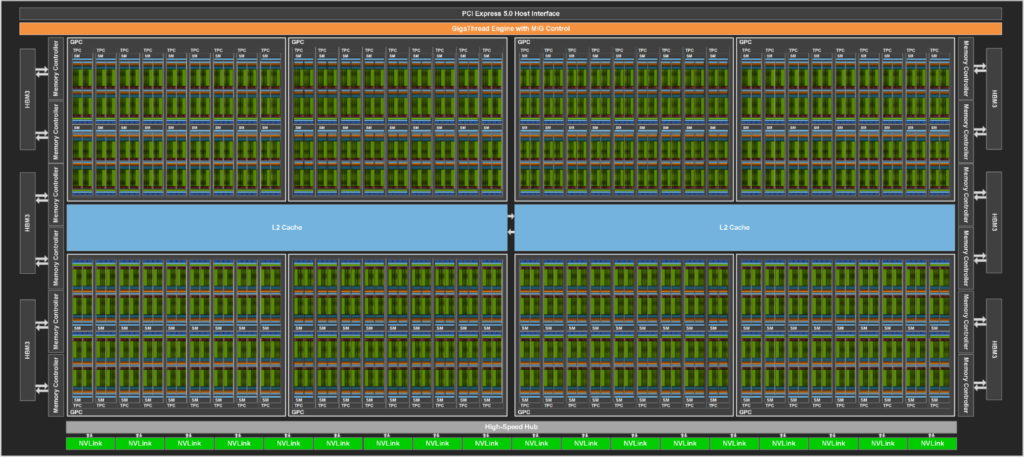

NVIDIA V100 GPU (80 SMs)

Summary: geometry of the V100 GPU

1.245 GHz clock

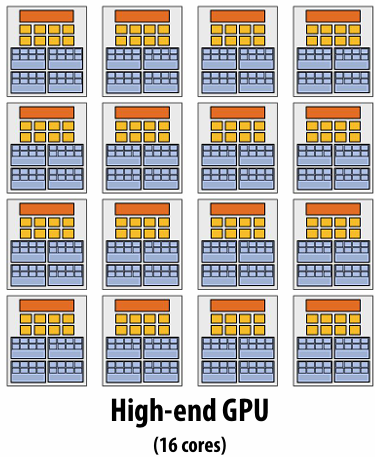

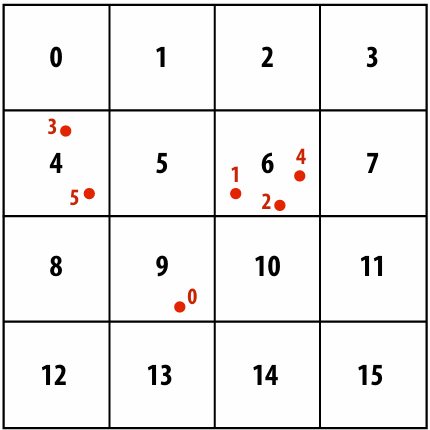

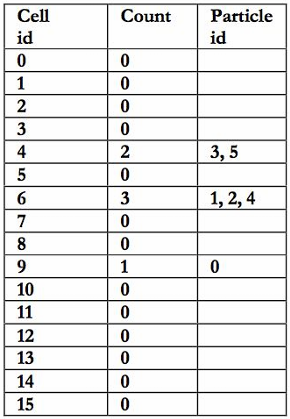

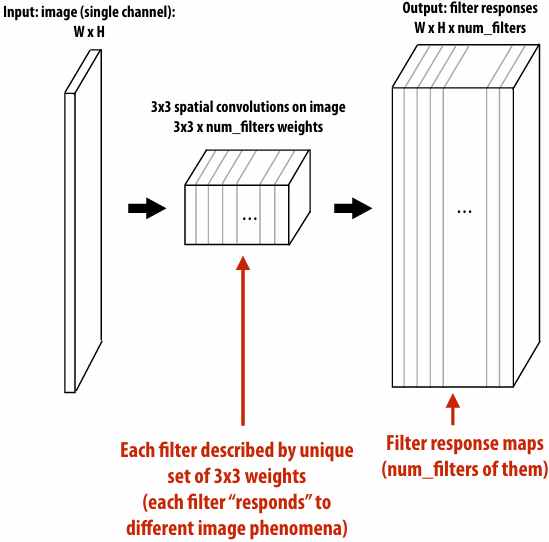

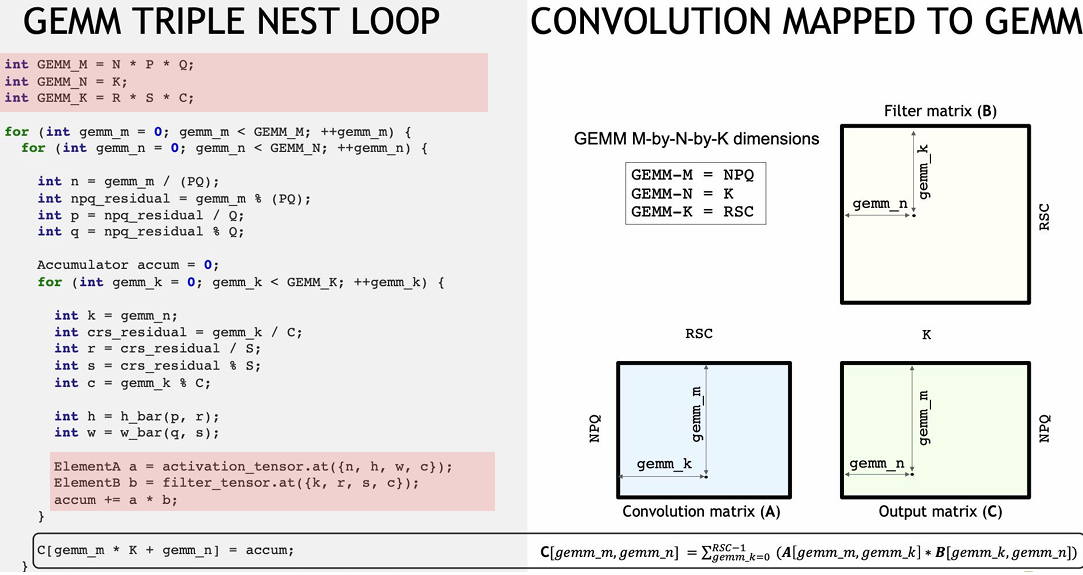

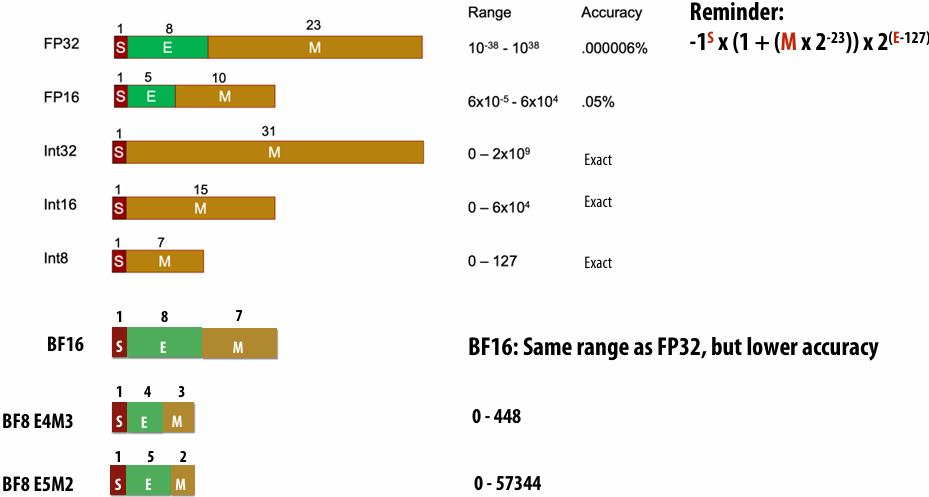

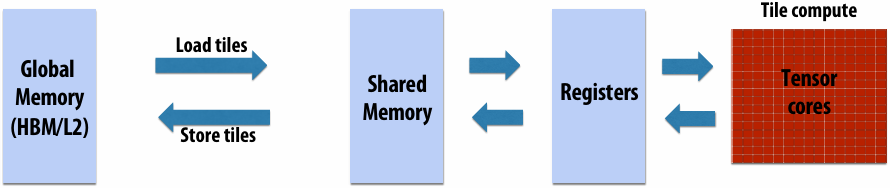

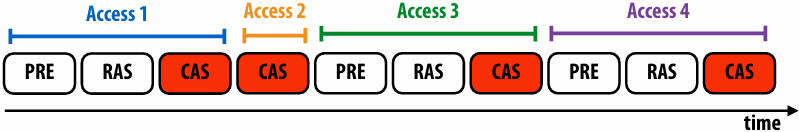

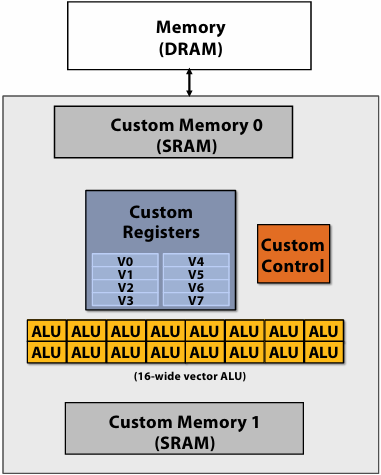

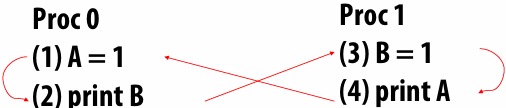

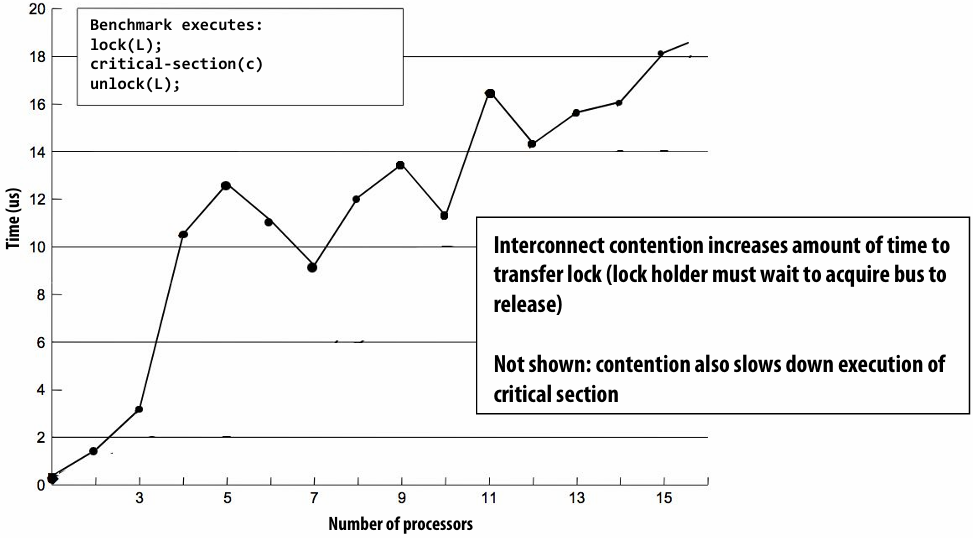

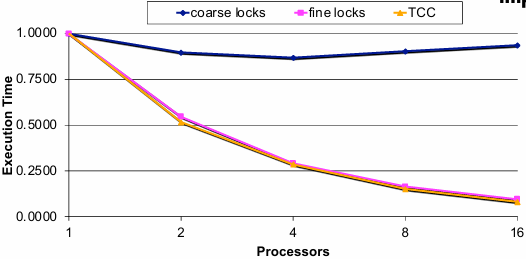

80 SM cores per chip